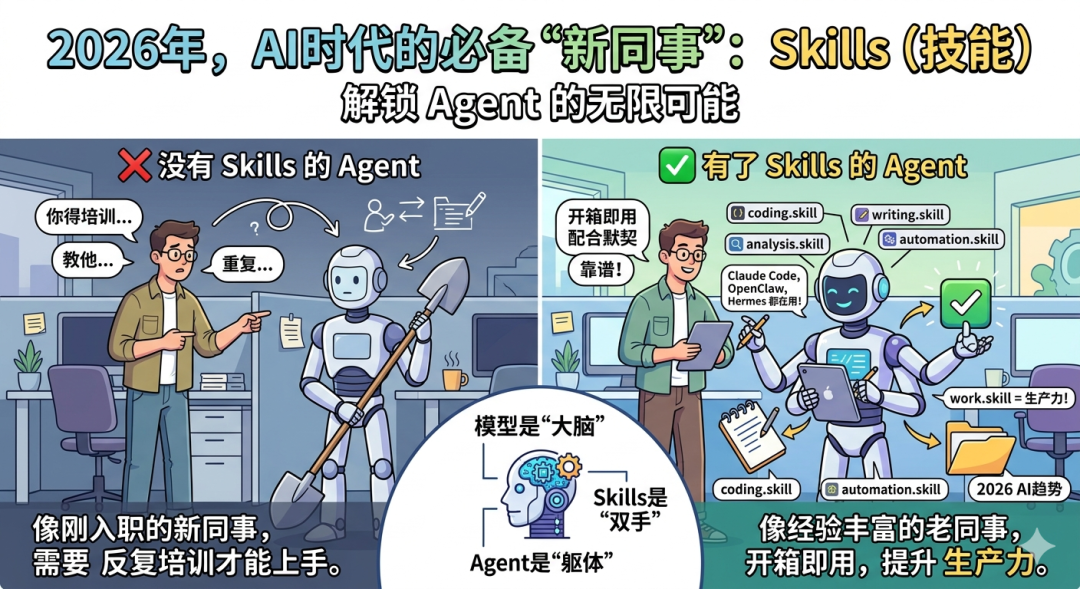

Happy Horse is an online creation workstation that integrates leading AI video and AI image generation models. Its in-house model, a Transformer with roughly 10 billion parameters, generates audio and video in a single forward pass: it produces cinematic video from text or images and, in the same pass, matching ambient sound, dialogue, and action sound effects, without relying on a separate audio pipeline.

In addition to its in-house native audio/video model, Happy Horse integrates other engines, including Kling 3.0 (multi-camera continuous narrative), Veo 3.1 (broadcast-quality short films), GPT Image 2 (high-precision text rendering in images), Nano Banana Pro (character identity locking), Seedream 5.0 (native 4K output), and Flux 2 Pro (10-second rendering). Users do not need a high-performance graphics card or any local software; cross-model audio, video, and digital asset production runs entirely in the browser. Whether the task is batch iteration of e-commerce product images, 3D asset design for games, or short videos presented by a virtual digital human, Happy Horse offers creators a one-stop content production service with a low barrier to entry.

Function List

- Single-step native audio/video synchronization: Based on a 15 billion parameter model, it generates high-quality video together with synchronized audio (ambient background sound, character dialogue, and action sound effects) aligned to the picture, so video and audio no longer have to be produced in separate steps.

- Aggregation of leading AI models: A single console gives access to the Happy Horse in-house model as well as Kling 3.0, Veo 3.1, GPT Image 2, Nano Banana Pro, and other engines.

- Character trait locking (Nano Banana Pro): Upload 4 to 8 character reference images, and the engine treats the character's facial identity as a hard constraint across new poses, outfits, and camera angles, enabling consistent three-view turnaround sheets and sticker-pack designs without identity drift.

- High-precision text generation and typesetting (GPT Image 2): Renders text in images with up to 99% accuracy (Chinese, Latin, and other scripts), which suits posters, signboards, and clothing that must show text with an exact spelling.

- Advanced motion transfer (Motion Control): Extracts the skeletal motion and physical dynamics from a reference video and transfers them onto a single still photo of a person in one click, producing natural, smooth, professional-grade dance or action videos.

- Lip-sync for virtual digital human dialogue: Upload any portrait photo with clear facial features and combine it with text or audio input to generate a multi-character dialogue video in which the lip movements match the voice.

- Cinematic multi-camera narrative and broadcast quality (Kling & Veo): Generate multi-camera footage up to 15 seconds long with Kling 3.0, or render high dynamic range, broadcast-quality clips with spatial stereo sound using Veo 3.1.

- Native 4K output and fast rendering: The Seedream 5.0 engine outputs native 4K images, and the Flux 2 Pro engine outputs high-quality images in under 10 seconds, which supports high-volume variant testing.

- Zero-configuration cloud experience: Runs entirely in the web browser, with no hardware requirements on the local computer. All generated content is watermark-free and can be downloaded directly in its native format.

Usage Guide

I. Getting Started and Preparing the Working Environment

Welcome to the Happy Horse platform! This platform is dedicated to bringing industrial-grade AI rendering capabilities directly to every creator.

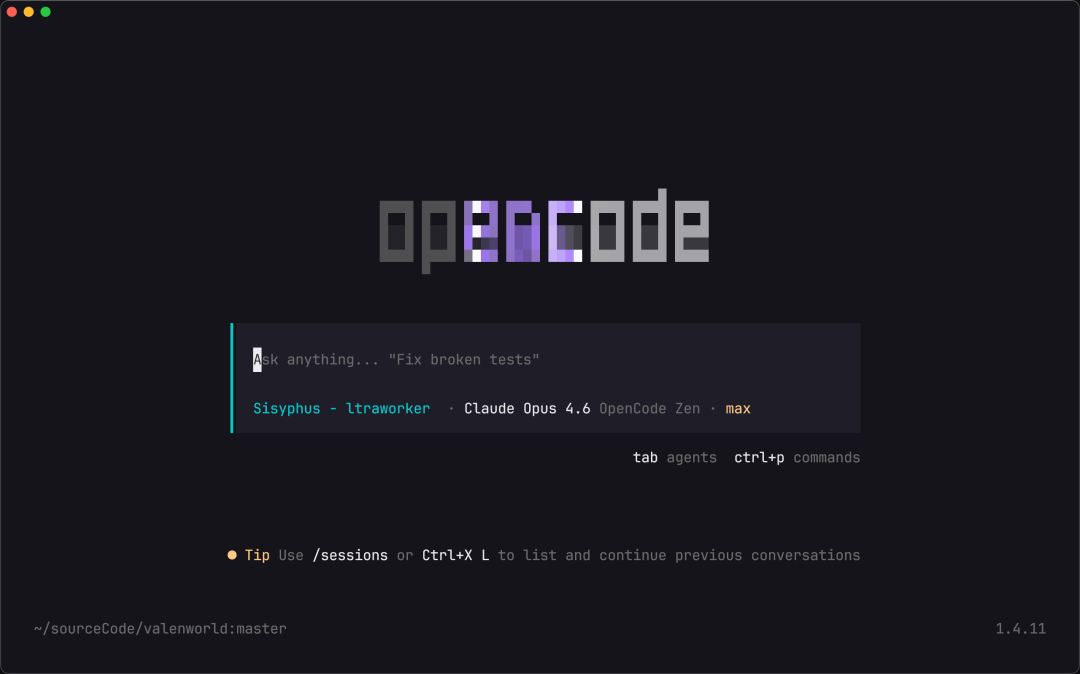

- Direct access without installation: Happy Horse uses a purely cloud-based architecture, so you don't need to buy an expensive discrete graphics card (GPU) or download gigabytes of local deployment packages (such as a Stable Diffusion environment). Visit the official website from your computer using any major browser (Chrome or Edge recommended).

- Unified workbench layout: After registering and logging in, you enter the core workbench. The interface is divided into three functional areas: the left sidebar is the “Multi-Engine Switching Navigation Bar” (where you switch between video and image generation models with one click), the center is the “Text Prompt and Material Upload Area”, and the right side is the “Resolution, Aspect Ratio, and Professional Parameters Settings Panel”. All your digital assets are automatically synchronized and stored in the cloud.

II. Core Functions Explained: Generate AI Video Containing Native Audio (Happy Horse Core Model)

The main technical advance of Happy Horse's in-house model is “Audio-Visual Isomorphic Rendering”, which means your videos come with a natural ambient soundtrack.

- Step 1: In the left model navigation bar, click and select “Happy Horse Video”.

- Step 2: Write the picture and sound prompt: In the text box in the center, enter a natural language description. You can describe not only the picture, but also the sound. For example, “A brown stallion gallops merrily through a dewy morning meadow, the crisp sound of hooves echoing, with early morning birdsong in the background. Cinematic lighting, 8k resolution.”

- Step 3: Enable native audio synchronization: In the list of features below the input box, make sure the “Enable Native Audio” option is checked. The underlying algorithm then feeds your text prompt into both the video and the audio Transformer decoders.

- Step 4: Adjust the parameter configuration: In the right panel, select the aspect ratio according to the platform where you will publish the video (e.g. 16:9 for landscape web video, 9:16 for short video platforms).

- Step 5: Render and save: Click “Generate”. The system outputs the MP4 video and the corresponding stereo audio track in one forward pass. Click play in the center preview window to check whether the dialogue and sound effects fit the picture, then click the button in the bottom right corner to download the file without a watermark.
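The choices made in Steps 2 to 4 can be captured in a small helper before anything is pasted into the workbench. The following is only a sketch: the platform is described here as a browser interface, so nothing below calls it, and the names `ASPECT_RATIOS`, `VideoRequest`, `build_prompt`, and `make_request` are hypothetical. The two aspect ratios come from the examples in Step 4.

```python
from dataclasses import dataclass

# Hypothetical lookup built from the two examples given in Step 4.
ASPECT_RATIOS = {
    "web_landscape": "16:9",
    "short_video": "9:16",
}


@dataclass
class VideoRequest:
    """Settings to enter in the workbench; not an actual API payload."""
    prompt: str
    aspect_ratio: str
    native_audio: bool = True  # mirrors the "Enable Native Audio" checkbox


def build_prompt(picture: str, sounds: list[str], style: str = "") -> str:
    """Combine the visual description, the sound cues, and an optional style note."""
    parts = [picture.strip().rstrip(".")]
    if sounds:
        parts.append("with " + " and ".join(s.strip() for s in sounds))
    text = ", ".join(parts) + "."
    if style:
        text += " " + style.strip()
    return text


def make_request(picture: str, sounds: list[str], target: str, style: str = "") -> VideoRequest:
    """Pick the aspect ratio for the publishing target and assemble the prompt."""
    if target not in ASPECT_RATIOS:
        raise ValueError(f"unknown publishing target: {target!r}")
    return VideoRequest(build_prompt(picture, sounds, style), ASPECT_RATIOS[target])


if __name__ == "__main__":
    request = make_request(
        "A brown stallion gallops through a dewy morning meadow",
        ["the crisp sound of hooves", "early morning birdsong in the background"],
        "short_video",
        "Cinematic lighting.",
    )
    print(request)
```

Keeping the sound cues as a separate list makes it harder to forget them, which matters here because the audio is generated from the same prompt as the picture.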

III. Core Functions Explained: Building a Consistent Character Gallery (Nano Banana Pro)

For game artists, creators of novel-promotion content, and comic book creators, the biggest pain point of AI drawing is that the main character looks different every time. The Nano Banana Pro engine is designed to solve this character identity drift.

- Step 1: Switch to the “Image Generation” module in the left navigation bar and select the “Nano Banana Pro” engine from the drop-down menu.

- Step 2: Upload the baseline identity reference images: In the Reference Images area, upload 4 to 8 photos of the character whose facial and physical features you want to lock. Ideally these photos show the character from different views (e.g. front, side). In the background, the system extracts the character's skeletal keypoints and identity vectors.

- Step 3: Define new poses and scenes: Once the features are locked, simply describe the new action or costume in the prompt text box. For example, “This character is walking in the rain in a modern city with an umbrella, wearing a black trench coat, surrounded by cyberpunk neon lights.”

- Step 4: Generate assets in batches: Set the desired size on the right side (the engine natively supports up to 11 aspect ratios) and click Generate. The resulting image keeps the original character's facial and body features intact. You only need to change the prompt to generate a whole set of visually uniform images of the character with different expressions and body movements.
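Before uploading, it can help to check the reference set locally and to prepare the batch of prompts in advance. The sketch below assumes only what the steps above state (4 to 8 reference images, one prompt per new pose or scene); the function names are hypothetical and nothing here talks to the platform.

```python
from pathlib import Path

# Limits taken from Step 2: the engine expects 4 to 8 reference images.
MIN_REFS, MAX_REFS = 4, 8


def check_reference_set(paths: list[str]) -> list[Path]:
    """Drop duplicate paths (keeping order) and verify the count is within range."""
    unique = list(dict.fromkeys(Path(p) for p in paths))
    if not MIN_REFS <= len(unique) <= MAX_REFS:
        raise ValueError(
            f"expected {MIN_REFS} to {MAX_REFS} reference images, got {len(unique)}"
        )
    return unique


def prompt_variants(subject: str, actions: list[str]) -> list[str]:
    """Build one prompt per action; only the action changes between prompts."""
    return [f"{subject} {action.strip()}" for action in actions]


if __name__ == "__main__":
    refs = check_reference_set(["front.png", "side.png", "back.png", "closeup.png"])
    prompts = prompt_variants(
        "This character",
        [
            "is walking in the rain with an umbrella",
            "is laughing at a street market",
            "is reading under a lamp at night",
        ],
    )
    print(len(refs), "reference images")
    for prompt in prompts:
        print(prompt)
```

Varying only the action while keeping the subject phrase fixed matches the workflow in Step 4, where the reference images carry the identity and the prompt carries the change.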

IV. Core Functions Explained: Accurate Text Layout & High-Throughput Rendering (GPT Image 2 & Flux 2 Pro)

If you work on commercial posters or e-commerce advertisements, which place high demands on spelling accuracy and output speed, the following two models are recommended.

- Image generation with typeset text (GPT Image 2): Choose the GPT Image 2 model when a specific English phrase or brand name needs to appear on clothing, lighted signs, or mugs. When entering the prompt, wrap the text you want rendered in English double quotes. For example, “A vintage-textured street photograph with "HAPPY HORSE CLUB" clearly printed on the awning of a cafe in the center of the frame.” The resulting image renders the spelling accurately, virtually eliminating garbled text. The engine also supports uploading up to 16 reference images for fusion editing, and you can provide color references and sketch references at the same time to control the image precisely.

- High-speed, high-volume e-commerce image generation (Flux 2 Pro): After switching to Flux 2 Pro, you only need to describe the product's surroundings in the prompt to render one 1K HD image about every 10 seconds. At this speed you can generate repeatedly to produce hundreds of display posters of the same product with different lighting, then pick the most satisfactory one to publish, which greatly improves the efficiency of A/B testing.
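The two tips above, quoting the exact text and varying the surroundings for A/B tests, combine naturally when preparing prompts. The sketch below is illustrative only: the helper names are hypothetical, the prompt wording is an example rather than a required template, and the time estimate assumes images render one after another at the roughly 10 seconds per image quoted above.

```python
from itertools import product


def quoted(text: str) -> str:
    """Wrap the text to render in straight double quotes, as the tip above recommends."""
    if '"' in text:
        raise ValueError("text to render must not itself contain double quotes")
    return f'"{text}"'


def poster_prompts(item: str, text: str, backgrounds: list[str], lightings: list[str]) -> list[str]:
    """One prompt per (background, lighting) pair; the product and text stay fixed."""
    return [
        f"{item} with {quoted(text)} printed on it, {bg}, {light}"
        for bg, light in product(backgrounds, lightings)
    ]


def estimated_seconds(n_images: int, seconds_per_image: int = 10) -> int:
    """Rough sequential estimate; 10 s per image is the figure quoted in the article."""
    return n_images * seconds_per_image


if __name__ == "__main__":
    prompts = poster_prompts(
        "A ceramic mug",
        "HAPPY HORSE CLUB",
        ["on a marble counter", "on a wooden desk"],
        ["soft morning light", "neon glow", "studio lighting"],
    )
    for prompt in prompts:
        print(prompt)
    print(f"{len(prompts)} variants, about {estimated_seconds(len(prompts))} s in total")
```

Generating the full grid of backgrounds and lighting conditions up front makes it easier to compare variants systematically instead of relying on ad hoc regeneration.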

V. Core Functions Explained: Motion Control & Lip-Sync

- Motion capture and transfer (Motion Control): Motion Control reproduces the movements from a real video on a still person in a photo. Open the “Motion Control” tool page and upload two files: a “still image” (which determines who appears in the final video) and a “reference video” containing the movements (which determines the dance or martial arts movements in the final video). After you click Start, the system extracts the skeletal motion trajectory from the video and drives the character in the still photo to perform it, which suits the rapid production of anime-style idol or dance demonstration videos.

- Talking avatars (lip-synced digital humans): This tool turns a still portrait into a talking video. Select “Lip-Sync” on the function page. First, upload a front-facing, half-length portrait photo; then type your prepared text in the input field (the system uses AI to convert it into speech), or upload a recorded MP3 voiceover file directly. The engine builds a 3D topology based on the movement of the mouth muscles and generates a long video. In the video, the subject of the still image blinks and moves its head naturally, and its lips open and close frame by frame to match the pronunciation of your text.

VI. Creation and preservation mechanisms

All features of the platform are designed to work out of the box. When you're satisfied with a piece of work you've generated, hover over its card and click to download it. Neither normal exports nor native 4K Ultra HD exports carry the platform's watermark, so you can import your assets directly into Premiere, CapCut (Jianying), or other editing and design software for the next step. Experiment with combining the strengths of different engines (e.g., creating an image with GPT Image 2, feeding it to Kling 3.0 to turn it into video, and dubbing it with Lip-Sync) to cover work that would otherwise take a whole production team.

Application Scenarios

- Previews of film-quality short films and multi-camera sketches

Creators can use the Happy Horse model together with Kling 3.0 to stitch footage together. From script prompts alone, the platform can generate high-quality video clips with matching environmental sound effects, physical collisions, and even original character dialogue in a single step, shortening the time needed to preview scene dynamics and polish the audio track in the post-production phase of a short drama.

- Game digital assets and serialized manga character design

Game artists and serial comic artists can upload 4 to 8 character sketches to Nano Banana Pro as a baseline reference. Whatever extreme actions or complex scene prompts are entered later, the system locks the character's facial proportions and body features as hard constraints, generating coherent three-view sheets, multi-view images, and expression packs, and avoiding the identity drift where an AI-drawn character's face changes with every image.

- E-commerce advertisement display and batch testing of product posters

With the Flux 2 Pro engine generating one 1K HD image about every 10 seconds, e-commerce artists and marketers can quickly produce hundreds of product poster variants with different backgrounds and atmospheres. Combined with GPT Image 2's accurate text layout (promotional slogans rendered correctly in the image itself), click-through rate (CTR) tests for different consumer groups can be run efficiently.

- Self-media narration and virtual digital human newscasts

There's no need to buy expensive facial capture equipment or hire professional actors. Self-media creators only need to upload a still photo with a clear face, together with a voiceover recording or typed lines, and the platform's Lip-Sync function produces a digital human broadcast video with realistic facial expressions and frame-by-frame lip alignment, which speeds up the production of popular-science and news commentary videos.

QA

- Do I need to buy a high-end graphics card or download heavy software to use Happy Horse?

No. Happy Horse is a purely cloud-based online generation workbench. All you need is a web browser and an internet connection to access all the integrated models (e.g. Kling, Veo, GPT Image). All compute-intensive rendering and the processing of models with tens of billions of parameters run on our cloud server cluster, with no requirements on your local computer or phone configuration.

- Do the AI videos generated by the platform come with sound? Or do I have to use other software for dubbing afterwards?

They come with native, high-quality sound. The platform's in-house Happy Horse model uses a single forward pass architecture: it interprets your prompt once and generates both high-quality video and native audio that fits the physical scene (including ambient background noise, sound effects of object movements, and even character dialogue), removing the limitation of traditional AI video, which has picture but no sound.

- Why does the same character I generated in other AI tools look different every time? Can you fix it?

Yes. If you need an exact match of your character's face, switch to the Nano Banana Pro engine in the workbench. Upload four to eight reference photos of the character, and the engine turns the character's identity into a mandatory rendering constraint. Whatever outfit, viewpoint, or action you generate, the character's identity stays consistent and does not drift.

- Is the generated video or image watermarked? Can it be used for commercial projects?

Audio, video, and image files generated and downloaded from the platform do not carry any platform watermark by default, so you can use them directly in your projects. For commercial use, the original digital content and assets generated by our underlying engine can be used freely in your commercial advertisements, self-media accounts, or game projects.

- Specific English words generated on images are always garbled or misspelled. Has the platform improved this?

Yes, substantially. The GPT Image 2 model built into the platform is specifically optimized for text rendering. Mark the text you want to generate (e.g. "Happy Horse") with double quotes in the prompt, and the model spells the phrase correctly in the generated image (e.g. on neon signs, coffee cups, posters) with an accuracy of up to 99%. It supports Latin, Chinese, and other scripts, largely eliminating garbled AI text.