Octopus is a simple, elegant, and efficient Large Language Model (LLM) API aggregation and load-balancing proxy designed for individual users and developers. With so many LLMs on the market, the challenge is managing APIs and multiple keys from many vendors efficiently. Octopus offers a one-stop solution: it gathers LLM APIs from multiple channels into a single local management panel for configuration and distribution. Whatever the underlying interface, whether OpenAI, Anthropic, or Gemini, Octopus converts seamlessly between protocol formats, so front-end applications can call models from any provider through one unified interface standard. The platform also supports attaching multiple API keys to a single channel, and mitigates rate limiting and errors under high concurrency with load-balancing strategies such as latency-based preference, round robin, random selection, and failover. Through an intuitive visual interface, Octopus provides built-in request statistics, token consumption monitoring, cost tracking, and automatic synchronization of upstream model pricing, covering the detailed management needs of power users who call many model APIs.

Feature List

- Multi-Channel Large Language Model Aggregation: Supports unified management and configuration of API channels from multiple mainstream Large Language Model (LLM) providers in a single system, centralizing and consolidating fragmented interfaces.

- Flexible Multi-Key Distribution: Supports configuring multiple API keys for the same channel. The keys are rotated automatically, so a single key hitting its rate limit no longer causes request failures.

- High-Availability Load Balancing: Built-in traffic distribution strategies, including Round Robin, Random, Failover, and Weighted, keep all outbound calls stable and smooth.

- Automatic Cross-Protocol Conversion: Native protocol translation between interface standards such as OpenAI Chat, OpenAI Responses, Anthropic API, and Gemini lets older clients call newer providers' models without changes.

- Latency-Based Endpoint Preference: For channels bound to multiple endpoints, the system measures the request latency of each endpoint and routes traffic to the one currently responding fastest.

- Automatic Model and Price Synchronization: Fetches the latest model pricing from the open-source models.dev database and syncs it into the local billing system; it can also refresh the list of models each upstream channel currently supports.

- Visual Statistics Dashboard: A minimal, data-rich web dashboard (Web UI) records in real time the status of each request, the exact token consumption, and the corresponding cost.

- Lightweight, Multi-Database Support: The service is extremely lightweight. It uses local SQLite by default for zero-configuration deployment in minutes, and can switch to MySQL or PostgreSQL for storing larger volumes of persistent log data.
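The list above does not specify how Octopus measures or compares endpoint latency. The following Python sketch illustrates one common approach under that assumption: an exponentially weighted moving average (EWMA) of observed response times per endpoint, with every endpoint probed at least once before comparison. The class name, the `alpha` smoothing factor, and the example URLs are hypothetical, not part of Octopus.

```python
class EndpointSelector:
    """Hypothetical latency-aware picker: keeps an exponentially weighted
    moving average (EWMA) of each endpoint's latency and routes to the fastest."""

    def __init__(self, endpoints, alpha=0.3):
        self.alpha = alpha  # weight given to the newest latency sample
        self.latency_ms = {url: None for url in endpoints}  # None = not measured yet

    def record(self, url, sample_ms):
        """Fold a new latency measurement into the endpoint's running average."""
        prev = self.latency_ms[url]
        if prev is None:
            self.latency_ms[url] = float(sample_ms)
        else:
            self.latency_ms[url] = self.alpha * sample_ms + (1 - self.alpha) * prev

    def pick(self):
        """Return an unmeasured endpoint first (so every node gets probed),
        otherwise the endpoint with the lowest average latency."""
        unmeasured = [url for url, avg in self.latency_ms.items() if avg is None]
        if unmeasured:
            return unmeasured[0]
        return min(self.latency_ms, key=self.latency_ms.get)
```

For example, after recording 420 ms for `https://a.example.com/v1` and 180 ms then 240 ms for `https://b.example.com/v1`, the second endpoint's average is 0.3 × 240 + 0.7 × 180 = 198 ms, so `pick()` returns it. A higher `alpha` reacts faster to latency spikes at the cost of noisier routing.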

Usage Guide

🚀 Octopus Installation and Operation Guide

Octopus aims to give individual developers and AI enthusiasts an efficient, minimalist LLM API management experience. To help you get started and make full use of it, this guide walks step by step through deployment, environment configuration, multi-channel aggregation, and client access. Follow the steps below to deploy your own private LLM routing hub.

📦 I. Service Deployment and Initialization Settings

The Octopus server compiles to a single executable with the optimized web front end embedded, so deployment is extremely simple and requires no complex environment setup.

1. Containerized deployment with Docker (highly recommended)

For most users, Docker provides a pure and easy-to-maintain isolated environment. Make sure Docker is installed on your host or server, and then run the following one-click startup command in a terminal:

```bash
docker run -d \
  --name octopus \
  -v /your/local/absolute/path/data:/app/data \
  -p 8080:8080 \
  chruxc/octopus
```

💡 Best practice: The -v parameter mounts the container's /app/data directory onto your local disk. This step is essential, because the SQLite database that Octopus creates by default (which holds all statistics and billing records) and the core config.json configuration file are both stored there. Persisting this directory prevents you from losing your API keys and historical statistics when the container is restarted or removed.

If you prefer orchestration tools, you can instead download the project's docker-compose.yml file and start it with Docker Compose:

```bash
wget https://raw.githubusercontent.com/chicring/octopus/refs/heads/dev/docker-compose.yml
docker compose up -d
```

2. Running the binary directly or building from source

If you don't have a container environment, or want to run Octopus quickly on a personal Windows or macOS computer, download the binary archive for your operating system from the project's GitHub Releases page. Extract it, open a terminal, and run ./octopus start; the service starts in seconds.

If you want to do further development, make sure Go 1.24+ and Node.js 18+ are installed on your system. After cloning the repository, enter the web directory and run pnpm install && pnpm run build to build the front-end static assets, move them into the back-end static directory, and finally run go run main.go start to compile and launch the whole project.

3. First login and changing the administrator credentials

Regardless of how you start the service, it listens on port 8080 by default. Open http://localhost:8080 in any browser to reach the admin panel.

- Default username: admin

- Default password: admin

⚠️ Security warning: The admin panel not only stores the paid API keys you have added, it also controls the forwarding endpoints exposed to clients. So the first thing to do after entering the console is to open the system Settings page and replace the default password with a strong one.

🛠️ II. Configuring Channel Aggregation and Intelligent Routing from Scratch

After logging in, you will see the Dashboard. Build the service bottom-up: first configure the upstream channels that supply the models, then configure the groups that are exposed to clients.

Step 1: Configure the upstream API channels (Channel Management)

A channel can loosely be understood as your “API provider”.

- In the left navigation bar, click Channel Management, then click “Add Channel” in the upper-right corner.

- Select the provider type: The system comes preconfigured with several protocols, such as OpenAI Chat, Anthropic, and Gemini. The type you choose determines how upstream requests are assembled and how responses are parsed.

- Fill in the Base URL: Octopus completes request paths automatically, so you do not need to enter the full endpoint path. For the official OpenAI API, for example, enter only https://api.openai.com/v1; when forwarding, the system appends the matching route, such as /chat/completions.

- Fill in the API key pool: You can add multiple API keys from the same provider to a single channel, one per row. When handling a large number of requests, Octopus distributes traffic across these keys automatically, which reduces the risk of rate limiting or account suspension caused by high-frequency calls on a single key.
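To illustrate the path completion described in the Base URL step, here is a minimal Python sketch. The provider keys and the function name are hypothetical; the suffixes are the public request paths of the OpenAI Chat Completions, OpenAI Responses, Anthropic Messages, and Gemini generateContent APIs, but Octopus's actual routing table may differ.

```python
# Hypothetical mapping from provider type to the request path appended to the
# base URL. Each base URL is expected to already include the version segment.
REQUEST_PATHS = {
    "openai_chat": "/chat/completions",
    "openai_responses": "/responses",
    "anthropic": "/messages",
    "gemini": "/models/{model}:generateContent",
}


def build_upstream_url(base_url: str, provider: str, model: str = "") -> str:
    """Join a base URL (domain + version) with the provider's request path."""
    path = REQUEST_PATHS[provider].format(model=model)
    # Strip a trailing slash so ".../v1/" and ".../v1" give the same result.
    return base_url.rstrip("/") + path
```

With this sketch, `build_upstream_url("https://api.openai.com/v1", "openai_chat")` returns `"https://api.openai.com/v1/chat/completions"`. It also shows why the base URL should stop at the version segment: a base URL that already ended in `/chat/completions` would get the path appended a second time.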

Step 2: Form Virtual Load Balancing Groups (Group Management)

Configured channels are not yet exposed to clients. Next, bundle these separate channels into a virtual model that clients can call.

- Go to the Group Management page and create a new group.

- Define the virtual model name: The group name is the value that external clients pass in the model field of their requests. For example, name it gpt-4o-pro.

- Bind the underlying channels: Below that, select the channels you just created that can serve gpt-4o conversations (for example, a mix of official direct connections and cheaper third-party relays).

- Choose a traffic distribution and routing strategy (the core feature):

  - Round Robin: Requests are sent to the channels one after another in a fixed order, so each channel carries an equal share of traffic.

  - Random: Channels are picked completely at random, which suits setups with many channels of similar stability.

  - Failover: Designed for high-stability production use. You can set a cheap relay channel as the high-priority primary; if that channel hits a network error, runs out of balance, or times out, Octopus intercepts the error and transparently retries the request on a lower-priority but more stable official channel. The client sees, at most, a slightly slower response instead of an error message.

  - Weighted: Based on each channel's price or remaining balance, assign each channel a weight from 1 to 100 to control precisely how traffic is split.
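The four strategies listed above (Round Robin, Random, Failover, Weighted) can be sketched as an ordering of candidate channels plus a dispatch loop that moves on when a channel fails. This is an illustrative Python sketch, not Octopus's implementation; the `Group` class, the `dispatch` function, and the channel names are hypothetical.

```python
import random


class Group:
    """Hypothetical virtual model group: one public model name mapped to
    several upstream channels plus a routing strategy."""

    def __init__(self, name, channels, strategy="round_robin", weights=None):
        self.name = name
        self.channels = list(channels)  # for "failover": highest priority first
        self.strategy = strategy
        self.weights = weights or [1] * len(self.channels)  # e.g. 1-100 each
        self._next = 0  # round-robin cursor

    def candidates(self):
        """Return the channels in the order they should be tried for one request."""
        n = len(self.channels)
        if self.strategy == "round_robin":
            start = self._next % n
            self._next += 1
            return self.channels[start:] + self.channels[:start]
        if self.strategy == "random":
            return random.sample(self.channels, n)
        if self.strategy == "weighted":
            first = random.choices(self.channels, weights=self.weights, k=1)[0]
            return [first] + [c for c in self.channels if c != first]
        if self.strategy == "failover":
            return list(self.channels)
        raise ValueError(f"unknown strategy: {self.strategy}")


def dispatch(group, send):
    """Send via the first channel that succeeds; on an error (timeout, 5xx,
    exhausted balance, ...) fall through to the next candidate."""
    last_error = None
    for channel in group.candidates():
        try:
            return send(channel)
        except Exception as exc:
            last_error = exc
    raise RuntimeError(f"all channels in group {group.name!r} failed") from last_error
```

With `Group("gpt-4o-pro", ["cheap-relay", "openai-official"], strategy="failover")` and a `send` function that raises `TimeoutError` for `"cheap-relay"`, `dispatch` returns the result from `"openai-official"`, mirroring the failover behavior described above. Falling through to the remaining channels under every strategy is a design choice of this sketch, not documented Octopus behavior.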

Step 3: Fine-grained billing and model price synchronization (Price Management)

Working out exactly how much you spend across a mix of channels is often a headache, so Octopus includes an automated billing system:

- By default, Octopus connects in the background to models.dev, a well-known open-source model pricing database on GitHub, and automatically fetches and updates the official prices of major models worldwide.

- When a channel serves exclusive or non-standard private models that have no listed price, the system records these unpriced models in the panel, and you can set custom Input and Output unit prices for them on this page.

This feature, combined with the monthly/daily statistical reports on the Dashboard, allows developers to pinpoint which application scenarios are consuming the most money.
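As a worked example of per-request billing, the sketch below assumes unit prices are quoted in USD per million tokens (a common convention) and that each logged request carries an application tag, a model name, and input/output token counts. These names and the prices in the usage note are hypothetical; they are not Octopus's data model or real vendor prices.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Price:
    """Unit prices in USD per one million tokens (assumed unit)."""
    input_per_m: float
    output_per_m: float


def request_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: input and output tokens billed at separate rates."""
    return (input_tokens * price.input_per_m
            + output_tokens * price.output_per_m) / 1_000_000


def cost_by_app(logs, prices):
    """Sum costs per application from (app, model, input_tokens, output_tokens) rows.
    `prices` may mix synced prices and manually entered ones for private models."""
    totals = defaultdict(float)
    for app, model, input_tokens, output_tokens in logs:
        totals[app] += request_cost(prices[model], input_tokens, output_tokens)
    return dict(totals)
```

For instance, at a hypothetical $2.50 input and $10.00 output per million tokens, a request with 1,200 input and 800 output tokens costs (1,200 × 2.50 + 800 × 10.00) / 1,000,000 = $0.011.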

💻 III. Zero Code Changes: Client Access Across All Platforms

The biggest advantage of the Octopus architecture is that, no matter how many models in different formats you add to the panel, it exposes them to clients through a standardized OpenAI-compatible interface. Think of it as universal middleware.
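The guide does not document how the protocol translation works internally. To make the idea concrete, here is a hedged Python sketch of one direction only: turning an OpenAI Chat Completions request body into an Anthropic Messages request body for plain-text chats. The function name and the default of 1,024 `max_tokens` are assumptions; a real gateway would also replace the group name with the upstream model name and convert responses, streaming events, tool calls, and images, none of which this sketch covers.

```python
def openai_to_anthropic(body: dict, default_max_tokens: int = 1024) -> dict:
    """Map an OpenAI Chat Completions request body to an Anthropic Messages
    request body. Text-only sketch: tools, images and responses are not handled."""
    system_parts, messages = [], []
    for msg in body["messages"]:
        if msg["role"] == "system":
            # Anthropic takes the system prompt as a top-level field, not a message.
            system_parts.append(msg["content"])
        else:
            messages.append({"role": msg["role"], "content": msg["content"]})

    out = {
        "model": body["model"],
        "messages": messages,
        # Optional in OpenAI's API but required by Anthropic's.
        "max_tokens": body.get("max_tokens") or default_max_tokens,
    }
    if system_parts:
        out["system"] = "\n\n".join(system_parts)
    if "temperature" in body:
        # OpenAI accepts 0-2, Anthropic 0-1, so clamp the value.
        out["temperature"] = min(body["temperature"], 1.0)
    if "top_p" in body:
        out["top_p"] = body["top_p"]
    if "stream" in body:
        out["stream"] = body["stream"]
    if "stop" in body:
        stop = body["stop"]
        # OpenAI allows a string or a list; Anthropic expects a list.
        out["stop_sequences"] = [stop] if isinstance(stop, str) else list(stop)
    return out
```

Moving system messages into the top-level `system` field and always supplying `max_tokens` are the two changes almost every OpenAI-to-Anthropic request needs; the remaining fields map one to one or with small adjustments.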

Scenario 1: Calling Octopus from code with the official OpenAI SDK

You don't need to rewrite any existing project code; just override the base_url parameter:

```python
from openai import OpenAI

# Initialize the client and point it at the Octopus instance you just deployed (local or remote)
client = OpenAI(
    base_url="http://127.0.0.1:8080/v1",
    api_key="sk-octopus-your-system-auth-token",
)

# Important: the model parameter must be the virtual group name created under Octopus "Group Management"!
completion = client.chat.completions.create(
    model="gpt-4o-pro",
    messages=[{"role": "user", "content": "Please write a quicksort algorithm in Python."}],
)

print(completion.choices[0].message.content)
```
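To see what the SDK actually sends, or to call Octopus from a tool without the SDK, the same request can be built with Python's standard library. This sketch assumes Octopus serves the standard OpenAI path `/v1/chat/completions` and accepts the token as a Bearer header, which is how the OpenAI SDK transmits `api_key`; the function names are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url, token, model, prompt):
    """Build (without sending) the HTTP request the OpenAI SDK would issue."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat(request, timeout=60):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]
```

With Octopus running, `send_chat(build_chat_request("http://127.0.0.1:8080/v1", "sk-octopus-your-token", "gpt-4o-pro", "Hello"))` returns the reply text; keeping the build and send steps separate makes it easy to inspect the exact URL and headers when debugging.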

Scenario 2: Powering command-line AI tools (Claude Code as an example)

Some official tools strictly require requests in their native format. Suppose you want Anthropic's official Claude Code command-line assistant to use an inexpensive relay node: edit the local ~/.claude/settings.json file so that Octopus stands in for the official API:

```json
{
  "env": {
    "ANTHROPIC_BASE_URL": "http://127.0.0.1:8080",
    "ANTHROPIC_AUTH_TOKEN": "sk-octopus-your-auth-token",
    "ANTHROPIC_MODEL": "<Sonnet group name configured in Octopus>",
    "ANTHROPIC_DEFAULT_SONNET_MODEL": "<Sonnet group name configured in Octopus>"
  }
}
```

Once the configuration takes effect, Claude Code's calls are redirected to Octopus, which handles any needed protocol translation and forwards Anthropic's native requests intact to the back-end relay.

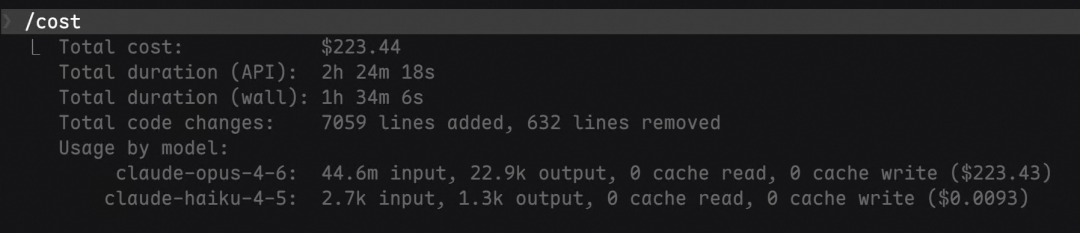
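Editing ~/.claude/settings.json by hand risks overwriting settings you already have. A small helper can merge only the `env` entries from the Claude Code example above into the existing file. This is a hedged sketch: the function name and the example group name are hypothetical, and it assumes the file is plain JSON with an optional top-level `env` object, as in that example.

```python
import json
from pathlib import Path


def point_claude_code_at_octopus(settings_path, base_url, token, group):
    """Merge Octopus connection variables into Claude Code's settings.json,
    keeping every other key already present in the file."""
    path = Path(settings_path).expanduser()
    settings = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    env = settings.setdefault("env", {})
    env.update({
        "ANTHROPIC_BASE_URL": base_url,
        "ANTHROPIC_AUTH_TOKEN": token,
        "ANTHROPIC_MODEL": group,
        "ANTHROPIC_DEFAULT_SONNET_MODEL": group,
    })
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(settings, indent=2, ensure_ascii=False) + "\n",
                    encoding="utf-8")
    return settings
```

Call it as `point_claude_code_at_octopus("~/.claude/settings.json", "http://127.0.0.1:8080", "sk-octopus-your-token", "claude-sonnet-group")`, where the last argument is the Sonnet group name you created in Octopus.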

Data-loss prevention tip: To sustain heavy concurrent traffic with maximum read/write performance, Octopus keeps per-request throughput and token consumption statistics in memory and writes them to the SQLite/MySQL database in batches every few minutes, as set in the configuration file. So whether you are updating Octopus or stopping it for maintenance, do not use kill -9. Send the SIGTERM signal or press Ctrl+C to shut down gracefully, and the program will safely write the last in-memory batch to the database.
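Octopus itself is written in Go, and its buffering code is not shown in this guide. The Python sketch below only illustrates the write-behind pattern described in the tip above and why a graceful stop preserves data: counters accumulate in memory, a background thread flushes them in batches, and a SIGTERM/SIGINT handler performs one final flush. The class, function, and table names are hypothetical.

```python
import signal
import sqlite3
import sys
import threading
import time


class StatsBuffer:
    """Hypothetical write-behind buffer: usage counters live in memory and are
    written to SQLite in one batch, periodically and at shutdown."""

    def __init__(self, db_path=":memory:"):
        self.db = sqlite3.connect(db_path, check_same_thread=False)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS usage (model TEXT, requests INTEGER, tokens INTEGER)")
        self.pending = {}  # model -> [request_count, token_count], not yet on disk
        # Re-entrant so a signal handler running on the main thread can flush
        # even if it interrupted code that already holds the lock.
        self.lock = threading.RLock()

    def record(self, model, tokens):
        """Count one request in memory; no database I/O on the hot path."""
        with self.lock:
            entry = self.pending.setdefault(model, [0, 0])
            entry[0] += 1
            entry[1] += tokens

    def flush(self):
        """Write all buffered counters in a single transaction, then reset them."""
        with self.lock:
            rows = [(m, r, t) for m, (r, t) in self.pending.items()]
            if rows:
                with self.db:  # commits on success, rolls back on error
                    self.db.executemany("INSERT INTO usage VALUES (?, ?, ?)", rows)
            self.pending.clear()

    def start_periodic_flush(self, interval_s):
        """Flush every `interval_s` seconds from a background daemon thread."""
        def loop():
            while True:
                time.sleep(interval_s)
                self.flush()
        threading.Thread(target=loop, daemon=True).start()


def install_shutdown_handler(buffer):
    """Flush on SIGTERM (e.g. `docker stop`) and SIGINT (Ctrl+C).
    SIGKILL (`kill -9`) cannot be caught, so anything still buffered is lost."""
    def handler(signum, frame):
        buffer.flush()
        sys.exit(0)
    signal.signal(signal.SIGTERM, handler)
    signal.signal(signal.SIGINT, handler)
```

In a service you would call `start_periodic_flush(300)` and `install_shutdown_handler(buffer)` once at startup, the latter from the main thread, since Python only allows installing signal handlers there.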

Application Scenarios

- Pooling multiple API key quotas to avoid rate limits

If you have several low-quota third-party API keys with limited free credits or strict concurrency limits, connecting a front-end application directly to them easily triggers rate limits during bursts of requests. By adding these keys to a single channel pool in Octopus, the system distributes requests across them in a balanced rotation, hiding the scheduling behind a single endpoint and removing the per-key rate bottleneck.

- Unified access to models from different vendors

Individual developers who frequently compare models such as ChatGPT, Claude, and Gemini side by side often end up with bloated client code, because each vendor uses its own protocol format. Octopus's built-in protocol conversion gateway maps all models onto one standard OpenAI interface format, so they work with any IDE plugin, Chatbox client, or third-party productivity tool such as Immersive Translate.

- Primary/backup disaster recovery with automatic failover

When budgets are tight but the workload is business-critical, developers often buy very cheap but unreliable third-party relay channels. With Octopus, you can set the cheap channel as the primary node and the more expensive official channel as the backup. Day-to-day traffic goes through the cheap channel to keep costs down; if the primary suffers network congestion or goes down, the gateway fails over to the official node within milliseconds, and client requests continue without errors.

- Fine-grained billing and cost tracking for internal projects

When you share LLM access across several private side projects, blogs, plugins, or friends, it is hard to work out who should bear which costs. Octopus synchronizes standard market prices automatically and supports custom prices for your own models, and its built-in dashboard records the exact tokens consumed and the corresponding cost of each request, giving you a clear, transparent view of your spending.
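The key pooling scenario above can be sketched as a round-robin pool that benches a key for a cooldown period after the upstream returns HTTP 429 (Too Many Requests). This is an illustrative Python sketch, not Octopus's implementation; the class name, the 60-second default cooldown, and the injectable clock are assumptions.

```python
import time


class KeyPool:
    """Hypothetical rotating pool of API keys for one channel: keys are used in
    turn, and a key that hits a rate limit (HTTP 429) sits out a cooldown."""

    def __init__(self, keys, cooldown_s=60.0, clock=time.monotonic):
        self.keys = list(keys)
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so tests can control time
        self.blocked_until = {k: 0.0 for k in self.keys}
        self._next = 0  # round-robin cursor

    def acquire(self):
        """Return the next key that is not cooling down, in round-robin order."""
        now = self.clock()
        for _ in range(len(self.keys)):
            key = self.keys[self._next % len(self.keys)]
            self._next += 1
            if self.blocked_until[key] <= now:
                return key
        raise RuntimeError("all keys are rate-limited; retry later")

    def report_rate_limited(self, key):
        """Call when the upstream answers 429 for this key."""
        self.blocked_until[key] = self.clock() + self.cooldown_s
```

Round robin spreads load evenly, while the cooldown keeps a throttled key from receiving requests it would only reject. When every key is cooling down, failing fast lets a group-level failover try another channel instead.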

Q&A

- Where does Octopus store its configuration and billing statistics by default? Can I use another database?

To stay lightweight and maintenance-free, Octopus defaults to a single-file SQLite database: the database file (data/data.db) and the automatically generated config.json are both stored in the mounted local data directory. If you need higher throughput or want to keep data on a separate server, you can edit the configuration file to connect to a higher-performance MySQL or PostgreSQL database instead.

- I started with a weak password. How do I change the administrator password in the admin panel?

After the initial deployment, log in to the admin panel with the default account admin and password admin. Then click the personal center button in the upper-right corner or the Settings button in the lower-left corner, and follow the prompts to set a new strong password immediately. Otherwise, automated scanners on the public internet may find the panel and steal the API keys stored in it.

- My third-party AI plugin only accepts an OpenAI-format endpoint, but I want it to call Anthropic's Claude models. Will this work?

Yes. Thanks to Octopus's built-in cross-protocol translator, you only need to enter the Claude service's address and API key correctly under Channel Management, then point the plugin at Octopus's LAN or public address. The system receives the client's standard OpenAI /chat/completions request, translates it into the Anthropic protocol for the upstream conversation, and the whole process is transparent to the caller.

- If the server loses power or I force-kill the process, will I lose billing records?

Some recent data may be lost. Because writing statistics for every request immediately would require very heavy I/O, Octopus keeps statistics in memory first: request counts and token billing records from the last few minutes sit in an in-process cache and are periodically written to the database in batches. If the process is destroyed forcibly, for example with kill -9, data from the last few minutes that has not yet been persisted is lost. Always stop the program with a standard stop command (for example, press Ctrl+C) and wait for the graceful shutdown to complete.

- When filling in a channel's Base URL, do I need to add a request suffix such as /chat/completions, as in Postman debugging?

No. The request builder handles this automatically. Enter only the root path with the domain name and version (for example, https://api.openai.com/v1 or https://api.anthropic.com/v1). Octopus appends the model-specific endpoint suffix when it sends the proxied request, so do not add it yourself.