Latiai is an image, video and speech generation platform that integrates multiple mainstream AI models. It aggregates leading underlying models such as OpenAI's Sora and GPT Image, Google DeepMind's Veo, Kuaishou's Kling, ByteDance's Seedance and Seedream, Alibaba's Wan, as well as Flux and Nano Banana, so they can all be used in one place without registering on separate platforms. Its core functions include text-to-image (with 4K output), text-to-video, image-to-video (clips of up to 15 seconds with simulated physics), multi-character text-to-speech (75 languages with emotion control), and AI digital human lip-sync video generation. Through a unified interface and the ability to switch between models, Latiai aims to help creators, marketers, designers and developers turn text concepts directly into high-quality visual and audio material, and all generated content is licensed for commercial use.

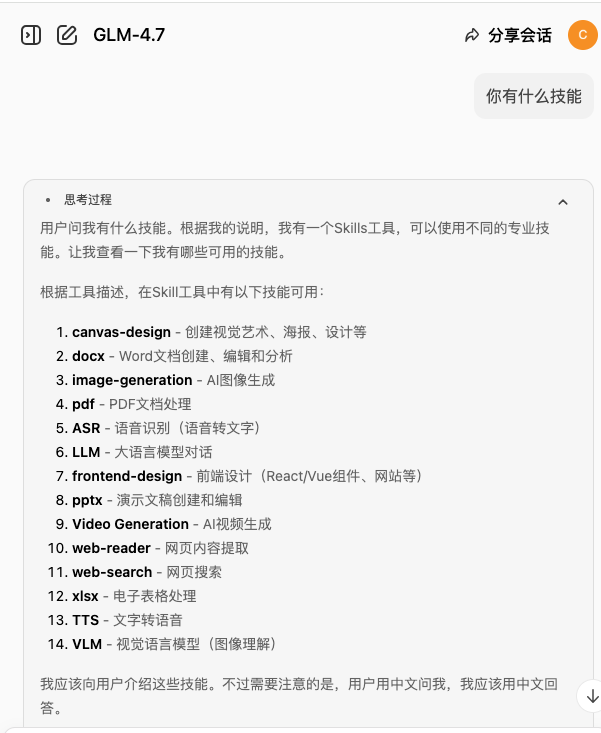

Feature List

- Multi-model image-to-video: Turns static images into dynamic video clips, with camera control, physics simulation and character facial animation.

- Multi-model text-to-video: Aggregates Sora, Veo, Kling, Wan, Seedance and other models to generate 5 to 15 second videos at 1080p or 2K resolution directly from text descriptions, with native audio synchronization on supported models.

- Multi-model text-to-image: Integrates GPT Image, Seedream, Flux, Nano Banana and other image models to generate watermark-free images at up to 4K resolution. This covers workflows that need accurate text rendering, photorealism or fast batch production.

- Multi-character emotional speech synthesis (TTS): Offers 113 built-in AI voices and supports 75 languages. Different characters within a single audio clip can each be given their own voice. Emotion tags (e.g., excited, whispering, laughing) give precise control over tone and emotional delivery.

- AI digital human video generation: Works together with speech synthesis. Upload a static character image and provide text or audio, and the platform automatically generates a talking-head video with facial movement and accurate lip-sync.

- Commercial license: All images, videos and voice material generated on the platform come with full commercial-use authorization. This meets the publishing needs of businesses and independent content creators.

Usage Guide

Latiai is a fully integrated AI audiovisual content platform that runs in the cloud through a web interface. There is nothing to install locally and no hardware or graphics card to configure. Users simply visit the official website in a modern browser on a computer or phone to access all of the major AI models. To help new users get started quickly and make full use of the different underlying models, the core features and workflow are described in detail below:

I. Platform preparation and basics

- Access & registration: Visit the Latiai website in a browser and click the Login/Register button in the upper-right corner of the page. After you create an account with an email address and log in, you are taken to the main workbench (Dashboard).

- Interface navigation: The platform is divided into four core modules, all listed in the left navigation bar: Text to Image, Text/Image to Video Generator, Text to Speech and AI Avatar.

II. Text to Image: detailed workflow

This module aggregates several top-tier still-image models for producing posters, illustrations, photographic images and more.

- Building the prompt: In the text box in the center of the page, enter a prompt describing the desired image. A useful structure is “subject + environment/background + lighting + camera angle + art style”. The more specific the description, the more accurate the result.

- Choosing the underlying model: This is a critical step, so pick the model that fits your needs:

- Accurate text or logo rendering: choose GPT Image 1.5 or GPT Image 2. Both are good at producing clear, correctly spelled lettering, poster typography and logos.

- Best photographic texture and color: choose Seedream 4.5 or Seedream 5 Lite. These suit portrait photography, landscapes and highly expressive artwork.

- Fast generation and batch trial-and-error: choose Flux 2 Pro. It generates very quickly and suits rapid iteration.

- High consistency and native 4K sharpness: choose Nano Banana 2.

- Parameter configuration and generation: In the settings panel on the right, choose the aspect ratio (e.g., 16:9 for widescreen, 9:16 for phones, 1:1 for avatars). Check the settings, then click “Generate”.

- Getting results: After a few seconds, the generated watermark-free image (up to 4K) appears in the history. Click the “Download” button to save it locally.
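The prompt structure and model-selection advice above can be kept in a small local helper before you paste a prompt into the web interface. The sketch below assumes Python 3.9+. The class, the function and the `need` keys are hypothetical and are not part of any Latiai API. Only the five-part prompt order, the model names and the aspect ratios come from this guide.

```python
from dataclasses import dataclass

# Model suggestions from the guide above; the dictionary keys are hypothetical labels.
IMAGE_MODEL_BY_NEED = {
    "text_or_logo": ["GPT Image 1.5", "GPT Image 2"],
    "photographic": ["Seedream 4.5", "Seedream 5 Lite"],
    "fast_batch": ["Flux 2 Pro"],
    "consistency_4k": ["Nano Banana 2"],
}

# Aspect ratios mentioned in the guide (the keys are hypothetical labels).
ASPECT_RATIOS = {"screen": "16:9", "phone": "9:16", "avatar": "1:1"}


@dataclass
class ImagePrompt:
    subject: str
    environment: str
    lighting: str
    camera_view: str
    style: str

    def render(self) -> str:
        """Join the five parts in the recommended order, skipping empty ones."""
        parts = [self.subject, self.environment, self.lighting, self.camera_view, self.style]
        return ", ".join(p.strip() for p in parts if p.strip())


def suggest_image_models(need: str) -> list[str]:
    """Return the models the guide recommends for a given need."""
    if need not in IMAGE_MODEL_BY_NEED:
        raise ValueError(f"unknown need {need!r}; expected one of {sorted(IMAGE_MODEL_BY_NEED)}")
    return IMAGE_MODEL_BY_NEED[need]


if __name__ == "__main__":
    prompt = ImagePrompt(
        subject="a ceramic coffee mug printed with the word 'LATTE'",
        environment="on a wooden café table",
        lighting="soft morning window light",
        camera_view="45-degree close-up",
        style="product photography",
    )
    print(prompt.render())
    print(suggest_image_models("text_or_logo"), ASPECT_RATIOS["screen"])
```

Keeping the five parts as separate fields makes it easy to vary one element, such as the lighting, across a batch while holding the others fixed.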

III. Text/Image to Video (Video Generator): detailed workflow

This module generates dynamic video clips and integrates several of the most capable current video models.

- Select the input source type:

- Text to Video: Generate a video purely from a text description of the scene, character actions and camera movement.

- Image to Video: Upload a clear local reference image. Then describe in the input box below what the elements in the image should do (e.g., “The water in the image starts to rush and the camera moves forward”).

- Select Video Generation Model:

- Veo 3.1: Ideal when you need cinematic image quality together with native, synchronized audio.

- Sora 2: Ideal for videos involving complex physics, long camera moves, or narratives up to 15 seconds long.

- Kling 2.6: Suited to tasks with prominent faces, changing facial expressions, or character lip-sync.

- Wan 2.6 / Seedance 2: Suited to general motion footage that needs highly stable motion trajectories.

- Setting output parameters: Choose a quality strategy (Fast Mode for quicker results, or Quality Mode for finer rendering). Then set the video length (5, 10 or 15 seconds) and the export resolution (1080p or 2K).

- Generate & download: Click the Generate button to submit the task. Video rendering is compute-intensive and usually takes a few minutes. When the task finishes, preview the video in the web player and click the Download button to get a high-quality MP4 file.
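Because each video job takes minutes to render, it can be worth checking its settings locally before you submit. This sketch only enforces the options listed above: input type, model, Fast or Quality mode, 5/10/15-second lengths, and 1080p or 2K. The `VideoJob` class and its field names are hypothetical. The guide does not say which combinations each model supports (for example, which models reach 15 seconds), so per-model limits are deliberately not checked.

```python
from dataclasses import dataclass
from typing import Optional

# Option sets taken from the guide; the class and field names are hypothetical.
MODELS = {"Veo 3.1", "Sora 2", "Kling 2.6", "Wan 2.6", "Seedance 2"}
DURATIONS_S = {5, 10, 15}
RESOLUTIONS = {"1080p", "2K"}
MODES = {"fast", "quality"}


@dataclass
class VideoJob:
    model: str
    prompt: str
    duration_s: int = 5
    resolution: str = "1080p"
    mode: str = "fast"
    reference_image: Optional[str] = None  # a file path for image-to-video, None for text-to-video

    @property
    def input_type(self) -> str:
        return "image-to-video" if self.reference_image else "text-to-video"

    def problems(self) -> list[str]:
        """Return human-readable issues; an empty list means the settings are among the listed options."""
        issues = []
        if self.model not in MODELS:
            issues.append(f"unknown model {self.model!r}")
        if not self.prompt.strip():
            issues.append("prompt is empty")
        if self.duration_s not in DURATIONS_S:
            issues.append(f"duration must be one of {sorted(DURATIONS_S)} seconds")
        if self.resolution not in RESOLUTIONS:
            issues.append(f"resolution must be one of {sorted(RESOLUTIONS)}")
        if self.mode not in MODES:
            issues.append(f"mode must be one of {sorted(MODES)}")
        return issues


if __name__ == "__main__":
    job = VideoJob(
        model="Sora 2",
        prompt="The water in the image starts to rush and the camera moves forward",
        duration_s=15,
        reference_image="river.jpg",
    )
    print(job.input_type, job.problems())  # image-to-video []
```

Returning a list of problems, rather than raising on the first one, lets you fix every invalid setting in a single pass.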

IV. Text to Speech: detailed workflow

This feature is commonly used to dub generated videos or to create podcasts and audiobooks.

- Enter the script: Type the text to be converted into speech in the text editor.

- Selecting and assigning voices: The system has 113 built-in voices, covering categories such as podcast hosts, story narrators and game characters. For dialogue, select individual paragraphs and assign a different character voice to each. By default, the system automatically recognizes 75 languages.

- Adding emotion tags: To avoid flat, mechanical delivery, insert audio tags to control the mood. For example, start a line with [excited], [whispering] or [laughing], and the AI will reproduce the corresponding tone when it speaks that line.

- Preview & export: Click the Preview button to listen to the audio. Then export it in a high-quality audio format (e.g., MP3 or WAV) for post-production editing.
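For multi-character dialogue, it can help to prepare the script offline. Each line carries a speaker and an optional emotion tag, written in the [tag] form described above, and every speaker is mapped to one voice. This is a minimal sketch under stated assumptions. The `Line` structure, `assign_voices` and the voice names are hypothetical. Only [excited], [whispering] and [laughing] are named in this guide, so the tag set is deliberately small. Voices are still assigned through the editor's own interface, not through this code.

```python
from dataclasses import dataclass
from typing import Optional

# Tags named in this guide; the platform is described as offering dozens more.
KNOWN_TAGS = {"excited", "whispering", "laughing"}


@dataclass
class Line:
    speaker: str
    text: str
    emotion: Optional[str] = None


def tag_line(line: Line) -> str:
    """Prefix the text with an [emotion] tag, e.g. '[whispering] Over here.'"""
    if line.emotion is None:
        return line.text
    if line.emotion not in KNOWN_TAGS:
        raise ValueError(f"unrecognised emotion tag: {line.emotion!r}")
    return f"[{line.emotion}] {line.text}"


def assign_voices(script: list[Line], voices: dict[str, str]) -> list[tuple[str, str]]:
    """Pair each line, in script order, with the (hypothetical) voice chosen for its speaker."""
    paired = []
    for line in script:
        if line.speaker not in voices:
            raise KeyError(f"no voice assigned to speaker {line.speaker!r}")
        paired.append((voices[line.speaker], tag_line(line)))
    return paired


if __name__ == "__main__":
    script = [
        Line("Narrator", "The door creaked open."),
        Line("Mia", "Did you hear that?", "whispering"),
        Line("Leo", "It's just the wind!", "laughing"),
    ]
    voices = {"Narrator": "narrator_voice", "Mia": "voice_a", "Leo": "voice_b"}
    for voice, text in assign_voices(script, voices):
        print(f"{voice}: {text}")
```

The sketch keeps lines in their original order rather than grouping them by speaker, so the dialogue's turn-taking survives when the lines are pasted back into the editor.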

V. Producing video presentations with AI digital humans

To produce spoken content presented by a virtual host:

- In the “AI Avatar” module, upload a front-facing photo of the person.

- Import the voice audio file you just generated, or enter the script text directly.

- The platform uses a lip-sync algorithm to automatically animate the facial muscles and mouth of the person in the photo. The result is a digital human video closely matched to the audio, and the MP4 file can be downloaded and published directly as a finished product.

Application Scenarios

- Social media short videos and independent content creation

Short-video creators can use the image-to-video feature to turn static pictures into dynamic footage and pair it with emotional voice synthesis. A single person can then quickly mass-produce daily videos with voiceovers and motion, which greatly shortens the shooting and recording process.

- Commercial advertising and marketing materials

Marketing teams can use image models with accurate text rendering, such as GPT Image, to generate high-definition posters with correct promotional copy and brand logos directly from text prompts. The digital human feature can also produce low-cost product explainer and promotional videos.

- Mass production of audiobooks and podcasts

Audiobook creators and podcast producers can use multi-character speech synthesis to assign specific voices to the different characters in a novel or script. Emotion tags (e.g., whispering, excitement, crying) control each character's tone, so one person can produce a multi-character audio drama.

- Game development and film/TV concept previews

Game designers and film directors can use text prompts with multiple generation models to turn abstract story outlines into concrete scene designs, character concept art, or short animated storyboard previews, which greatly improves communication within the team.

Q&A

- Can the images and videos generated on the website be used commercially?

Yes. The 4K images and HD videos generated through the models on Latiai are fully licensed for commercial use. They can legally be used for product packaging, monetized social media content, commercial advertising and other commercial projects.

- Which AI models does the platform aggregate?

Latiai integrates a number of current mainstream models. For video generation these include Sora, Veo, Kling, Wan and Seedance; for image generation, GPT Image, Seedream, Flux and Nano Banana. Users can switch freely between models within a single interface.

- How can I control the emotion and tone of the generated AI voice?

The text-to-speech (TTS) function provides dozens of audio mood tags, such as [excited], [whispering] and [laughing]. Adding one of these tags next to a line of text controls the tone and mood of that sentence.

- What is the maximum length of a single AI-generated video?

Depending on the video model selected, the platform generates clips of 5 to 15 seconds in a single pass. Output resolution goes up to 1080p or 2K, and some models can include native audio.