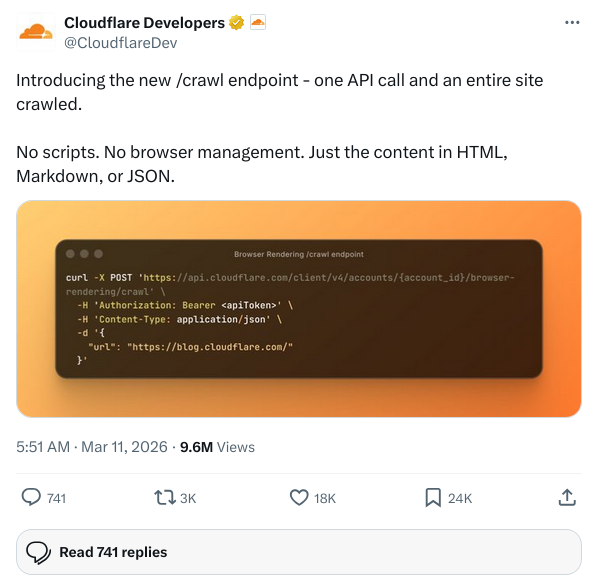

OpenAI has added a new command to Codex CLI: /goal.

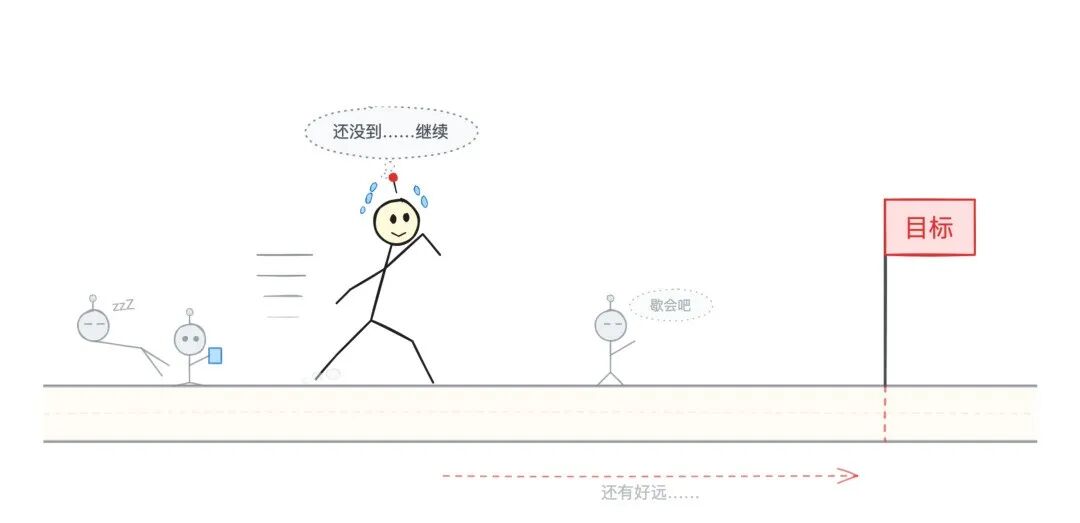

You set a goal for it and it keeps running, doesn't lose context across multiple rounds, and doesn't stop until it gets there.

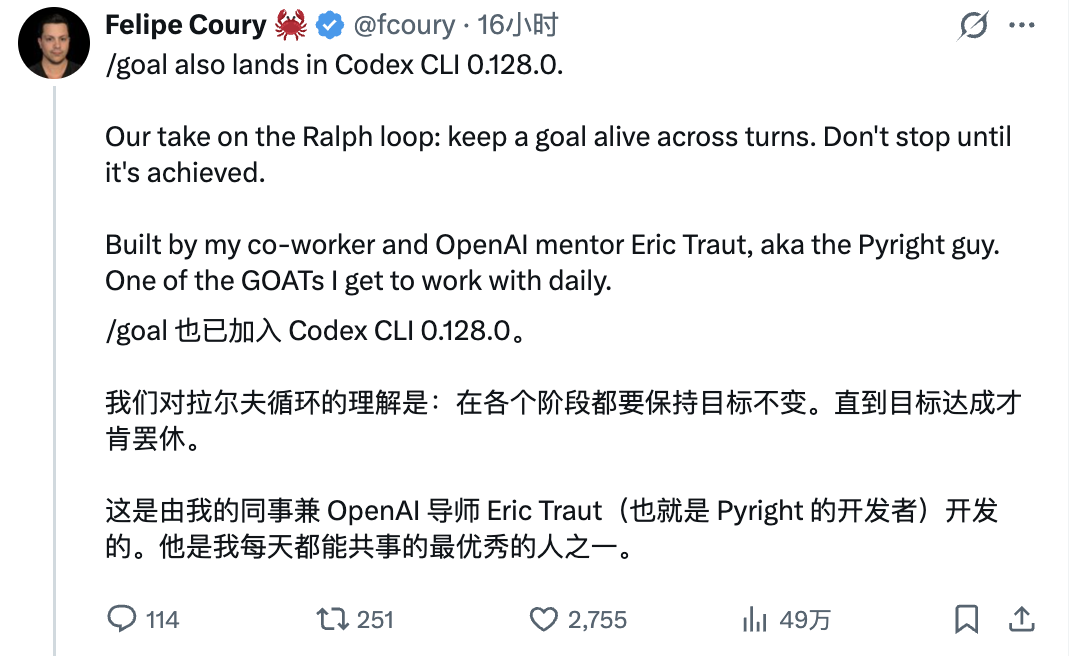

This feature shipped with Codex CLI version 0.128.0. It is still experimental and has to be turned on manually. This is how Felipe Coury from the Codex team describes it:

“Keep the goal active for multiple rounds. If you don't reach it, you don't stop.”

not give up until one reaches one's goal


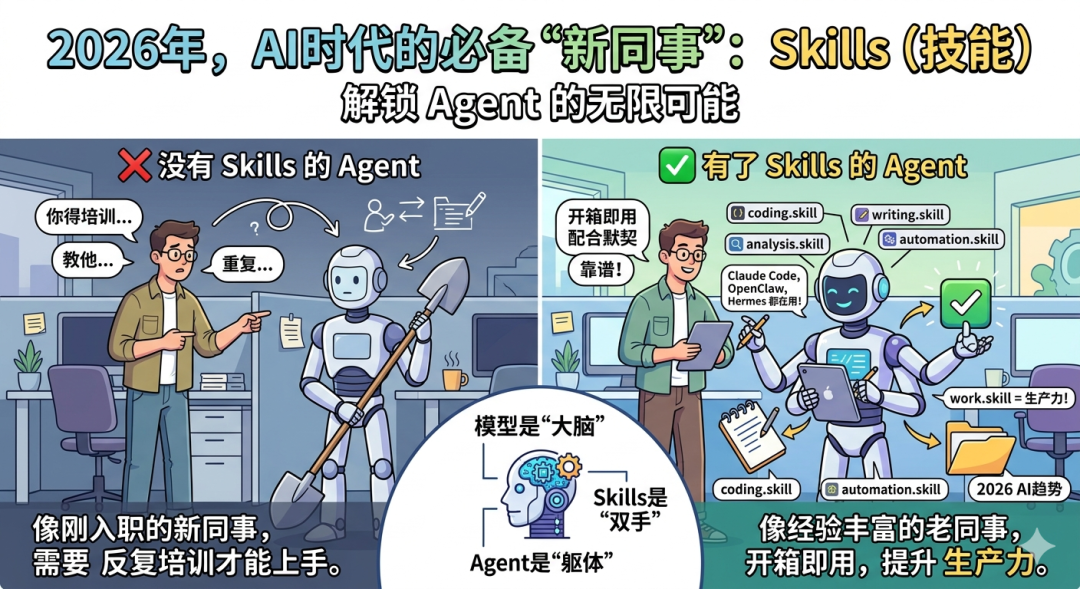

In my opinion, there is a trend hiding behind this feature: in the age of AI, the process is becoming less important; what matters is the goal.

01

Ralph Loop

To understand /goal, I have to talk about Ralph Loop first.

The name comes from Ralph Wiggum, the "clueless, persistent, optimistic" boy from The Simpsons. Developer Geoffrey Huntley named an Agent Loop after him: set a goal for the Agent, let it iterate on itself, and when it fails, start over until the goal is reached.

VentureBeat even wrote an article called "How Ralph Wiggum went from the Simpsons to the hottest name in AI".

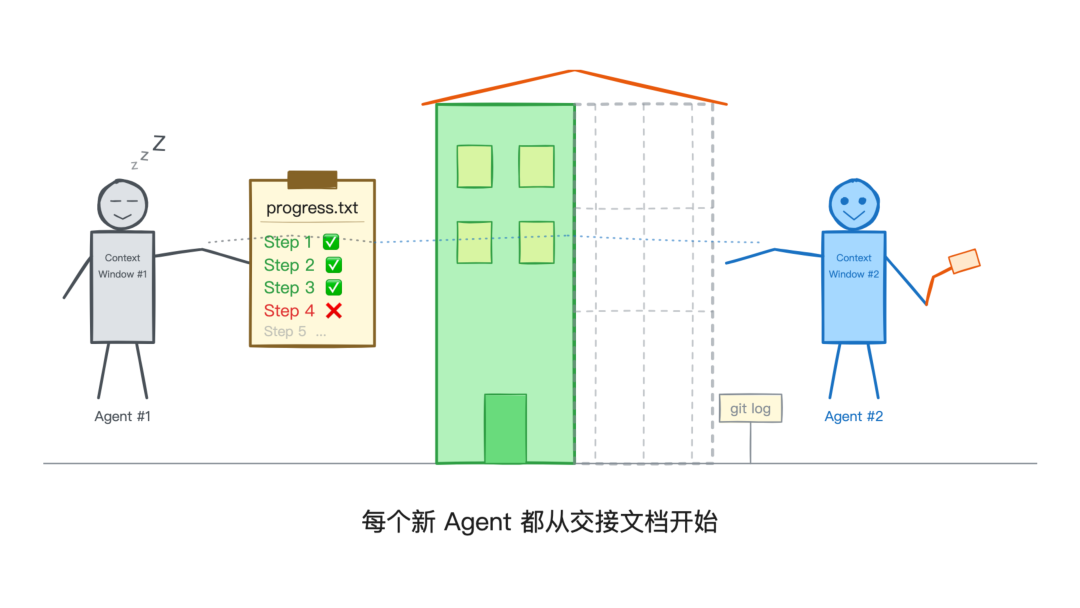

In the community's original implementation, Ralph Loop takes a more brute-force approach: at the end of each round, the Agent starts a new context window from scratch and relies on git logs and progress files to keep its memory alive.

It is equivalent to handing in a written handover every time you change shifts.

Codex's /goal, on the other hand, takes a different route.

It is a continuous loop within a single process: the goal stays active across rounds in the same session, without starting a new context from scratch.

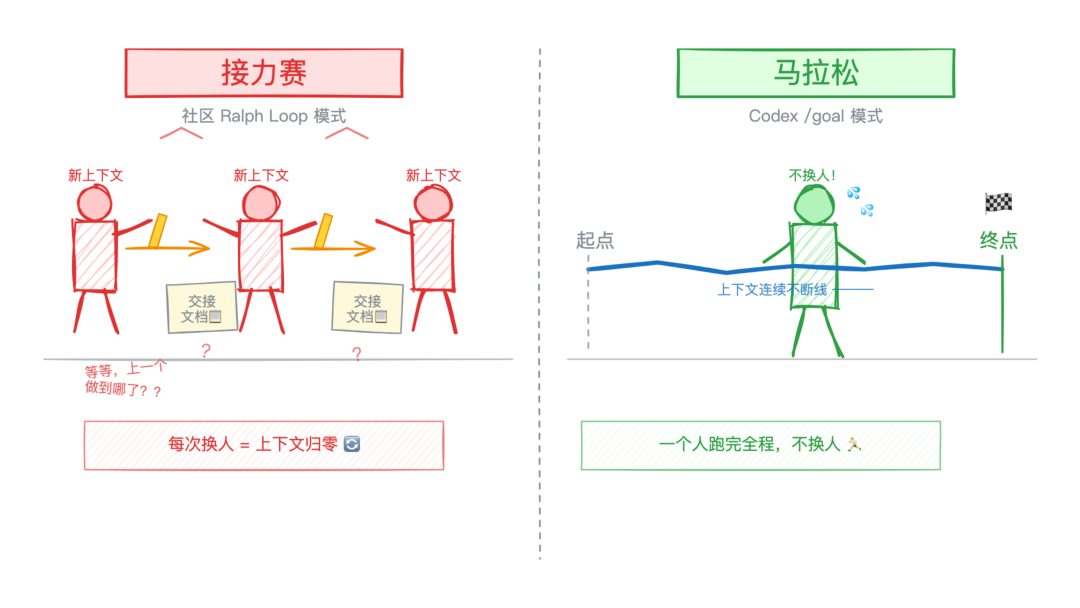

In other words, the community Ralph Loop is like a relay race, with a new person at each baton.

Relay vs Marathon

Codex's /goal is more like a marathon runner who runs from start to finish: the runner can pause when tired, but is never swapped out.

02

How to use

Usage is simple.

Make sure your Codex CLI version is 0.128.0 or later, then add this section to the configuration file ~/.codex/config.toml:

```toml
[features]
goals = true
```

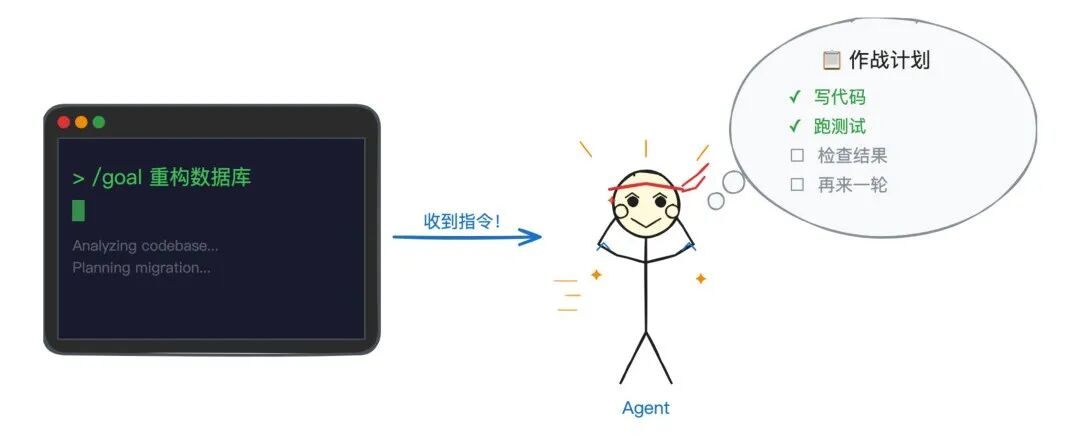

Once the feature is enabled, enter a goal in the Codex CLI:

```
/goal Refactor all database queries and add connection pooling
```

Codex will start working toward this goal: writing code, running tests, checking results. If the goal is not reached after one round, it automatically starts the next round.

Roger that.


In addition, there are several auxiliary commands:

• /goal pause: Pause the current goal

• /goal resume: Resume a paused goal

• /goal clear: Clear the goal

If you press Ctrl+C to interrupt, the goal is paused automatically and picks up again the next time you resume the thread.

A useful tip: if your goal description is long, writing it directly in the command is error-prone. Put the detailed instructions in a .md file instead, and then run /goal follow instructions.md.

I have recommended this technique many times before. Putting the instructions in a file has the added benefit that they are not lost when the context is compressed.

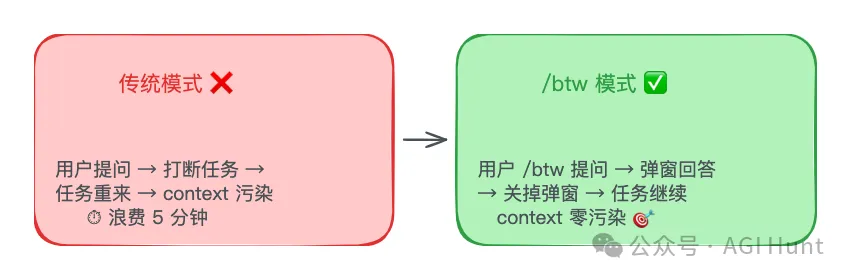

This version also ships a /side command, which temporarily opens a side session so you can ask questions without interrupting the main goal. When you're done, press Esc to return to the main thread, and the side session is discarded. The two commands work well together.

Presumably the name was chosen to avoid looking like a copy of Claude Code; otherwise it could simply have been called /btw (see: Claude Code adds /btw feature).

03

It won't stop, but it's not stupid.

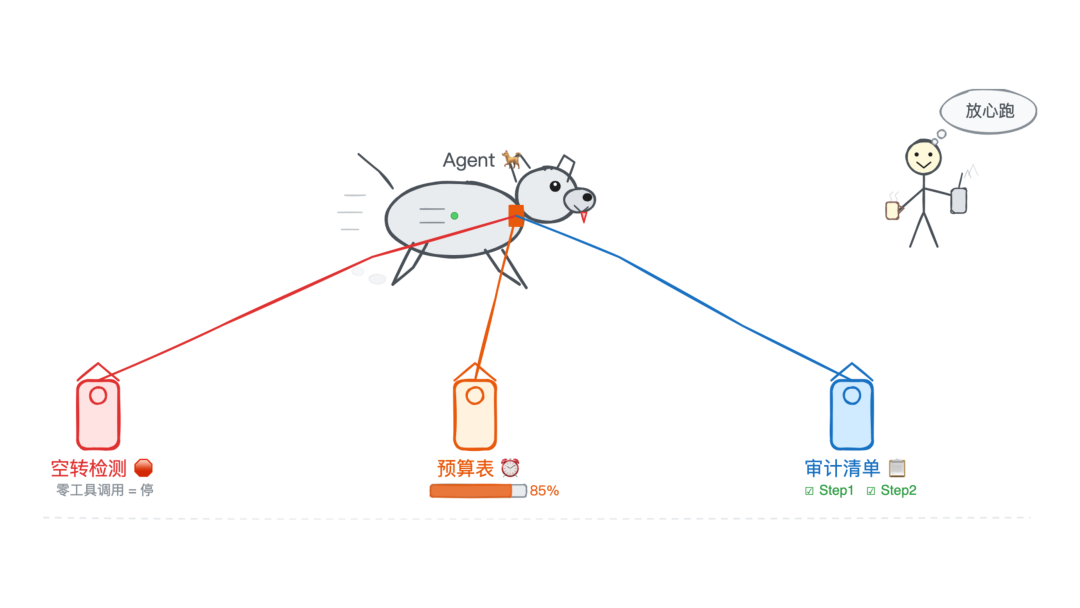

The biggest concern with an Agent that loops automatically is: will it spin idly, burning resources on meaningless work?

The Codex implementation includes a set of protection mechanisms.

Zero-tool-call suppression. If the Agent calls no tools (writes no code, runs no commands, reads no files) during a continuation round, the system decides it is "stuck", automatically stops the loop, and does not re-trigger it until there is new input.
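The suppression rule can be sketched as a small guard. This is a minimal illustration, not the Codex implementation: `ContinuationGuard` and its methods are hypothetical names, and the only behavior assumed is what the paragraph above describes (a round with zero tool calls stops the loop until new input arrives).

```rust
/// Hypothetical guard that tracks whether automatic continuation is allowed.
/// The name and methods are illustrative assumptions, not Codex APIs.
#[derive(Debug, Default)]
pub struct ContinuationGuard {
    suppressed: bool,
}

impl ContinuationGuard {
    /// Record how many tools the Agent called in the round that just ended.
    /// A round with zero tool calls is treated as "stuck".
    pub fn on_round_finished(&mut self, tool_calls: u32) {
        if tool_calls == 0 {
            self.suppressed = true;
        }
    }

    /// New input (for example, a user message) lifts the suppression.
    pub fn on_new_input(&mut self) {
        self.suppressed = false;
    }

    /// Whether the loop may start another round on its own.
    pub fn may_continue(&self) -> bool {
        !self.suppressed
    }
}
```

In this sketch the flag is sticky: once a round ends with no tool calls, only `on_new_input` re-enables continuation.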

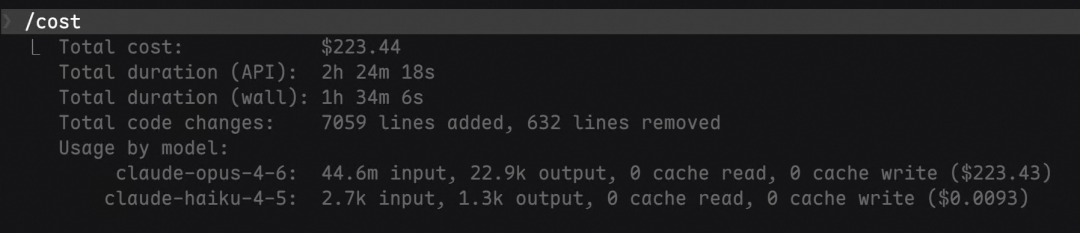

Budget control. Each goal can be given a token budget and a time cap. When consumption exceeds the budget, the system injects a prompt telling the model: don't start new tasks; summarize the progress and give the user a clear next step.
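A minimal sketch of the budget check, under stated assumptions: the type names, field names, and the wording of `WRAP_UP_PROMPT` are all hypothetical (the prompt is a paraphrase of the article's description, not the text Codex injects). It only shows the decision between continuing and wrapping up.

```rust
/// Hypothetical per-goal limits; `None` means "no cap". Names are assumptions.
#[derive(Debug, Clone, Copy)]
pub struct GoalBudget {
    pub max_tokens: Option<u64>,
    pub max_seconds: Option<u64>,
}

/// Hypothetical running totals for one goal.
#[derive(Debug, Clone, Copy)]
pub struct GoalUsage {
    pub tokens: u64,
    pub seconds: u64,
}

/// What the loop should do before the next round.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum NextStep {
    Continue,
    WrapUp(&'static str),
}

/// Paraphrase of the behavior described in the article, not the real prompt.
pub const WRAP_UP_PROMPT: &str =
    "Budget exceeded: do not start new tasks. Summarize progress and give the user a clear next step.";

/// Wrap up as soon as either cap is exceeded; otherwise keep going.
pub fn next_step(budget: GoalBudget, usage: GoalUsage) -> NextStep {
    let over_tokens = budget.max_tokens.map_or(false, |cap| usage.tokens > cap);
    let over_time = budget.max_seconds.map_or(false, |cap| usage.seconds > cap);
    if over_tokens || over_time {
        NextStep::WrapUp(WRAP_UP_PROMPT)
    } else {
        NextStep::Continue
    }
}
```

The point of the design is that exceeding the budget does not kill the run abruptly; the model gets one chance to hand over cleanly.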

Robot dog on three leashes

Completion audit protocol. At the beginning of each run, the system injects a hidden developer message into the model, asking it to perform a "completion audit":

1. Break the goal down into specific deliverables

2. Build a checklist that maps each requirement to actual evidence

3. Examine real files, outputs, and test results

4. Remember that "tests passed" does not mean the goal is accomplished

That is, the system guards at the mechanism level against a common failure of models: mistaking "I produced something" for "I achieved the goal".

A passing test doesn't mean the feature is complete, and written code doesn't mean the requirements are met. This "proxy-evidence acceptance" is one of the most insidious failure modes in an Agent loop.

04

Source code analysis

Codex is open source, so it's straightforward to see how the feature is implemented. I pulled the latest code and had an AI read through it for me.

The core logic of the feature lives in codex-rs/core/src/goals.rs, about 1,570 lines of Rust.

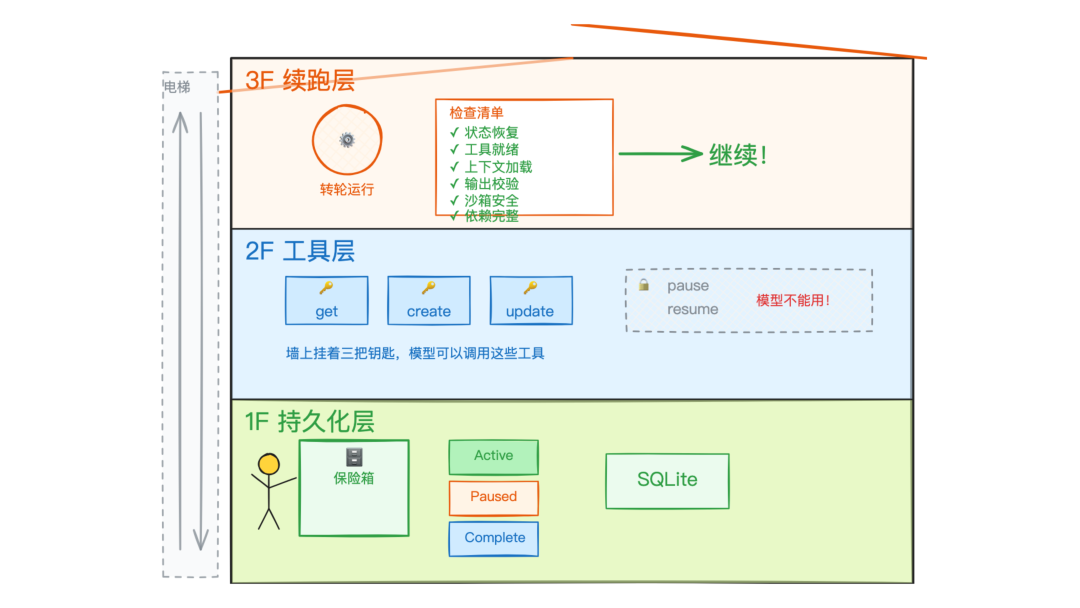

The whole system has three layers:

Persistence layer: Goal state is stored in a SQLite database, so it is not lost when the process restarts or the thread is resumed. A goal has four states: Active, Paused, BudgetLimited, and Complete.

Tool layer: The system exposes three tools to the model: get_goal (read the current goal), create_goal (create a goal), and update_goal (update the goal's status).

Three-layer architecture

There's a key design decision here: the model can only mark a goal as "complete"; it cannot pause or resume it. Pausing and resuming is something only the user can do.

The logic in the source code is:

```rust
if args.status != ThreadGoalStatus::Complete {
    return Err(FunctionCallError::RespondToModel(
        "update_goal can only mark the existing goal complete"
    ));
}
```

Why is it designed that way?

This is to prevent the model from getting "lazy" and granting itself a pause when it feels it is almost done (sound familiar?).

So once you set a goal, either the model accomplishes it or you call it off yourself.

There is no third way.
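The permission split can be sketched as a transition function. This is a simplified illustration, not the code in goals.rs: `Actor`, `GoalStatus`, and `apply` are hypothetical names, and the set of user transitions shown is only the pause/resume pair the article mentions.

```rust
/// The four goal states named in the article.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum GoalStatus {
    Active,
    Paused,
    BudgetLimited,
    Complete,
}

/// Who is requesting the change. Hypothetical type for this sketch.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Actor {
    User,
    Model,
}

/// Decide whether `actor` may move a goal from `current` to `requested`.
pub fn apply(
    actor: Actor,
    current: GoalStatus,
    requested: GoalStatus,
) -> Result<GoalStatus, &'static str> {
    match (actor, current, requested) {
        // The model may only declare the goal complete.
        (Actor::Model, _, GoalStatus::Complete) => Ok(GoalStatus::Complete),
        (Actor::Model, _, _) => Err("update_goal can only mark the existing goal complete"),
        // Pausing and resuming are reserved for the user.
        (Actor::User, GoalStatus::Active, GoalStatus::Paused) => Ok(GoalStatus::Paused),
        (Actor::User, GoalStatus::Paused, GoalStatus::Active) => Ok(GoalStatus::Active),
        // Anything else is out of scope for this sketch.
        (Actor::User, _, _) => Err("transition not modeled in this sketch"),
    }
}
```

Encoding the rule as a match over (actor, current, requested) makes the asymmetry explicit: the model has exactly one way out of a goal.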

Continuation layer: This is the core of the feature. At the end of each round, the system runs a chain of checks:

1. Whether the goals feature is enabled

2. Whether there is a currently active goal

3. Whether another round is already running

4. Whether there are pending messages in the queue

5. Whether continuation has been suppressed (zero tool calls in the previous round)

6. Whether the session is in Plan mode (goals are ignored in Plan mode)

If all checks pass, the system injects a developer message containing the goal description, the budget usage, and the completion audit protocol. Then a new round begins.

There's one more detail: the token count only includes non-cached input tokens plus output tokens. Cache hits do not count towards the budget. That is, the budget tracks "newly added work", and rereading existing context is free.
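The check chain above can be sketched as a sequence of early returns. Everything here is hypothetical naming (`RoundEndState`, `SkipReason`, `should_continue`); the sketch also assumes that pending messages block automatic continuation, which the article does not state explicitly.

```rust
/// Hypothetical snapshot of the session at the end of a round.
/// Each field mirrors one of the six checks in the list.
#[derive(Debug, Clone, Copy)]
pub struct RoundEndState {
    pub goals_feature_enabled: bool,
    pub has_active_goal: bool,
    pub another_round_running: bool,
    pub pending_messages: usize,
    pub continuation_suppressed: bool,
    pub plan_mode: bool,
}

/// Why the loop did not start another round.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum SkipReason {
    FeatureDisabled,
    NoActiveGoal,
    RoundInProgress,
    PendingMessages,
    Suppressed,
    PlanMode,
}

/// Run the checks in order; the first failing check decides the outcome.
pub fn should_continue(state: &RoundEndState) -> Result<(), SkipReason> {
    if !state.goals_feature_enabled {
        return Err(SkipReason::FeatureDisabled);
    }
    if !state.has_active_goal {
        return Err(SkipReason::NoActiveGoal);
    }
    if state.another_round_running {
        return Err(SkipReason::RoundInProgress);
    }
    // Assumption: queued messages take priority over automatic continuation.
    if state.pending_messages > 0 {
        return Err(SkipReason::PendingMessages);
    }
    if state.continuation_suppressed {
        return Err(SkipReason::Suppressed);
    }
    if state.plan_mode {
        return Err(SkipReason::PlanMode);
    }
    Ok(())
}
```

Returning a reason instead of a bare boolean is a choice made for this sketch; it makes it easy to log why a goal stopped advancing.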
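As a worked example of the accounting rule, here is a minimal sketch. The struct and field names are assumptions, and it assumes the reported input total includes cache hits, so the cached portion is subtracted.

```rust
/// Hypothetical usage report for one model call.
/// Assumption: `input_tokens` is the total input, including cache hits.
#[derive(Debug, Clone, Copy)]
pub struct TokenUsage {
    pub input_tokens: u64,
    pub cached_input_tokens: u64,
    pub output_tokens: u64,
}

/// Tokens charged against the goal budget:
/// non-cached input plus output. Cache hits are free.
pub fn budget_tokens(usage: TokenUsage) -> u64 {
    let non_cached_input = usage.input_tokens.saturating_sub(usage.cached_input_tokens);
    non_cached_input + usage.output_tokens
}
```

With 10,000 input tokens of which 9,000 are cache hits, plus 500 output tokens, this sketch charges 1,500 tokens to the budget rather than 10,500.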

The developer of this feature is Eric Traut, the author of Pyright, the Python type checker, whom Felipe Coury calls "one of the GOATs you get to work with every day".

05

Experimental feature

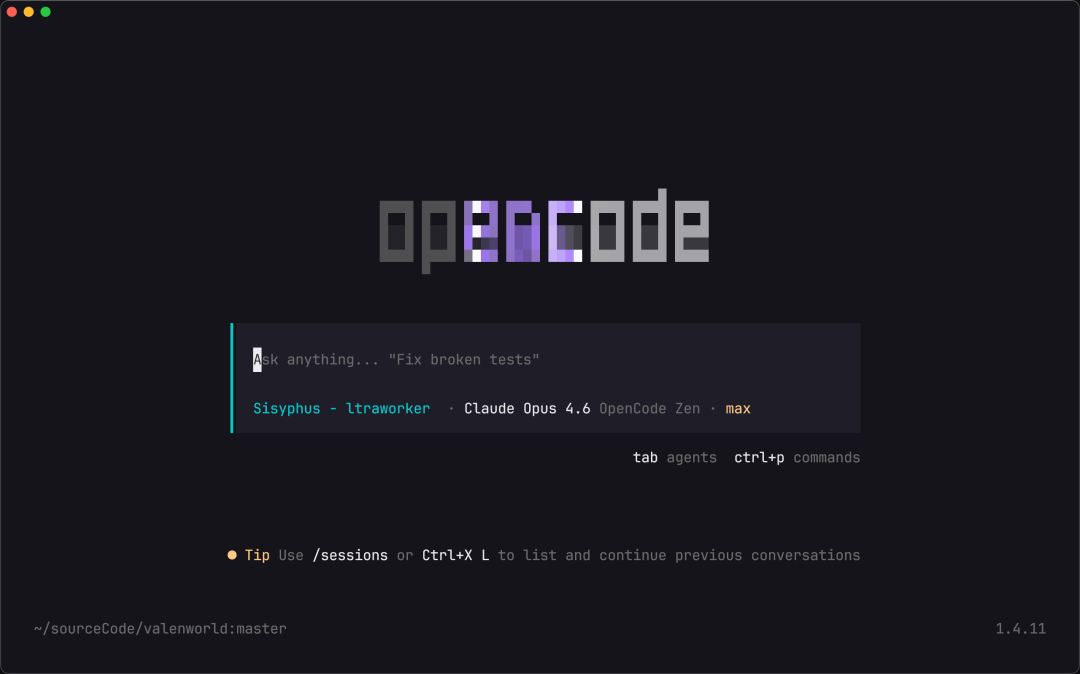

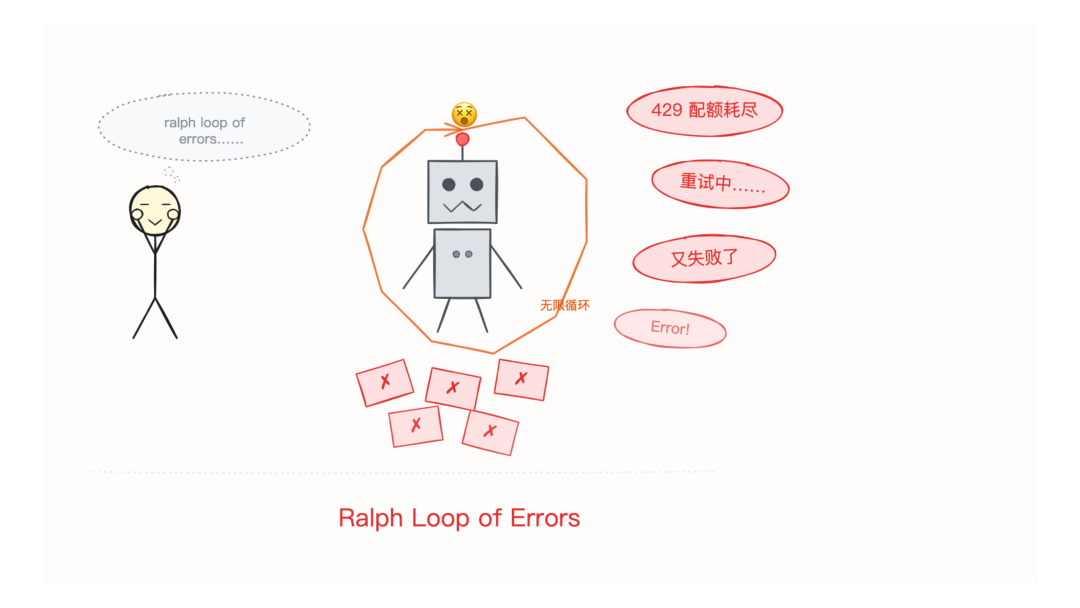

Of course, /goal is still an experimental feature and has some limitations at the moment.

Currently it is only available in the CLI; the Codex desktop app doesn't have it yet.

Also, if the API quota runs out, /goal can fall into an awkward loop bug: it keeps sending requests, keeps getting quota-exhausted errors, and keeps retrying. Some people call it a "ralph loop of errors".

In Plan mode, the goal system is automatically ignored. That is, you can't plan and pursue a goal at the same time; the two modes are mutually exclusive.

That's understandable: a goal whose job is to set a good goal would be a bit strange.

The completion check also still suffers from "premature closure". Sometimes the model considers a goal complete just because it has produced an artifact, even though it has only done the surface work.

06

Most importantly, the goal.

The /goal feature is small in code size and simple in concept. But it represents a direction that I think is more meaningful than most "major updates".

Because what it changes is not the boundary of AI's capabilities, but the collaboration interface between people and AI.

From "you give one instruction, I do one step" to "you set the goal, I own the whole process".

"Conversation" has become "delegation".

The shift to a begin-with-the-end-in-mind mindset behind this deserves more thought than the feature itself.

In my own programming, I am now much less involved in the hands-on coding. I spend more time and energy on two questions: what exactly am I trying to accomplish, and how do I verify that this goal has actually been accomplished?

How to set the right goal and how to figure out exactly what you want to do: that is the judgment and work that is harder and matters more.

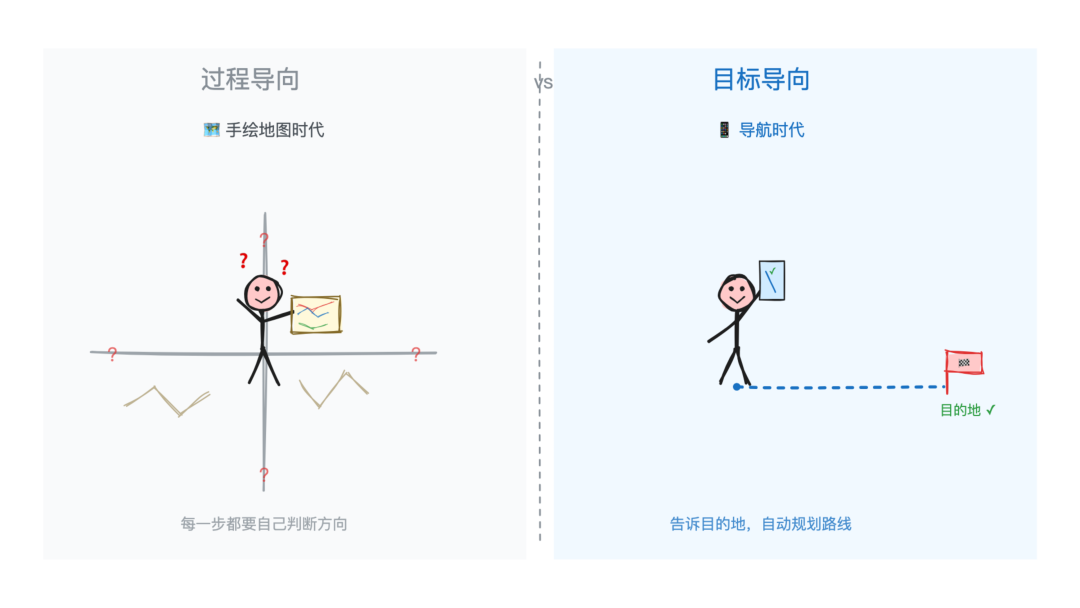

In the past, we were used to a 'process-oriented' way of working: planning steps first, then executing them step by step, with people watching every step.

But AI Agents are flipping that logic on its head: you only need to define the end point. The path is the Agent's own business.

The difference between this and traditional programming is like the difference between a navigation app and a hand-drawn map. In the hand-drawn map era, you had to plan your own route and memorize every intersection. With navigation, you only enter the destination; which way to go, how to avoid traffic, and where to turn right are the navigation app's job.

Process Oriented vs Goal Oriented


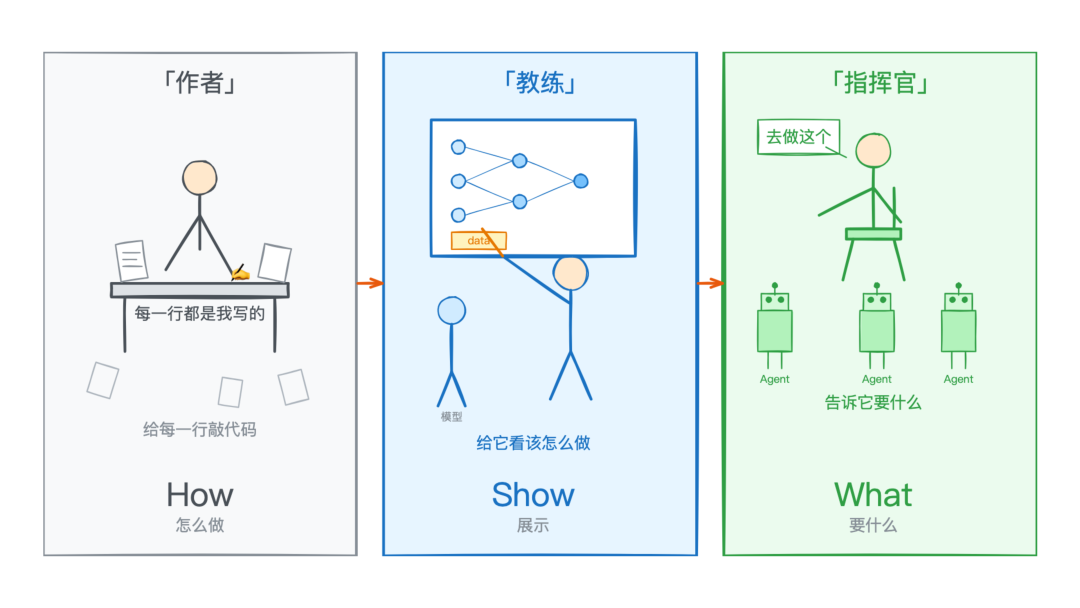

Karpathy expressed a similar judgment in his recent AI Ascent talk. He summarized the trend as Software 3.0 (see previous post: Software 3.0 is here!):

“While traditional software automates what you can specify, AI automates what you can verify.”

The main thread running through the three generations of software paradigms is really the change in how humans are involved.

From how (tell the machine what to do), to show (show the machine what to do), to what (tell the machine what you want).

Author → Coach → Commander

The /goal feature is the latest footnote to this thread.

You define the goal, which is the verifiable end state. The Agent finds the path itself. You validate the result; it takes care of the process.

When an Agent can manage its own progress and actually reach the goals set for it, there is only one thing left for humans to do well:

Figure out what you really want.

◇ ◆ ◇

- Codex CLI open source repository: https://github.com/openai/codex

- Original post by Felipe Coury: https://x.com/fcoury/status/2049917871799636201

- Ralph Loop community implementation: https://github.com/Th0rgal/open-ralph-wiggum