Login-free GPT-3.5 to API

https://github.com/missuo/FreeGPT35

https://github.com/aurora-develop/aurora

https://github.com/Dalufishe/freegptjs

https://github.com/PawanOsman/ChatGPT

https://github.com/nashsu/FreeAskInternet

https://github.com/aurorax-neo/free-gpt3.5-2api

https://github.com/aurora-develop/free-gpt3.5-2api

https://github.com/LanQian528/chat2api

https://github.com/k0baya/FreeGPT35-Glitch

https://github.com/cliouo/FreeGPT35-Vercel

https://github.com/hominsu/freegpt35

https://github.com/xsigoking/chatgpt-free-api

https://github.com/skzhengkai/free-chatgpt-api

https://github.com/aurora-develop/aurora-glitch (uses Glitch resources)

https://github.com/fatwang2/coze2openai (Coze to API, GPT-4)

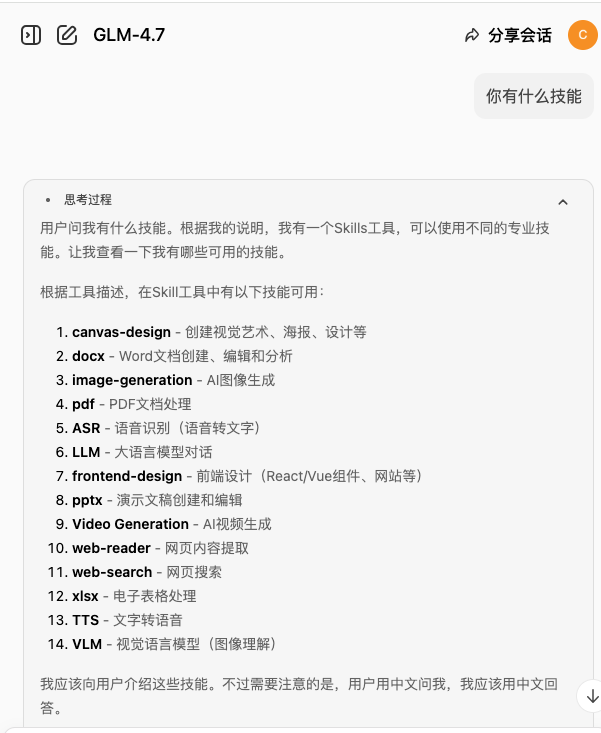

Reverse-engineered Chinese models

DeepSeek interface to API: deepseek-free-api

Moonshot AI (Kimi.ai) interface to API: kimi-free-api

StepFun (Yuewen / StepChat) interface to API: step-free-api

Alibaba Tongyi Qianwen (Qwen) interface to API: qwen-free-api

Zhipu AI (Zhipu Qingyan) interface to API: glm-free-api

Metaso AI interface to API: metaso-free-api

ByteDance Doubao interface to API: doubao-free-api

ByteDance Jimeng AI interface to API: jimeng-free-api

iFlytek Spark interface to API: spark-free-api

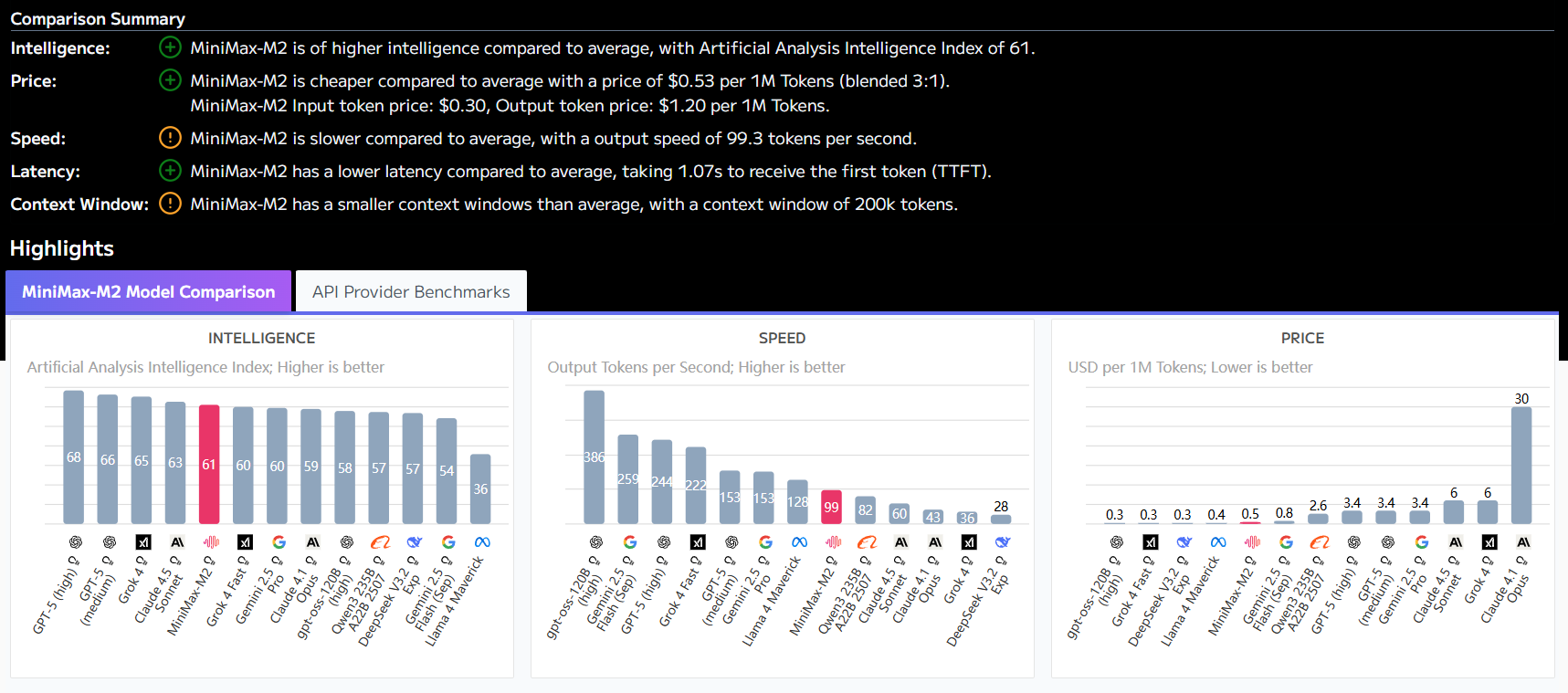

MiniMax (Hailuo AI) interface to API: hailuo-free-api

Emohaa interface to API: emohaa-free-api

Login-free programs with a chat interface

https://github.com/Mylinde/FreeGPT

Working code for a Cloudflare Worker; bind your own domain name to it and try it out:

```javascript
addEventListener("fetch", (event) => {
  event.respondWith(handleRequest(event.request));
});

async function handleRequest(request) {
  // Only accept POST requests to the OpenAI-style chat completions path
  if (request.method === "POST" && new URL(request.url).pathname === "/v1/chat/completions") {
    const url = "https://multillm.ai-pro.org/api/openai-completion"; // target API address

    // Copy the incoming headers and force a JSON content type
    const headers = new Headers(request.headers);
    headers.set("Content-Type", "application/json");

    // Parse the request body as JSON and read the stream flag
    let requestBody;
    try {
      requestBody = await request.json();
    } catch (e) {
      return new Response(JSON.stringify({ error: "Invalid JSON body" }), { status: 400 });
    }
    const stream = requestBody.stream;

    // Build the upstream request with the re-serialized body
    const newRequest = new Request(url, {
      method: "POST",
      headers: headers,
      body: JSON.stringify(requestBody),
    });

    try {
      // Send the request to the target API
      const response = await fetch(newRequest);

      // Choose the response type based on the stream flag
      if (stream) {
        // Streaming: pipe the upstream body through unchanged
        const { readable, writable } = new TransformStream();
        response.body.pipeTo(writable);
        return new Response(readable, {
          status: response.status,
          headers: response.headers,
        });
      } else {
        // Non-streaming: return the upstream response as-is
        return new Response(response.body, {
          status: response.status,
          headers: response.headers,
        });
      }
    } catch (e) {
      // The upstream request failed; return an error
      return new Response(JSON.stringify({ error: "Unable to reach the backend API" }), { status: 502 });
    }
  } else {
    // Wrong method or path
    return new Response("Not found", { status: 404 });
  }
}
```

Example POST request:

```shell
curl --location 'https://ai-pro-free.aivvm.com/v1/chat/completions' \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "gpt-4-turbo",
    "messages": [
      { "role": "user", "content": "Why Lu Xun hit Zhou Shuren" }
    ],
    "stream": true
  }'
```
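For reference, here is a sketch of the same request from JavaScript, plus a small parser that joins the `delta.content` pieces of an OpenAI-style Server-Sent Events body. It assumes Node.js 18+ (global `fetch`) and that the endpoint answers `stream: true` with `data: {...}` lines ending in `data: [DONE]`; the helper names `buildChatRequest` and `parseSseContent` are hypothetical.

```javascript
// Hypothetical helper: builds the same request as the curl example.
function buildChatRequest(baseUrl, model, prompt, stream = true) {
  return {
    url: baseUrl + "/v1/chat/completions",
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({
        model,
        messages: [{ role: "user", content: prompt }],
        stream,
      }),
    },
  };
}

// Hypothetical helper: concatenates delta.content from an OpenAI-style SSE body
// (assumes one JSON object per "data:" line).
function parseSseContent(text) {
  let result = "";
  for (const line of text.split("\n")) {
    if (!line.startsWith("data:")) continue; // skip blank separator lines
    const payload = line.slice("data:".length).trim(); // also strips a trailing \r
    if (payload === "[DONE]") break; // end-of-stream sentinel
    const chunk = JSON.parse(payload);
    const delta = chunk.choices?.[0]?.delta ?? {};
    if (typeof delta.content === "string") result += delta.content;
  }
  return result;
}

// Usage (needs network access):
// const { url, init } = buildChatRequest("https://ai-pro-free.aivvm.com", "gpt-4-turbo", "Hello");
// const res = await fetch(url, init);
// console.log(parseSseContent(await res.text()));
```

Reading the whole body with `res.text()` keeps the sketch short; a real client that wants tokens as they arrive would read `res.body` incrementally instead.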

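A variant that adds CORS support and pseudo-streaming: when `stream` is true, it waits for the complete upstream reply and then re-emits it as Server-Sent Events, so the output arrives more slowly: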

```javascript
addEventListener("fetch", (event) => {
  event.respondWith(handleRequest(event.request));
});

async function handleRequest(request) {
  // Answer CORS preflight requests
  if (request.method === "OPTIONS") {
    return new Response(null, {
      headers: {
        "Access-Control-Allow-Origin": "*",
        "Access-Control-Allow-Headers": "*",
      },
      status: 204,
    });
  }

  // Only accept POST requests to the OpenAI-style chat completions path
  if (request.method === "POST" && new URL(request.url).pathname === "/v1/chat/completions") {
    const url = "https://multillm.ai-pro.org/api/openai-completion"; // target API address

    // Copy the incoming headers and force a JSON content type
    const headers = new Headers(request.headers);
    headers.set("Content-Type", "application/json");

    // Parse the request body as JSON and read the stream flag
    let requestBody;
    try {
      requestBody = await request.json();
    } catch (e) {
      return new Response(JSON.stringify({ error: "Invalid JSON body" }), { status: 400 });
    }
    const stream = requestBody.stream;

    // Build the upstream request with the re-serialized body
    const newRequest = new Request(url, {
      method: "POST",
      headers: headers,
      body: JSON.stringify(requestBody),
    });

    try {
      // Send the request to the target API
      const response = await fetch(newRequest);

      if (stream) {
        // Read the complete reply in one go (assumes the upstream returns
        // a full JSON body even when stream is true)
        const originalJson = await response.json();
        const encoder = new TextEncoder();
        // Encode one Server-Sent Events "data:" line
        const sse = (payload) => encoder.encode("data: " + payload + "\n\n");

        // Re-emit the reply as OpenAI-style chat.completion.chunk events
        const readableStream = new ReadableStream({
          start(controller) {
            // Opening chunk with an empty delta
            const startData = createDataChunk(originalJson, "start");
            controller.enqueue(sse(JSON.stringify(startData)));

            // The whole message content, sent as a single chunk
            const content = originalJson.choices[0].message.content;
            const newData = createDataChunk(originalJson, "data", content);
            controller.enqueue(sse(JSON.stringify(newData)));

            // Closing chunk with finish_reason "stop", then the [DONE] sentinel
            const endData = createDataChunk(originalJson, "end");
            controller.enqueue(sse(JSON.stringify(endData)));
            controller.enqueue(sse("[DONE]"));

            // Mark the end of the stream
            controller.close();
          },
        });

        return new Response(readableStream, {
          headers: {
            "Access-Control-Allow-Origin": "*",
            "Access-Control-Allow-Headers": "*",
            "Content-Type": "text/event-stream",
            "Cache-Control": "no-cache",
            "Connection": "keep-alive",
          },
        });
      } else {
        // Non-streaming: return the upstream response as-is
        return new Response(response.body, {
          status: response.status,
          headers: response.headers,
        });
      }
    } catch (e) {
      // The upstream request (or parsing its reply) failed; return an error
      return new Response(JSON.stringify({ error: "Unable to reach the backend API" }), { status: 502 });
    }
  } else {
    // Wrong method or path
    return new Response("Not found", { status: 404 });
  }
}

// Build a chat.completion.chunk object for the given phase
function createDataChunk(json, type, content = "") {
  switch (type) {
    case "start":
      return {
        id: json.id,
        object: "chat.completion.chunk",
        created: json.created,
        model: json.model,
        choices: [{ delta: {}, index: 0, finish_reason: null }],
      };
    case "data":
      return {
        id: json.id,
        object: "chat.completion.chunk",
        created: json.created,
        model: json.model,
        choices: [{ delta: { content }, index: 0, finish_reason: null }],
      };
    case "end":
      return {
        id: json.id,
        object: "chat.completion.chunk",
        created: json.created,
        model: json.model,
        choices: [{ delta: {}, index: 0, finish_reason: "stop" }],
      };
    default:
      return {};
  }
}
```
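The pseudo-streaming worker sends the entire reply as one content chunk. If a client expects several deltas, the reply could be split into fixed-size pieces. Below is a self-contained sketch of that idea; the helper name `toSseChunks` and the default piece size of 20 characters are assumptions, and the event shape mirrors the `start` / `data` / `end` objects built by `createDataChunk`.

```javascript
// Hypothetical helper: split a complete OpenAI-style chat completion into
// several SSE "chat.completion.chunk" events of at most `size` characters each.
function toSseChunks(json, size = 20) {
  const base = {
    id: json.id,
    object: "chat.completion.chunk",
    created: json.created,
    model: json.model,
  };
  // Format one SSE event carrying the given delta and finish_reason
  const event = (delta, finishReason) =>
    "data: " +
    JSON.stringify({ ...base, choices: [{ delta, index: 0, finish_reason: finishReason }] }) +
    "\n\n";

  const content = json.choices[0].message.content;
  const events = [event({}, null)]; // opening chunk with an empty delta
  // Array.from iterates by code point, so surrogate pairs (e.g. emoji) are not split
  const chars = Array.from(content);
  for (let i = 0; i < chars.length; i += size) {
    events.push(event({ content: chars.slice(i, i + size).join("") }, null));
  }
  events.push(event({}, "stop")); // closing chunk
  events.push("data: [DONE]\n\n"); // end-of-stream sentinel
  return events;
}

// Inside start(controller), for example:
// const encoder = new TextEncoder();
// for (const e of toSseChunks(originalJson)) controller.enqueue(encoder.encode(e));
```

This still waits for the full upstream reply before the first event is sent, so the delay before output begins is unchanged; only the number of deltas the client receives changes.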