In January 2026, xAI officially launched the Grok Imagine API, a production-grade multimodal video generation service for developers and enterprises. Built on xAI's in-house “Aurora” model, its core capability is generating video with high-fidelity, synchronized audio from text prompts or still images. Compared with other video generation models on the market, such as Google Veo and OpenAI Sora, the Grok Imagine API emphasizes “speed” and “cost-effectiveness”, aiming to address the high latency of traditional AI video generation. The API can generate complex scenes from simple text descriptions and turn static images into video (Image-to-Video). It also generates audio natively: the output video automatically includes background music or dialog synchronized with the on-screen action, with no separate dubbing step. The API is designed to be compatible with the OpenAI SDK, so developers can integrate it into existing applications with very little learning overhead.

Features

- Text-to-Video: Generate short video clips with coherent actions and logic directly from natural language descriptions.

- Image-to-Video: Upload a static image as a reference anchor to generate a dynamic video that preserves the original composition and characters. This is especially useful for making product images or character images “move”.

- Native audio and video synchronization: The model generates the audio track together with the video frames, so sounds (e.g., footsteps, speech, ambient noise) stay precisely synchronized with the on-screen action.

- Video editing and redrawing: Change specific elements of a video (e.g., an object's color or the environment's style) through prompts while retaining the overall motion structure.

- High-speed generation mode: A low-latency inference engine optimized for production environments that supports concurrent processing and greatly reduces the wait from prompt submission to finished video.

- OpenAI SDK compatibility: The API follows industry-standard interface conventions and can be called directly with existing OpenAI client libraries; only the base URL and API key need to change.

Usage Guide

The Grok Imagine API was designed with “seamless integration” in mind, so developers familiar with Python and RESTful APIs will find it easy to get started. Because xAI maintains a high degree of compatibility with the OpenAI SDK, you don't need to install a dedicated xAI library.

1. Prerequisites

Before using the API, you need to complete the following basic setup:

- Register for an account: Visit the official xAI developer console (console.x.ai) and sign up.

- Top up credits: Because video generation consumes a large amount of compute, this API is a paid service. You need to add a payment method and prepay credits.

- Get an API key: On the “API Keys” page of the console, click “Create API Key” and copy the generated key (it begins with xai-). Store it securely, as it is displayed only once.
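Because the key is shown only once and should not be hard-coded, a common pattern is to keep it in an environment variable. The sketch below reads it from `XAI_API_KEY` (the variable name suggested in this guide's client example) and checks only the `xai-` prefix described above. The helper name `load_xai_api_key` is hypothetical, and the prefix check does not confirm that the key is valid or active.

```python
import os


def load_xai_api_key(env_var: str = "XAI_API_KEY") -> str:
    """Read the xAI API key from an environment variable and sanity-check it.

    Only the 'xai-' prefix is checked; this does not prove the key is valid.
    """
    key = os.getenv(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable to your xAI API key.")
    if not key.startswith("xai-"):
        raise ValueError(f"{env_var} does not look like an xAI key (expected an 'xai-' prefix).")
    return key
```

After running `export XAI_API_KEY="xai-..."` in your shell, you can pass `load_xai_api_key()` as the `api_key` argument when creating the client.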

2. Environment setup

Make sure Python and the official openai library are installed in your development environment.

```bash
pip install openai
```

3. Code integration examples

The following walks through a typical workflow for generating a video by calling the Grok Imagine API from Python.

Step 1: Initialize the client

Create a Python file (e.g., generate_video.py) and configure the client to use the xAI endpoint.

```python
import os

from openai import OpenAI

# Initialize the client and point it at the xAI API endpoint
client = OpenAI(
    api_key=os.getenv("XAI_API_KEY"),  # read the key from an environment variable instead of hard-coding it
    base_url="https://api.x.ai/v1",
)
```

Step 2: Build the request

Although xAI is compatible with the OpenAI library, video generation usually requires model-specific parameters. The example below assumes the video model is named grok-imagine-v1 (refer to the official documentation for the current list of model names).

Note: Unlike a text chat, video generation does not usually stream its output. Instead, you typically submit a task and then either wait for the result or receive a video URL directly.
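Rather than hard-coding a model name, you could query the models endpoint and look for candidates. This sketch uses the OpenAI SDK's `client.models.list()`, a standard call that maps to `GET /v1/models`. It assumes that the xAI endpoint lists video models there and that their IDs contain the substring `imagine`; this guide confirms neither. Both helper names are hypothetical.

```python
def pick_video_models(model_ids, keyword="imagine"):
    """Return the model IDs that contain `keyword` (case-insensitive), sorted."""
    return sorted(m for m in model_ids if keyword.lower() in m.lower())


def list_candidate_models(client, keyword="imagine"):
    # client.models.list() returns an iterable page of Model objects, each with an `id`
    ids = [model.id for model in client.models.list()]
    return pick_video_models(ids, keyword)
```

Filtering is kept in a separate pure function so it can be checked without a network call.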

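```python
from openai import OpenAIError

try:
    print("Sending video generation request...")

    # Note: the exact endpoint may differ slightly depending on the SDK version;
    # xAI typically reuses the chat or images interface structure, or provides dedicated extension parameters.
    # This shows the most generic calling pattern.
    response = client.images.generate(
        model="grok-imagine-v1",  # Grok Imagine model (assumed name; check the official docs)
        prompt="A cyberpunk-style cat running through a neon-lit street on a rainy night, cinematic look, 4K resolution",
        size="1280x720",  # video resolution (support for this parameter is assumed)
        quality="standard",
        n=1,  # number of videos to generate
    )

    # Retrieve the returned video URL
    video_url = response.data[0].url
    print(f"Video generated! Download link: {video_url}")

except OpenAIError as e:
    # Base class for errors raised by the openai library (connection, authentication, API status, etc.)
    print(f"Request failed: {e}")
```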
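If generation runs asynchronously, as the note about submitting a task suggests it may, the client has to poll until the job finishes. Below is a generic polling loop under stated assumptions: `get_status` is a hypothetical callable (for example, a wrapper around whatever status endpoint xAI documents) that returns a dict whose `status` is `pending`, `done`, or `failed`. The real response shape may differ. The injectable `sleep` parameter lets the loop be tested without actually waiting.

```python
import time
from typing import Callable, Dict


def wait_for_video(
    get_status: Callable[[], Dict],
    interval: float = 5.0,
    timeout: float = 300.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll a (hypothetical) job-status callable until it finishes; return the video URL."""
    deadline = time.monotonic() + timeout
    while True:
        job = get_status()
        status = job.get("status")
        if status == "done":
            return job["url"]
        if status == "failed":
            raise RuntimeError(f"Video generation failed: {job.get('error', 'unknown error')}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Job still '{status}' after {timeout} seconds")
        sleep(interval)  # wait before checking again
```

A fixed interval keeps the sketch simple; given the seconds-to-tens-of-seconds render times described later, a 5-second interval is a reasonable starting point.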

4. Advanced features: Image-to-Video

If you have an existing image you want to animate, you can pass it as a reference via a URL. This usually means embedding the image link in the prompt or using an interface method that accepts multimodal input.
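For a local image that is not hosted anywhere, OpenAI-style `image_url` parts commonly accept a base64 data URL (`data:<mime>;base64,...`). The sketch below builds one with the standard library and wraps it in the same text-plus-image content structure used in the example that follows. Whether the Grok Imagine video model accepts data URLs is an assumption to verify against the docs, and both helper names are hypothetical.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path: str) -> str:
    """Encode a local image file as a base64 data URL."""
    mime, _ = mimetypes.guess_type(path)  # e.g. "image/png" for .png files
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"Cannot determine an image MIME type for {path!r}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def image_prompt_content(prompt: str, image_url: str) -> list:
    """Build an OpenAI-style multimodal content list: one text part, one image part."""
    return [
        {"type": "text", "text": prompt},
        {"type": "image_url", "image_url": {"url": image_url}},
    ]
```

The resulting list can be used as the `content` of the user message in place of the hard-coded example.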

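```python
# Sketch: generate a video from an image, reusing the client from Step 1.
# The actual parameters should follow the "Vision" or "Imagine" section of the docs at console.x.ai.
response = client.chat.completions.create(
    model="grok-imagine-v1",  # assumed model name, as above
    messages=[
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Make the water in the image flow while keeping the background still"},
                {
                    "type": "image_url",
                    "image_url": {"url": "https://example.com/your-static-image.jpg"},
                },
            ],
        }
    ],
)

# Parse the returned content to obtain the video link
print(response.choices[0].message.content)
```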
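The example above prints the raw message content. If the model returns the video link embedded in text (an assumption; the response could instead carry it in a dedicated field), a small regular expression can pull it out. This hypothetical helper returns the first `http(s)` URL ending in `.mp4`, `.webm`, or `.mov`, including any query string such as a signed-URL token.

```python
import re
from typing import Optional

# http(s) URL ending in a common video extension, then an optional query string.
# \b stops ".mov" from matching inside words such as ".movies".
_VIDEO_URL = re.compile(
    r"https?://[^\s)\"'<>]+?\.(?:mp4|webm|mov)\b(?:\?[^\s)\"'<>]*)?",
    re.IGNORECASE,
)


def extract_video_url(content: Optional[str]) -> Optional[str]:
    """Return the first video URL found in a message's text content, or None."""
    if not content:
        return None
    match = _VIDEO_URL.search(content)
    return match.group(0) if match else None
```

For example, `extract_video_url(response.choices[0].message.content)` returns `None` when no such link is present, so callers can fall back to logging the full response.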

5. Best practices and considerations

- Prompt technique: Grok Imagine follows instructions closely. The more specific the description (lighting and shadow, camera movement, sound ambience), the better the result. For example, explicitly adding “accompanied by the sound of rain and distant thunder” triggers audio generation.

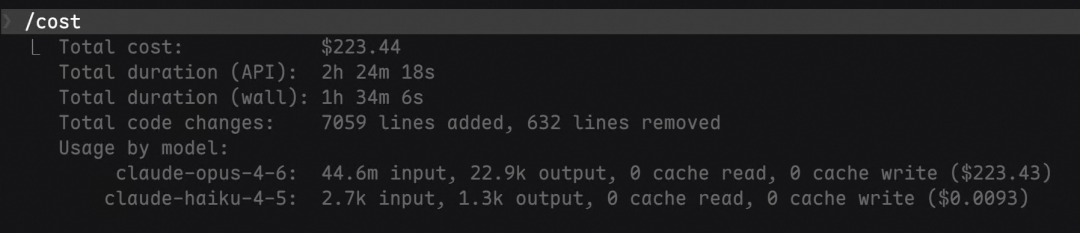

- Cost control: Video generation costs more than text generation. During testing, use a short duration (e.g., 5 seconds) and standard resolution to validate the prompt before generating a long HD video.

- Asynchronous processing: For commercial applications, move API calls into a background task queue (e.g., Celery) so they do not block the front-end UI, since rendering a video can take from a few seconds to tens of seconds.
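The background-queue advice above can be sketched with the standard library. This example swaps Celery for an in-process `concurrent.futures.ThreadPoolExecutor`, which is enough within a single process; a multi-process web deployment would still want a real task queue such as Celery. `generate` is a hypothetical callable that wraps the API call and returns a video URL, and `VideoJobQueue` with its status dicts is an illustrative name, not part of any xAI SDK.

```python
import uuid
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Callable, Dict


class VideoJobQueue:
    """Run blocking generation calls on worker threads so the caller is never blocked."""

    def __init__(self, generate: Callable[[str], str], max_workers: int = 4):
        self._generate = generate  # hypothetical: prompt -> video URL
        self._pool = ThreadPoolExecutor(max_workers=max_workers)
        self._jobs: Dict[str, Future] = {}

    def submit(self, prompt: str) -> str:
        """Queue a prompt and return a job ID immediately."""
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = self._pool.submit(self._generate, prompt)
        return job_id

    def status(self, job_id: str) -> dict:
        """Report pending, failed (with the error message), or done (with the URL)."""
        future = self._jobs[job_id]
        if not future.done():
            return {"status": "pending"}
        error = future.exception()
        if error is not None:
            return {"status": "failed", "error": str(error)}
        return {"status": "done", "url": future.result()}

    def shutdown(self) -> None:
        """Wait for queued jobs to finish and release the worker threads."""
        self._pool.shutdown(wait=True)
```

A web handler would call `submit(prompt)`, return the job ID to the front end right away, and let the UI poll `status(job_id)` until the video is ready.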

Application Scenarios

- Social Media Marketing

Brands can quickly turn static product posters into dynamic advertising videos. For example, a coffee shop can take a static photo of latte art and use the API to generate a short video of steam rising and coffee flowing, automatically paired with the bustling yet cozy ambient sound of the shop, then post it directly on Instagram or TikTok to attract traffic.

- Pre-visualization (Pre-viz)

Film directors and advertising creative directors can use the API during ideation to quickly turn script text into dynamic storyboard videos. Team members can then visualize the camera blocking and atmosphere of a scene without costly live-action tests, greatly improving pre-production efficiency.

- Educational and popular science content production

Educators can turn complex historical scenes or descriptions of scientific phenomena into videos. For example, entering “the scene of the gladiator games at the Colosseum in ancient Rome” generates a recreated scene complete with the sound of cheering spectators, letting students engage with the material immersively and making courseware more interactive and engaging.


Q&A

- Is the Grok Imagine API free?

No. The Grok Imagine API is primarily pay-as-you-go, although xAI may offer a small amount of initial trial credit. Pricing is typically based on the length and resolution of the generated video and the number of calls; see the Billing page of the xAI console for details.

- Does the generated video contain sound?

Yes. This is one of Grok Imagine's core features. The model uses “native audio” technology: it not only generates the visuals but also interprets their content and synthesizes matching sound effects (e.g., footsteps, wind) or even simple dialog, so users don't need to find a separate soundtrack.

- How long can the generated videos be?

The initial version typically generates high-quality clips of about 5 to 10 seconds, which helps keep the output consistent and physically plausible. For longer videos, developers often use a “segmentation and splicing” strategy.

- Can I use the generated videos for commercial purposes?

In general, paid API users have commercial usage rights to the generated content, but they must comply with xAI's Terms of Service, which prohibit generating content such as violence, pornography, or political disinformation.
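Returning to longer videos: one way to implement the “segmentation and splicing” strategy is to generate each 5 to 10 second segment separately and then join the downloaded clips with ffmpeg's concat demuxer. The sketch below only writes the demuxer's list file and builds the command. It assumes ffmpeg is installed and that all clips share the same codec, resolution, and frame rate, which `-c copy` (joining without re-encoding) requires. The helper names are hypothetical.

```python
from pathlib import Path
from typing import List


def write_concat_list(clip_paths: List[str], list_path: str) -> None:
    """Write an ffmpeg concat-demuxer list file with one `file '<path>'` line per clip."""
    lines = []
    for clip in clip_paths:
        # Absolute path; a single quote inside a quoted path is written as '\''
        escaped = str(Path(clip).resolve()).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    Path(list_path).write_text("\n".join(lines) + "\n", encoding="utf-8")


def concat_command(list_path: str, output_path: str) -> List[str]:
    """Build the ffmpeg command that joins the listed clips without re-encoding."""
    # -safe 0 allows the absolute paths written above
    return ["ffmpeg", "-f", "concat", "-safe", "0", "-i", list_path, "-c", "copy", output_path]
```

With the list written, `subprocess.run(concat_command("clips.txt", "full_video.mp4"), check=True)` runs the join. If the segments differ in encoding, drop `-c copy` and let ffmpeg re-encode instead.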