Background

When integrating multiple LLMs through the Sage framework, token consumption is an important cost consideration. In enterprise applications especially, processing a large number of tasks at high frequency can lead to significant API call costs.

Core optimization measures

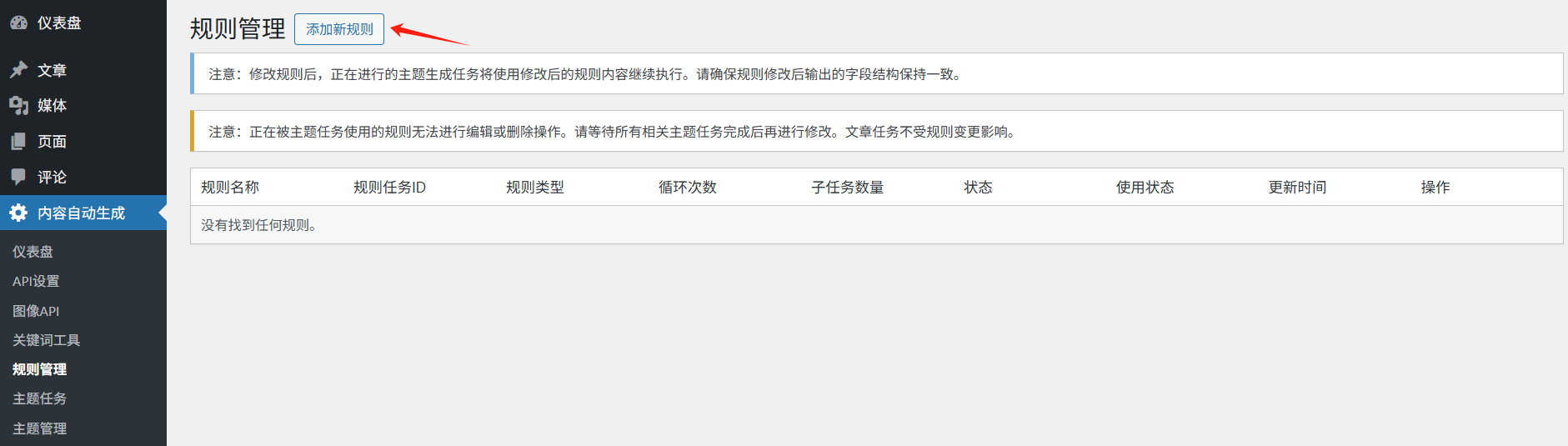

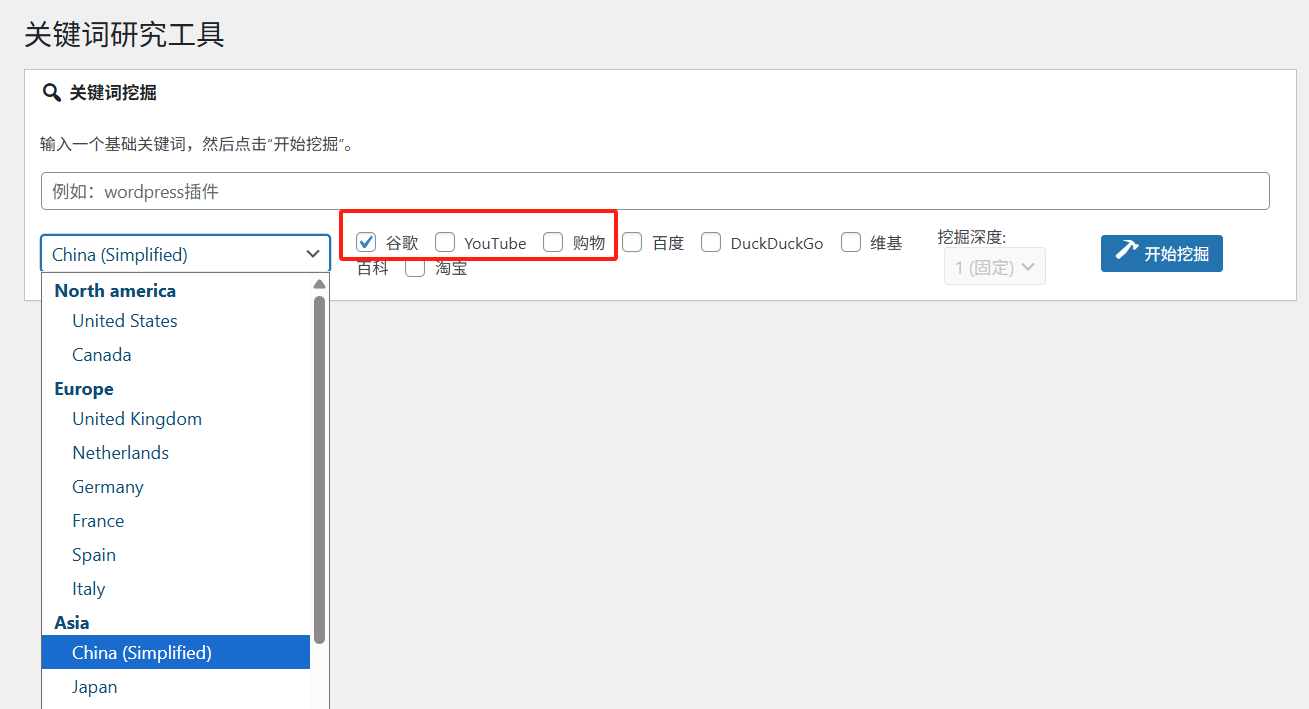

- Enabling Token Monitoring: Sage provides a real-time token statistics panel to view input, output and cached token usage.

- Message Compression Technique: The system has a built-in message compression algorithm that reduces token consumption by 30%-70%.

- Debug Mode Log Analysis: Set SAGE_DEBUG=true to get detailed logs and identify token consumption hotspots.

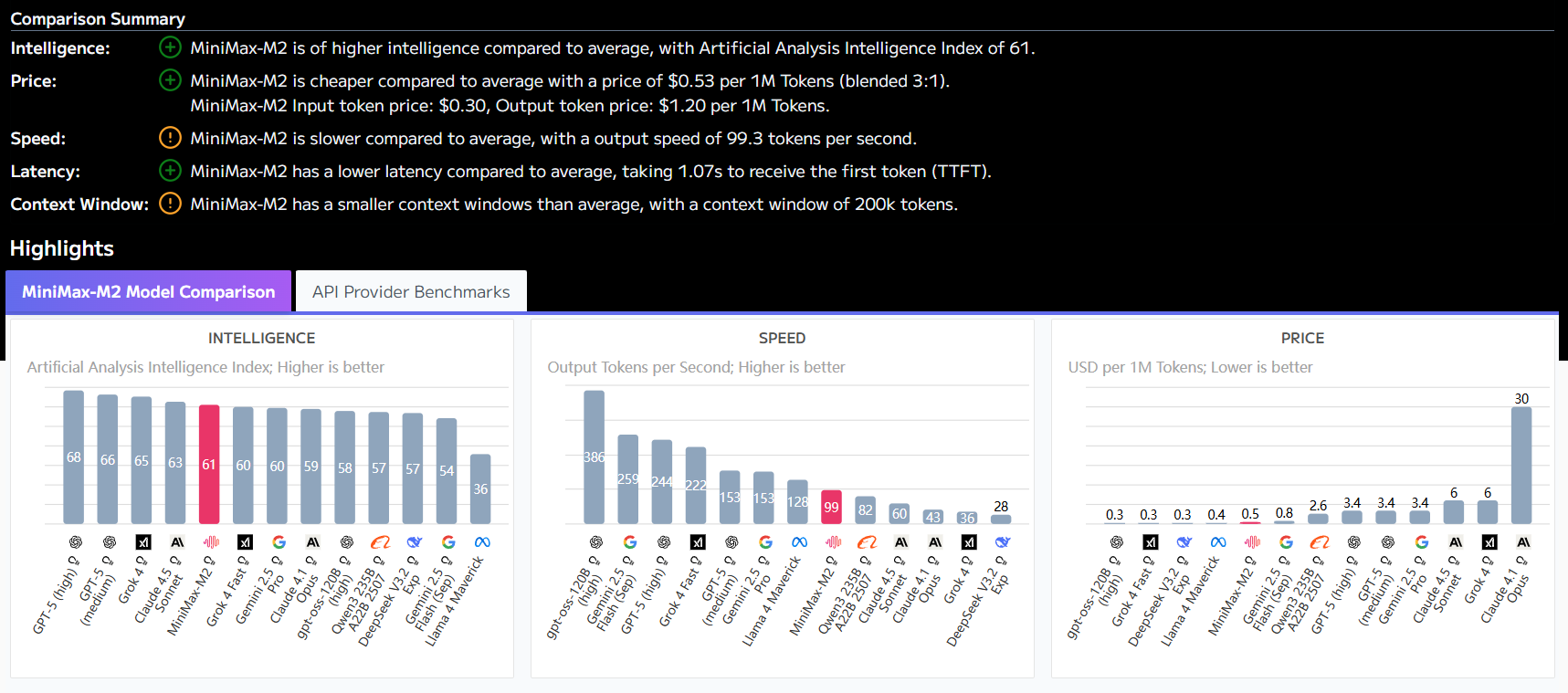

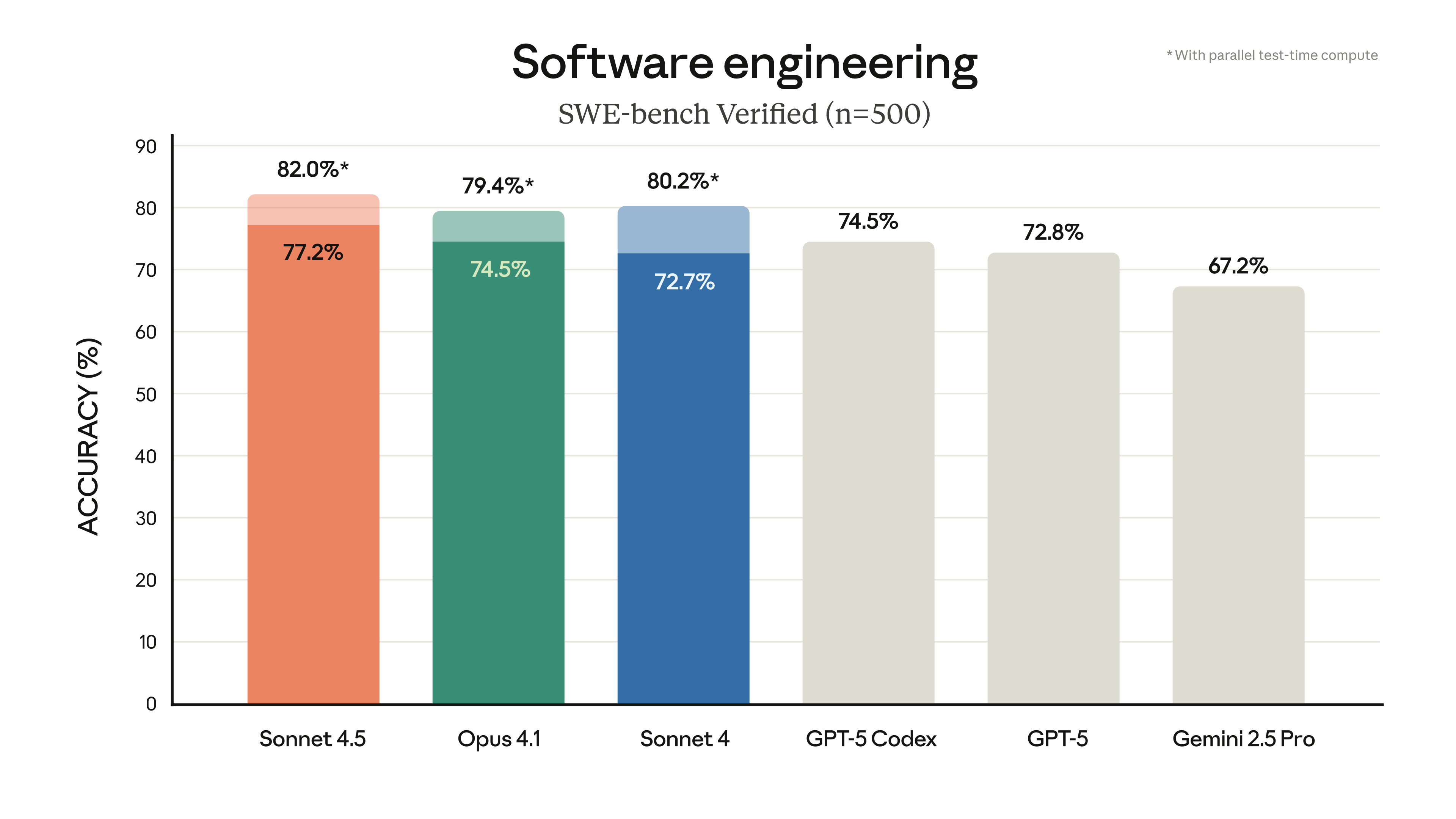

- Model Selection Strategy: Choose language models of different sizes based on task complexity.
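
The compression and model selection measures above can be illustrated with a rough estimate. The following is a minimal Python sketch, not Sage's actual API: the tier names, prices, the 1-10 complexity score, and the threshold of 7 are all hypothetical and exist only to make the arithmetic concrete. The 30%-70% reduction range is the figure quoted above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ModelTier:
    """A hypothetical model tier; names and prices are illustrative, not from Sage."""
    name: str
    price_per_1k_tokens: float  # assumed price in USD per 1,000 tokens


SMALL = ModelTier("small-model", 0.5)
LARGE = ModelTier("large-model", 5.0)


def choose_model(complexity: int) -> ModelTier:
    """Route a task to a tier using an assumed 1-10 complexity score."""
    if not 1 <= complexity <= 10:
        raise ValueError("complexity must be between 1 and 10")
    # Assumed threshold: only tasks scored 7 or higher go to the large model.
    return LARGE if complexity >= 7 else SMALL


def compressed_token_range(tokens: int) -> Tuple[int, int]:
    """Tokens remaining after a 70% reduction (best case) and a 30% reduction (worst case)."""
    best_case = tokens * 30 // 100   # 70% of the tokens removed
    worst_case = tokens * 70 // 100  # 30% of the tokens removed
    return best_case, worst_case


def estimate_cost(tokens: int, tier: ModelTier) -> float:
    """Estimated cost in USD for the given token count on the given tier."""
    return tokens / 1000 * tier.price_per_1k_tokens


if __name__ == "__main__":
    low, high = compressed_token_range(10_000)
    tier = choose_model(complexity=3)
    print(tier.name, estimate_cost(low, tier), estimate_cost(high, tier))
```

Under these assumed prices, a 10,000-token task routed to the small tier after compression would cost a fraction of the same task sent uncompressed to the large tier, which is why the two measures are worth combining.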

Operating instructions

- Enable debug mode by setting SAGE_DEBUG=true in the .env file

- View token usage statistics through the monitoring panel of the web interface

- For non-critical tasks, prefer smaller models

- Regularly analyze logs to optimize task prompt design
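
The log analysis step can be sketched in Python as follows. This is only an illustration under stated assumptions: Sage's debug log format is not described here, so the sketch works on hypothetical usage records (dictionaries with `task`, `input_tokens`, and `output_tokens` keys) that you would first extract from the logs or the monitoring panel yourself. It also assumes `SAGE_DEBUG` from the .env file has been loaded into the process environment.

```python
import os
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def debug_enabled() -> bool:
    """True when SAGE_DEBUG is 'true' in the process environment (case-insensitive)."""
    return os.environ.get("SAGE_DEBUG", "").strip().lower() == "true"


def token_hotspots(records: Iterable[Dict], top_n: int = 3) -> List[Tuple[str, int]]:
    """Sum input and output tokens per task and return the top_n heaviest tasks.

    Each record is a hypothetical dict with 'task', 'input_tokens', and
    'output_tokens' keys; this shape is an assumption, not Sage's log format.
    """
    totals: Dict[str, int] = defaultdict(int)
    for rec in records:
        totals[rec["task"]] += rec["input_tokens"] + rec["output_tokens"]
    # Sort by total tokens, largest first.
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:top_n]


if __name__ == "__main__":
    sample = [
        {"task": "plan", "input_tokens": 1200, "output_tokens": 300},
        {"task": "summarize", "input_tokens": 400, "output_tokens": 100},
        {"task": "plan", "input_tokens": 800, "output_tokens": 200},
    ]
    if not debug_enabled():
        print("SAGE_DEBUG is not enabled; detailed logs may be unavailable.")
    for task, total in token_hotspots(sample):
        print(task, total)
```

Tasks that show up at the top of this ranking are the natural first candidates for prompt redesign or for routing to a smaller model.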

Summary points

With Sage's built-in monitoring features and a sensible usage policy, token consumption can be kept under control, lowering costs without sacrificing task quality.

This answer comes from the article "Sage: An Intelligent Multi-Agent Task Decomposition and Collaboration Framework".