GPT Image 2 is an AI visual creation platform that combines image generation, editing, and refinement. It removes the barrier that traditional tools place between generating an image and modifying it by merging “Text-to-Image” and “Image-to-Image” into a single workflow. Users can generate high-resolution visual assets from simple text prompts, or upload reference images, sketches, or product diagrams to control composition, style, and subject consistency. The platform covers both creating ideas from scratch and reworking or locally refining existing images, with fast, high-quality output. Its prompt-following ability has been optimized so that the resulting image matches the original idea in lighting, mood, and subject portrayal. For designers, marketing teams, and content creators who frequently test ideas or produce product presentations, social media materials, and concept artwork, GPT Image 2 shortens the cycle from concept to final asset export, making it a productivity tool that balances speed with professional-grade quality.

Function List

- Text to Image: Generates high-quality, high-resolution images directly from natural language prompts, capturing the subject, lighting, mood, and compositional details described in the prompt.

- Image to Image: Lets you upload a base image and transform it in a controlled way, changing the painting style or the environment while retaining the core structure of the original image.

- Reference-guided control: Supports uploading inspiration images, white-background product photos, line sketches, and similar material as references that guide the visual style and subject consistency of the generated result.

- High-precision local redrawing and patching (Precise edits): Provides mask-based editing to modify, redraw, or optimize a specific area without disturbing the rest of the image, including removing unwanted elements and adding new objects.

- High-resolution asset export (High-resolution output): The built-in HD upscaling algorithm outputs clear, sharp HD images that meet the commercial-grade quality requirements of product promotion, advertising, marketing, and presentations.

- Fast iteration workspace: Designed for rapid visual creation, it supports quick testing of different prompt combinations and improvement of unsatisfactory drafts, shortening the creative validation cycle.

Usage Guide

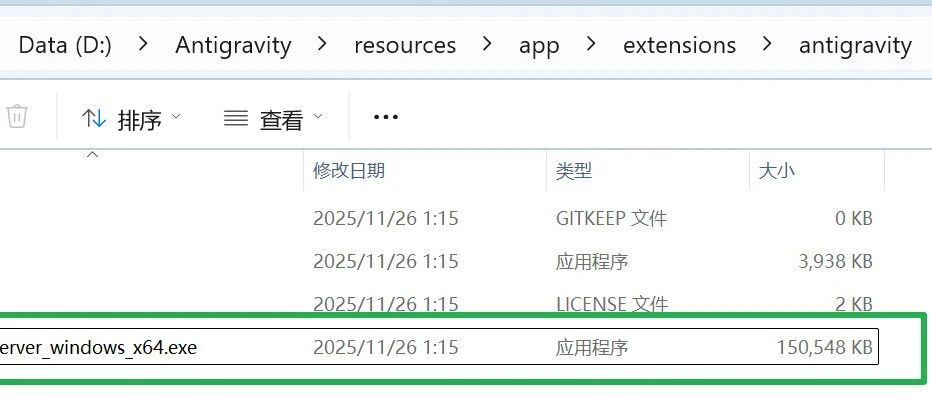

GPT Image 2 Detailed Installation and Operation Guide

GPT Image 2 uses a purely web-based, cloud-native architecture, which eliminates local installation, environment configuration, and client downloads. On Windows, macOS, or a mobile or tablet device, open any major modern browser (such as Google Chrome, Microsoft Edge, or Safari) and enter https://gptimage-2.app/ in the address bar to access and launch your AI creative workspace.

The following walkthrough and tips take you from getting started to advanced, professional-grade image creation:

I. Platform Preparation and Interface Overview

- Account Registration and Login: After entering the website for the first time, click on the “Sign Up/Login” button in the upper right corner. You can use your e-mail address to register quickly. After logging in, the system will automatically assign you an exclusive personal workspace interface and unlock the corresponding generation quota.

- Workspace Layout: The interface is divided into three main functional areas. On the left or top is the Toolbar and Parameter Settings Panel (for entering prompts and adjusting generation values). In the center is the large real-time preview and editing canvas (for applying masks and selecting the areas to edit). On the right or bottom is the Generation History & Gallery (for recalling past versions at any time, comparing them, or feeding them back into the editing process).

II. Core Function 1: Text to Image for Beginners

Operating Scenarios: When you have an abstract concept or idea in mind, but don't have any reference images on hand, use this feature to visualize the text directly into a high-definition image.

- Building Effective Prompts:

In the prompt input box, it is recommended to structure the prompt as “subject description + setting + lighting + atmosphere + rendering style”.

Template: “A Shiba Inu wearing futuristic aviator goggles (the subject), sitting on a neon-filled cyberpunk street (the setting), drizzling rain, cinematic backlighting (the lighting and atmosphere), Unreal Engine 5 rendering, 8k resolution, and extremely high-detail photographic-grade realism (the rendering style).”

- Setting the image size and aspect ratio:

Find the “Aspect Ratio” option in the sidebar and choose the size that best fits your final publishing platform, for example: 1:1 (suitable for social media avatars or square illustrations), 16:9 (suitable for desktop wallpapers, video covers, or PPT presentations), 9:16 (suitable for full-screen mobile posters and short video illustrations).

- Quick generation and selection:

Click the “Generate” button and the cloud servers will return multiple draft variants with different compositions and details within seconds. You can save and download the variant you like best, or send it to the next workflow for further refinement with a single click.
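The recommended prompt structure in this section can be sketched as a small helper that assembles the five components in order. This is only an illustration of the formula: the `PromptParts` class and `build_prompt` function are hypothetical names invented here and are not part of the platform, which is operated through its web interface.

```python
from dataclasses import dataclass


@dataclass
class PromptParts:
    """The five prompt components named in the guide (field names are illustrative)."""
    subject: str
    setting: str
    lighting: str
    atmosphere: str
    style: str


def build_prompt(parts: PromptParts) -> str:
    """Join the non-empty components, in the recommended order, with commas."""
    ordered = [parts.subject, parts.setting, parts.lighting,
               parts.atmosphere, parts.style]
    return ", ".join(p.strip() for p in ordered if p.strip())


# The Shiba Inu template from the guide, split into its components.
example = PromptParts(
    subject="A Shiba Inu wearing futuristic aviator goggles",
    setting="sitting on a neon-filled cyberpunk street",
    lighting="cinematic backlighting",
    atmosphere="drizzling rain",
    style="Unreal Engine 5 rendering, extremely high-detail photographic realism",
)
print(build_prompt(example))
```

Keeping the components separate makes it easy to vary one element at a time (for example, only the lighting) while testing prompt variants, then paste the result into the prompt box.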

III. Core Function 2: Image to Image and Reference-Guided Control

Operating Scenarios: When you need to create something based on a rough draft, a line drawing, or a real product photo, or when you want to dramatically change the style of the original drawing but keep the outline of the subject intact.

- Upload a reference base image:

Switch to the “Image to Image” module, click the upload area, and drag in your local reference image (e.g., a hand-drawn pencil sketch of an apple, or an actual product shot with a messy background).

- Adjust the Denoising Strength:

This is the most critical slider in this feature. It determines how much the AI rewrites the original image:

- Low redraw (0.15 - 0.35): The AI makes only minor modifications, such as coloring plain line drawings or cleaning up noise to improve image quality, while the composition and line edges of the original image are almost completely preserved.

- Medium redraw (0.40 - 0.65): A substantial visual reimagining. The AI retains the basic shape and general layout of the original image but completely changes the object's materials, lighting, and background environment.

- Large redraw (0.70 - 1.00): The original image serves only as a loose guide for color distribution and rough structure, and the AI generates an almost entirely new picture.

- Targeted control with prompts:

Uploading a picture alone is not enough; it must be combined with a precise text prompt. For example, upload a photo of a plain white sneaker, set the redraw strength to 0.5, and enter a prompt describing a sci-fi Martian scene. The AI will preserve the structural features of the shoe while replacing the plain white background with that scene.
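As a quick reference, the three redraw ranges described in this section can be written as a lookup. The sketch below is an assumption-laden illustration: `REDRAW_RANGES` and `redraw_level` are hypothetical names, and because the guide leaves small gaps between the ranges (for example 0.35 to 0.40) and does not describe values below 0.15, such values are reported as "unlisted" rather than guessed.

```python
# Ranges copied from the guide: (label, lowest value, highest value).
REDRAW_RANGES = [
    ("low", 0.15, 0.35),     # minor touch-ups, composition preserved
    ("medium", 0.40, 0.65),  # same shape and layout, new materials and background
    ("large", 0.70, 1.00),   # original used only as a loose guide
]


def redraw_level(strength: float) -> str:
    """Return the guide's label for a strength value, or "unlisted" if none applies."""
    for label, low, high in REDRAW_RANGES:
        if low <= strength <= high:
            return label
    return "unlisted"


for value in (0.2, 0.5, 0.9, 0.37):
    print(value, redraw_level(value))
```

For the sneaker example, a strength of 0.5 falls in the medium range, which matches the described outcome: the shoe's structure is kept while its surroundings change.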

IV. Core Function 3: Local Redrawing and Precise Edits (Inpainting)

Operating Scenarios: You are satisfied with the overall atmosphere of the resulting image, but there is a flaw in one part of it (e.g., a character's fingers are misplaced, there are extra background elements that are not in harmony), or you need to add a new object out of thin air to a perfectly good picture.

- Activating the Mask Tool:

Click the “Edit/Redraw” button in the image preview area. Using the mouse or touchpad, adjust the brush size and paint over the area of the image that needs to be modified. The painted area is usually shown as a highlighted, translucent color block.

- Removing unwanted elements (blemish removal):

To remove unwanted items (e.g., a distracting passerby in the background or an unwanted logo on clothing), paint over the target area and either leave the prompt blank or simply type “background”. The AI analyzes the surrounding texture and fills the area seamlessly, with edge blending that is smoother than the clone stamp in traditional retouching software.

- Adding new elements with precision:

To add a new object to an empty area of the image, first paint a mask of the appropriate size over that area, then describe the object in the prompt box. For example, if you mask an empty area of a desk and type “a steaming latte”, the AI will account for the desk's perspective, light reflections, and ambient shadows, and generate a cup of coffee that blends into the original scene.
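To make the role of the mask concrete, the sketch below shows the basic idea behind mask-based editing: pixels inside the painted area come from newly generated content, and every other pixel is kept from the original. This is a conceptual illustration only, using tiny grayscale grids and a hard-edged mask; the `composite` function is a hypothetical name, the platform's actual algorithm is not documented in this guide, and real tools also blend the mask edges.

```python
def composite(original, generated, mask):
    """Take the generated pixel where mask is 1, keep the original pixel where it is 0."""
    return [
        [g if m else o for o, g, m in zip(orig_row, gen_row, mask_row)]
        for orig_row, gen_row, mask_row in zip(original, generated, mask)
    ]


# 2x3 grayscale images: 10 = original content, 99 = newly generated content.
original = [[10, 10, 10],
            [10, 10, 10]]
generated = [[99, 99, 99],
             [99, 99, 99]]
# 1 marks the painted (masked) pixels to be redrawn.
mask = [[0, 1, 0],
        [0, 1, 1]]

result = composite(original, generated, mask)
print(result)  # [[10, 99, 10], [10, 99, 99]]
```

This is why painting a tight mask matters: anything outside the painted area is guaranteed to stay untouched, while everything inside it is open to change.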

V. Best Practices: Complete Workflow from Concept to High-Definition Commercial Asset Export

In order to produce HD images that meet professional commercial delivery standards, it is recommended that you follow the standard “funnel” workflow below:

- Step 1: Quick Trial and Error and Concept Proofing. Use a short, core prompt without requiring high resolution, and quickly generate a large number of small drafts to verify that the composition, color palette, and visual mood are on the right track.

- Step 2: Basic Composition Locking and Detail Enrichment. Select the most promising draft and send it to the “Image to Image” module with one click as the reference base. Enrich the texture and lighting details in the prompt and set the redraw strength between 0.3 and 0.5 to generate medium-resolution images with a tight composition and consistent light and shadow.

- Step 3: Non-destructive Local Fine-tuning. Use Inpainting to fix the occasional errors produced by the AI (e.g., twisted joints, misshapen text) or to replace background architecture that does not fit, so that every detail of the image stands up to scrutiny.

- Step 4: Upscale and Final Delivery. For finalized images, use the platform's built-in high-resolution upscaling engine. While enlarging the image, the AI fills in realistic micro-textures (e.g., skin pores, fabric weave, natural scratches on metal surfaces) and raises the image to 4K-level clarity. Finally, click “Export/Download” to save the HD image locally for direct use in social media marketing, offline HD poster printing, or large-scale project presentations.
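When planning the upscale step, it can help to estimate how much enlargement a draft needs. The sketch below assumes that "4K" means 3840 pixels on the long edge, which the guide does not specify; `TARGET_LONG_EDGE`, `upscale_factor`, and `upscaled_size` are hypothetical names used only for this arithmetic and do not correspond to any platform setting.

```python
TARGET_LONG_EDGE = 3840  # assumption: "4K" taken as 3840 px on the longer side


def upscale_factor(width: int, height: int, target: int = TARGET_LONG_EDGE) -> float:
    """Scale needed for the longer side to reach the target; never below 1.0."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return max(1.0, target / max(width, height))


def upscaled_size(width: int, height: int, target: int = TARGET_LONG_EDGE) -> tuple:
    """Output dimensions after applying the factor, preserving the aspect ratio."""
    factor = upscale_factor(width, height, target)
    return round(width * factor), round(height * factor)


print(upscaled_size(1920, 1080))  # (3840, 2160)
print(upscaled_size(1024, 1024))  # (3840, 3840)
```

A 16:9 draft at 1920 x 1080 needs a 2x enlargement under this assumption, while a 1024 x 1024 square needs 3.75x, so larger drafts from Step 2 leave less work for the upscaler.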

Application Scenarios

- E-commerce and Product Marketing Packaging

E-commerce sellers and brand marketers can use reference-guided control to place ordinary white-background product photos into high-quality, atmospheric, story-driven scenes without renting an expensive studio, quickly producing high-converting product posters and detail-page visuals.

- Conceptual Art Exploration and Illustration Pre-design

Digital illustrators and game art designers can upload early rough sketches or stick-figure line drawings and, using the Image to Image feature with specific style prompts, explore dozens of color combinations, lighting treatments, and material effects in minutes, greatly accelerating early proof of concept and brainstorming.

- Social Media Operations and High-frequency Advertising Material Production

New media and ad placement teams can respond to trending topics at any time by using Text to Image to quickly generate matching illustrations, ad banners, and eye-catching short video covers that fit the mood of the topic. Combined with local redrawing, specific details can be revised in response to client feedback, sustaining a high frequency of content output and a fast response time.

- Visualizing Workplace Presentations and Creative Proposals

When creating PPTs, business plans, or project proposals, professionals can enter precise descriptions to generate high-definition images that fit the project theme and share a uniform style. This improves the professionalism of presentations and avoids both time-consuming stock image searches and potential disputes over commercial image copyright.

FAQ

- Do I need to download and install a computer client to use GPT Image 2?

Not at all. GPT Image 2 is a cloud-based online workspace for AI image generation and editing. You can use its full functionality directly from a modern web browser (e.g., Chrome, Edge, Safari) on your computer or phone. All AI computation runs on cloud servers, so it does not take up storage space on your device or depend on your computer's graphics card.

- Are there any copyright restrictions on the generated images? Can they be used commercially?

Original images generated from text prompts with GPT Image 2 can often be used in your own commercial projects (e.g., product demos, advertising posters, article illustrations). However, if you upload a copyrighted third-party image as a reference, or explicitly ask in the prompt for content featuring a specific brand logo or IP, there may be a risk of copyright infringement. For the exact scope of the commercial license and the disclaimer, refer to the platform's latest user service agreement.

- When using Text to Image, why does the generated image not match my prompt?

This is usually because the prompt is not specific enough or its structure is unclear. It is recommended to write prompts using the structured formula “subject description + environment background + lighting effect + rendering style”. In addition, GPT Image 2 has been optimized for prompt adherence, so you can iterate and correct the result quickly by adding detailed constraints, removing ambiguous adjectives, or supplying a reference base image through “Image to Image”.

- How do I keep a person's face or specific product features consistent across multiple generated images?

To achieve a high degree of consistency, make full use of the platform's “Image to Image” or “Reference-guided control” features. Upload a high-resolution photo of the target person or product as the reference base and lower the “Denoising Strength” in the parameter settings. When the AI then generates a new pose or a new scene, the core visual features of the original subject will be anchored and preserved as much as possible.