Memory Optimization Solution for Consumer Devices

Four approaches are recommended for working around memory limitations:

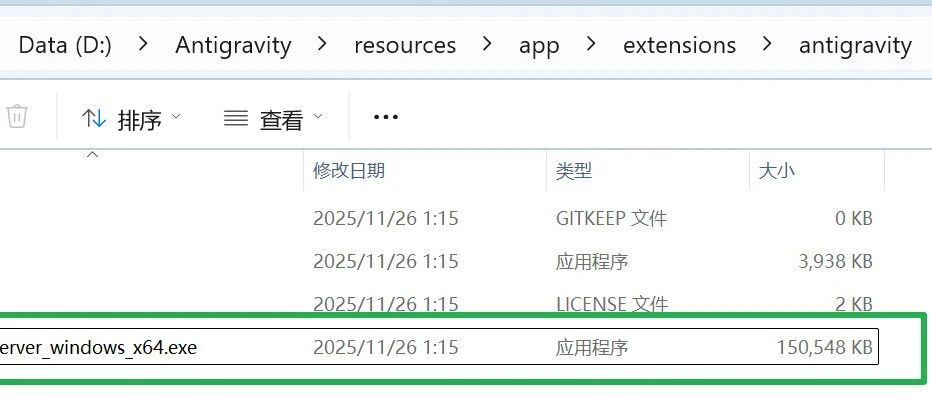

- Model selection: Prefer gpt-oss-20b (21B parameters) and load it with `torch_dtype='auto'`, which automatically enables BF16 mixed precision and saves 50% of memory compared to FP32.
- Quantized deployment: Use the Ollama toolchain (`ollama pull gpt-oss:20b`), which automatically applies 4-bit quantization and reduces VRAM requirements from 16GB to 8GB.
- Layered loading: Set `device_map={'':0}` to force use of the main GPU, in conjunction with `offload_folder='./offload'` to swap unused layers to disk.
- Parameter trimming: Add the `low_cpu_mem_usage=True` and `torch_dtype='auto'` parameters to `from_pretrained()`.
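The loading options named in the list can be combined in a single `from_pretrained()` call. The following is a minimal sketch, not the article's own script: it assumes the Hugging Face `transformers` library with `accelerate` installed, and it assumes the model is published under the hub id `openai/gpt-oss-20b`. The helper names `build_load_kwargs` and `load_model` are hypothetical and exist only for illustration.

```python
from typing import Any, Dict


def build_load_kwargs(offload_dir: str = "./offload") -> Dict[str, Any]:
    """Collect the memory-related loading options described in the text."""
    return {
        "torch_dtype": "auto",          # use the dtype stored in the checkpoint
        "device_map": {"": 0},          # place the whole model on GPU 0
        "offload_folder": offload_dir,  # directory for weights offloaded to disk
        "low_cpu_mem_usage": True,      # avoid a second full copy in CPU RAM
    }


def load_model(model_id: str = "openai/gpt-oss-20b"):
    """Load the model with the options above (model id is an assumption)."""
    # Imported lazily so the kwargs helper works without transformers installed.
    from transformers import AutoModelForCausalLM

    return AutoModelForCausalLM.from_pretrained(model_id, **build_load_kwargs())
```

Calling `load_model()` downloads the checkpoint on first use, so it needs network access and enough disk space. Keeping the options in one dictionary makes it easy to switch `device_map` or the offload directory per device.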

For devices with only 8GB of VRAM, additionally enable `optimize_model()` to perform operator fusion, which further reduces the memory footprint by about 15%.
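As a rough check of what that extra saving means in practice, the arithmetic can be sketched as below. This only applies the figures quoted in the text (an 8GB quantized footprint and a reduction of roughly 15%). The function name `footprint_after_fusion` is hypothetical, and real savings will vary by model and runtime.

```python
def footprint_after_fusion(base_gb: float, reduction: float = 0.15) -> float:
    """Estimate the footprint in GB after a fractional reduction."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return base_gb * (1.0 - reduction)


if __name__ == "__main__":
    quantized_gb = 8.0  # figure quoted in the text for the 4-bit deployment
    print(f"{footprint_after_fusion(quantized_gb):.1f} GB")
```

Under these assumed figures the estimate comes to about 6.8GB, which would leave some headroom on an 8GB card for activations and the KV cache.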

This answer comes from the article "Collection of scripts and tutorials for fine-tuning OpenAI GPT OSS models".