Lossless Claw is an open-source Lossless Context Management (LCM) plugin for OpenClaw agents, developed by Martian Engineering. It is based on Voltropy's LCM research paper. It is designed to stop the loss of conversation history that the “sliding window” mechanism causes when agents hold long conversations or run overnight workflows.

The plugin intercepts messages that are about to overflow the context window and stores them intact, permanently, in a local SQLite database. It also calls a large language model to distill that history into hierarchical summaries organized as a directed acyclic graph (DAG). In subsequent turns, the plugin combines the high-level summaries with recent messages, so the total token count always stays within safe limits.

The plugin also gives agents dedicated retrieval tools. With them, the AI can drill through the summary layers at any time and recall the exact wording of the earliest parts of a conversation, which gives agents genuine long-term memory.

Feature List

- Full local message persistence: Nothing is cropped by a sliding window. Every original message generated during a session is saved verbatim and permanently in the local SQLite database, so no data is lost.

- Automatic DAG hierarchical summaries: Using a large language model of the user's choice, the plugin automatically chunks older messages and distills them into summaries. As messages accumulate, those summaries are condensed further into a hierarchical directed acyclic graph (DAG).

- Dynamic context assembly: Before each turn, the plugin combines the structured historical summaries with recent raw messages. This preserves long-term memory while staying strictly within the token limit of the current model.

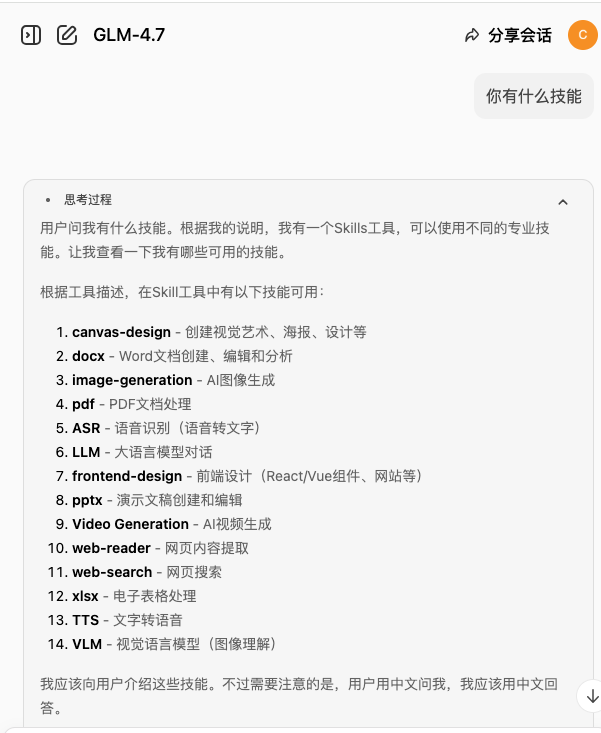

- Deep detail recall: A built-in set of retrieval tools (`lcm_grep`, `lcm_describe`, `lcm_expand`) lets agents follow summary links back to the original conversation history, much like pulling records from an archive.

- Flexible summary model configuration: To reduce API costs, you can assign the “memory summary” task a different model or provider from the main conversation. Set this through environment variables or the plugin configuration.
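The hierarchical summarization in the second bullet can be pictured with a toy model. This is a minimal sketch, not Lossless Claw's implementation. The following are all assumptions made for illustration:

- the chunk size;
- the fan-in;
- the `SummaryNode` structure;
- the `fake_summarize` stand-in for the configured LLM.

Leaf nodes each cover a chunk of raw messages. Groups of same-level nodes are then condensed into parent nodes until a single root remains. Every node keeps the IDs of the raw messages beneath it, and that link is what makes later drill-down possible.

```python
from dataclasses import dataclass, field

CHUNK_SIZE = 4  # assumption: raw messages per leaf summary
FAN_IN = 3      # assumption: same-level nodes condensed into one parent


@dataclass
class SummaryNode:
    node_id: str
    depth: int                                             # 0 = leaf over raw messages
    text: str
    message_ids: list[int] = field(default_factory=list)   # raw messages covered
    children: list[str] = field(default_factory=list)      # child summary IDs


def fake_summarize(texts: list[str]) -> str:
    """Stand-in for the LLM call: joins the first word of each input."""
    return " / ".join(t.split()[0] for t in texts)


def build_dag(messages: list[str]) -> dict[str, SummaryNode]:
    nodes: dict[str, SummaryNode] = {}
    frontier: list[SummaryNode] = []
    # Level 0: chunk raw messages into leaf summaries.
    for start in range(0, len(messages), CHUNK_SIZE):
        ids = list(range(start, min(start + CHUNK_SIZE, len(messages))))
        leaf = SummaryNode(f"d0-{len(frontier)}", 0,
                           fake_summarize([messages[i] for i in ids]), ids)
        nodes[leaf.node_id] = leaf
        frontier.append(leaf)
    # Higher levels: condense groups of FAN_IN nodes until one root remains.
    depth = 0
    while len(frontier) > 1:
        depth += 1
        next_level: list[SummaryNode] = []
        for start in range(0, len(frontier), FAN_IN):
            group = frontier[start:start + FAN_IN]
            parent = SummaryNode(
                f"d{depth}-{len(next_level)}", depth,
                fake_summarize([n.text for n in group]),
                [m for n in group for m in n.message_ids],
                [n.node_id for n in group],
            )
            nodes[parent.node_id] = parent
            next_level.append(parent)
        frontier = next_level
    return nodes


if __name__ == "__main__":
    dag = build_dag([f"msg{i} about topic {i % 3}" for i in range(10)])
    root = dag["d1-0"]
    print(root.children, root.text)  # ['d0-0', 'd0-1', 'd0-2'] msg0 / msg4 / msg8
```

Each parent here has its own distinct children, which makes this a tree, the simplest kind of DAG. A real summarizer would replace `fake_summarize` with a model call.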

Usage Guide

🚀 Core Mechanism: Saying Goodbye to AI “Selective Amnesia”

Before diving into configuration and usage, it helps to understand how Lossless Claw works under the hood. With an agent framework such as OpenClaw, conversations and tasks accumulate, and the number of context tokens quickly approaches the hard context limit of the underlying large language model.

By default, the system uses a “sliding window” strategy. Once the window is full, the oldest messages are truncated and permanently discarded. As a result, if your agent runs all night, by the next morning it will have forgotten the system configuration or core logic you discussed the day before.
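To make this failure mode concrete, here is a toy model of sliding-window truncation. It is only an illustration and not OpenClaw's code. Two things are assumptions:

- Token counts are approximated by word counts; real tokenizers differ.
- The `sliding_window` function name is hypothetical.

The function keeps the newest messages that fit the budget. Everything older is simply gone.

```python
def sliding_window(messages: list[str], budget: int) -> tuple[list[str], list[str]]:
    """Keep the newest messages that fit in `budget` tokens; return (kept, dropped).

    Tokens are approximated as whitespace-separated words, for illustration only.
    """
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message; at the first one that no longer
    # fits, that message and everything older are evicted.
    for i in range(len(messages) - 1, -1, -1):
        cost = len(messages[i].split())
        if used + cost > budget:
            return kept[::-1], messages[: i + 1]
        kept.append(messages[i])
        used += cost
    return kept[::-1], []


if __name__ == "__main__":
    history = ["use port 8443 for the API", "deploy at 02:00", "check logs", "rotate keys"]
    kept, dropped = sliding_window(history, budget=8)
    print(dropped)  # ['use port 8443 for the API']
```

The earliest decision, which port to use, is exactly the kind of detail an overnight agent loses under this strategy.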

Lossless Claw fundamentally addresses this pain point by introducing the **Lossless Context Management (LCM)** architecture:

- Interception and persistence: Old messages are intercepted before they are discarded and written, fully intact, to a SQLite database on the local machine.

- Memory map construction: In the background, the plugin quietly calls the specified model to bundle old messages and distill them into summaries. It then stacks those summaries into a directed acyclic graph (DAG).

- Memory drill-down: Each summary maps back to the original messages in the database. So when the AI needs to recall a detail, it can use the built-in tools to follow the trail and pull the original data back into the active context window.
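The three steps above can be sketched with Python's built-in `sqlite3` module. This is a simplified model under stated assumptions, not the plugin's real schema or code. The following are all hypothetical:

- the `messages` and `summaries` tables and their columns;
- the word-count token estimate;
- the `summarize` stub that stands in for the configured model.

When the window exceeds the budget, the oldest messages are written verbatim to SQLite instead of being discarded. A summary row records which message IDs it covers. The context for the next turn places summaries ahead of the most recent raw messages.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    """Create an in-memory archive with a hypothetical two-table schema."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE messages (id INTEGER PRIMARY KEY, body TEXT NOT NULL)")
    db.execute(
        "CREATE TABLE summaries (id INTEGER PRIMARY KEY, body TEXT NOT NULL,"
        " first_msg INTEGER NOT NULL, last_msg INTEGER NOT NULL)"
    )
    return db


def summarize(bodies: list[str]) -> str:
    """Stand-in for the call to the configured summary model."""
    return f"[summary of {len(bodies)} message(s)]"


def tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words."""
    return len(text.split())


def archive(db: sqlite3.Connection, window: list[str], budget: int) -> list[str]:
    """Evict the oldest messages until the window fits `budget`, persist them
    verbatim, and record a summary row spanning their (contiguous) IDs.
    Returns the messages that stay in the live window."""
    live = list(window)
    evicted: list[str] = []
    while live and sum(tokens(m) for m in live) > budget:
        evicted.append(live.pop(0))
    if evicted:
        ids = [db.execute("INSERT INTO messages (body) VALUES (?)", (body,)).lastrowid
               for body in evicted]
        db.execute(
            "INSERT INTO summaries (body, first_msg, last_msg) VALUES (?, ?, ?)",
            (summarize(evicted), ids[0], ids[-1]),
        )
        db.commit()
    return live


def assemble(db: sqlite3.Connection, live: list[str]) -> list[str]:
    """Context for the next turn: all summaries (oldest first), then live messages."""
    summaries = [body for (body,) in db.execute("SELECT body FROM summaries ORDER BY id")]
    return summaries + live


if __name__ == "__main__":
    db = open_store()
    history = ["use port 8443 for the API", "deploy at 02:00", "check logs", "rotate keys"]
    live = archive(db, history, budget=8)
    print(assemble(db, live))
    # ['[summary of 1 message(s)]', 'deploy at 02:00', 'check logs', 'rotate keys']
```

This sketch budgets only the raw window. A fuller assembler would also count the summaries' tokens against the limit, and it would rely on condensed parent summaries to keep that overhead small. The evicted message is still recoverable verbatim from the `messages` table.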

🛠️ Installation Process: Integrating Lossless Memory from Scratch

1. Check the prerequisites

Before installing the plugin, be sure to check that your local workstation or cloud server meets the following base environment requirements:

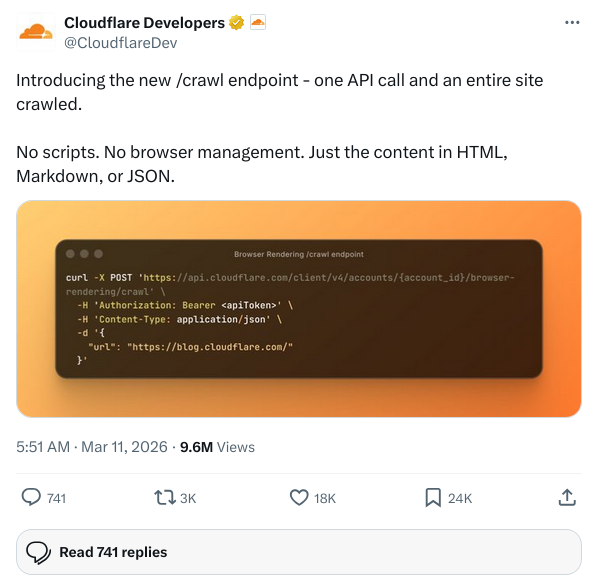

- OpenClaw is installed and running stably, and the installed version includes plugin context engine support.

- Node.js 22 or later is installed on the system.

- At least one large language model provider (LLM provider) has been configured successfully in OpenClaw. The plugin will later use it as the engine for summary generation.
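If you want to script the Node.js check, a small helper can parse the output of `node --version`, which prints a string such as `v22.11.0`. This is a convenience sketch only. The `node_major` and `node_ok` helper names are hypothetical; only the version threshold comes from the requirements above.

```python
import shutil
import subprocess


def node_major(version: str) -> int:
    """Parse a version string like 'v22.11.0' into its major number (22)."""
    return int(version.strip().lstrip("v").split(".")[0])


def node_ok(minimum: int = 22) -> bool:
    """Return True if a `node` binary on PATH reports a major version >= minimum."""
    node = shutil.which("node")
    if node is None:
        return False  # Node.js is not installed or not on PATH
    out = subprocess.run([node, "--version"], capture_output=True, text=True, check=True)
    return node_major(out.stdout) >= minimum


if __name__ == "__main__":
    print("Node.js OK" if node_ok() else "Node.js 22 or later is required")
```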

2. Standard installation (production environments)

We recommend using OpenClaw's official plugin installer for one-step integration. Open a terminal and run the following command:

openclaw plugins install @martian-engineering/lossless-claw

Note: If you run OpenClaw from a local clone of its source code (a local checkout), run the command through the pnpm package manager instead:

pnpm openclaw plugins install @martian-engineering/lossless-claw

3. Local development installation (optional)

If you plan to build on Lossless Claw's source code or adapt it to a specific environment, you can link your local copy of the code into OpenClaw. This way, changes to the code are picked up without reinstalling:

openclaw plugins install --link /path/to/lossless-claw

⚙️ Environment Configuration: Customizing Your Memory Compression Engine

What makes Lossless Claw flexible is that you can assign a separate model to the “summary generation” task alone. Background memory compression consumes a lot of tokens. So you can run the main task on a powerful, expensive model (e.g., GPT-4o or Claude 3.5 Sonnet) and configure a more cost-effective model for compression (e.g., Gemini 1.5 Flash).

Model Fallback and Priority Order

For the memory compaction task, the plugin looks up the model configuration in the following strict order of priority:

- Environment variables (highest priority, recommended): `LCM_SUMMARY_MODEL` / `LCM_SUMMARY_PROVIDER`.

- Plugin configuration: the `summaryModel` / `summaryProvider` fields in the OpenClaw plugin JSON configuration.

- OpenClaw global default model: if neither of the above is set, the plugin falls back to the default compaction model configured in OpenClaw.

- Legacy single-call parameters (legacy hints).
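The lookup order above can be expressed as a first-match chain. This is an illustrative sketch, not the plugin's code.

- Taken from the list above: the environment variable names and the `summaryModel` / `summaryProvider` keys.
- Assumed: the dictionary shapes of the OpenClaw default and legacy-hint inputs.
- Assumed: the rule that provider and model come together from the first source that names a model.

```python
import os
from typing import Mapping, Optional

Target = tuple[Optional[str], Optional[str]]  # (provider, model)


def resolve_summary_target(
    env: Mapping[str, str],
    plugin_config: Mapping[str, str],
    openclaw_default: Mapping[str, str],
    legacy_hint: Mapping[str, str],
) -> Target:
    """Return (provider, model) from the highest-priority source that names a model.

    Assumption: provider and model are taken together from the same source.
    """
    candidates: list[Target] = [
        (env.get("LCM_SUMMARY_PROVIDER"), env.get("LCM_SUMMARY_MODEL")),              # 1. env vars
        (plugin_config.get("summaryProvider"), plugin_config.get("summaryModel")),    # 2. plugin config
        (openclaw_default.get("provider"), openclaw_default.get("model")),            # 3. global default
        (legacy_hint.get("provider"), legacy_hint.get("model")),                      # 4. legacy hints
    ]
    for provider, model in candidates:
        if model:
            return provider or None, model
    return None, None


if __name__ == "__main__":
    # Hypothetical plugin config and default; real env vars take precedence if set.
    print(resolve_summary_target(
        os.environ,
        {"summaryProvider": "google", "summaryModel": "gemini-1.5-flash"},
        {"provider": "openai", "model": "gpt-4o"},
        {},
    ))
```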

Configuration example: injecting environment variables

On Linux or macOS, for example, you can set the following environment variables in the terminal before starting the OpenClaw service. The summary task will then run on the specified cost-efficient model:

export LCM_SUMMARY_PROVIDER="google"

export LCM_SUMMARY_MODEL="gemini-1.5-flash"

openclaw start

🧠 Agent Retrieval Tools: How Do Agents Recall Across Long Time Spans?

Once the plugin is installed and enabled, you as a human user do not need to click through any interface steps. Lossless Claw manages the underlying data automatically and transparently. The key change is that three memory retrieval tools are automatically added to the OpenClaw agent's toolset. To get the most out of them, instruct the agent in the project's initial prompt to call these tools whenever it is unsure about historical information:

- Global search (`lcm_grep`): An agent may need to recall a particular configuration parameter, a previously discussed error log, or a code snippet. It can call this tool to run an exact or regular-expression search across all raw historical messages in the SQLite database.

- Summary outline (`lcm_describe`): An agent may need to understand how a project has progressed over the past few days. It can use this tool to view the DAG summary structure of the current session. This lets the AI grasp the main thread quickly, without spending a huge number of tokens rereading every low-value message.

- Detail expansion (`lcm_expand`): This is the defining feature of lossless restoration. Suppose the agent sees a node such as “Wednesday: Security Group Policies That Determine Network Architecture” in the `lcm_describe` outline, and it needs those policy definitions right now. The agent simply calls `lcm_expand` with that summary node's ID. Lossless Claw then retrieves the original Wednesday conversation transcript from the database and inserts it, word for word, into the agent's current working context.
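To illustrate what the three tools do against the archive, here is a toy version over an in-memory SQLite database. It is a hypothetical sketch, not the plugin's actual tool interfaces. The following are assumptions:

- the table layout;
- the `*_sketch` function names and signatures;
- the use of Python's `re` module for pattern matching.

The search scans raw messages, the outline lists summary nodes, and the expansion follows a node's links back to the verbatim messages it covers.

```python
import re
import sqlite3

# Hypothetical archive layout: raw messages, summary nodes, and links between them.
db = sqlite3.connect(":memory:")
db.executescript(
    """
    CREATE TABLE messages (id INTEGER PRIMARY KEY, body TEXT NOT NULL);
    CREATE TABLE summaries (id TEXT PRIMARY KEY, title TEXT NOT NULL);
    CREATE TABLE summary_messages (summary_id TEXT, message_id INTEGER);
    """
)
db.executemany("INSERT INTO messages VALUES (?, ?)", [
    (1, "Set up the VPC with CIDR 10.0.0.0/16"),
    (2, "Security group: allow tcp/443 from 0.0.0.0/0"),
    (3, "Security group: allow tcp/22 only from 10.0.0.0/16"),
])
db.executemany("INSERT INTO summaries VALUES (?, ?)", [
    ("s1", "Monday: network baseline"),
    ("s2", "Wednesday: Security Group Policies That Determine Network Architecture"),
])
db.executemany("INSERT INTO summary_messages VALUES (?, ?)",
               [("s1", 1), ("s2", 2), ("s2", 3)])


def lcm_grep_sketch(pattern: str) -> list[tuple[int, str]]:
    """Regex search across every archived raw message; returns (id, body) pairs."""
    rx = re.compile(pattern)
    rows = db.execute("SELECT id, body FROM messages ORDER BY id")
    return [(mid, body) for mid, body in rows if rx.search(body)]


def lcm_describe_sketch() -> list[tuple[str, str]]:
    """Outline of summary nodes (id, title) without loading any raw text."""
    return db.execute("SELECT id, title FROM summaries ORDER BY id").fetchall()


def lcm_expand_sketch(summary_id: str) -> list[str]:
    """Return, verbatim and in order, the raw messages a summary node links to."""
    rows = db.execute(
        "SELECT m.body FROM summary_messages sm JOIN messages m ON m.id = sm.message_id "
        "WHERE sm.summary_id = ? ORDER BY m.id",
        (summary_id,),
    )
    return [body for (body,) in rows]


if __name__ == "__main__":
    print(lcm_describe_sketch())
    print(lcm_expand_sketch("s2"))
```

In a real DAG, a summary node may point to child summaries rather than directly to messages, so an expansion would recurse through those children. This flat version skips that step.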

⚠️ Advanced Pitfalls: Session Resets and Lifecycle Management

Many new users complain after installing the plugin: “Why does my agent still have amnesia?” Usually this is not a plugin malfunction. The cause is OpenClaw's own session reset policy being triggered.

Lossless Claw handles the “lossless compression and preservation” of context within a single long-running session. It cannot override or block OpenClaw's global timeout reset rules. If your system is configured to clear and restart a session after a short idle period, the memory map attached to that session goes with it, and the new session starts from zero.

The solution:

Edit OpenClaw's core configuration file and adjust the session lifecycle parameters:

- Set the reset mode: change `session.reset.mode` to `"idle"`. Resets are then based on idle time, so a session is never interrupted while it remains active.

- Substantially increase the idle threshold: raise `session.reset.idleMinutes`, an integer parameter measured in minutes. If you want the agent to carry tasks across days or longer, set it to a sufficiently large value. For example, `10080` (7 × 24 × 60 minutes) lets a session stay idle for up to a full week without being reset.
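The effect of these two settings can be reasoned about with a small model of an idle-based reset check. This is a sketch under assumptions. The `session.reset.mode` and `session.reset.idleMinutes` keys come from the text above. The nested-dictionary layout and the `should_reset` helper are hypothetical, not OpenClaw's implementation.

```python
from datetime import datetime, timedelta

# Hypothetical in-memory mirror of the relevant configuration keys.
config = {"session": {"reset": {"mode": "idle", "idleMinutes": 7 * 24 * 60}}}  # 10080


def should_reset(last_activity: datetime, now: datetime, cfg: dict) -> bool:
    """In 'idle' mode, reset only once the session has been silent longer than idleMinutes."""
    reset = cfg["session"]["reset"]
    if reset["mode"] != "idle":
        raise ValueError("this sketch only models the 'idle' mode")
    return now - last_activity > timedelta(minutes=reset["idleMinutes"])


if __name__ == "__main__":
    last = datetime(2024, 1, 1, 22, 0)       # agent goes quiet at 22:00
    morning = last + timedelta(hours=11)     # checked at 09:00 the next day
    print(should_reset(last, morning, config))  # False: 11 hours < 1 week
```

In this model, a short threshold such as 60 minutes would trigger a reset across the same overnight gap, which matches the “amnesia” described above.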

With your environment and configuration set up as described above, OpenClaw becomes a capable digital assistant. It is no longer held back by the token limit and truly “never forgets”.

Application Scenarios

- Automated overnight workflows

When agents are scheduled to handle large-scale log analysis, task distribution, and progress tracking on their own overnight, conversations run to a very large number of turns. The plugin keeps agents from losing earlier instructions when the window fills up. As a result, the task logic stays coherent through the next morning instead of falling apart.

- Long-running complex projects and code management

In software development spanning several weeks, agents need to remember architecture designs, database schemas, and API specifications decided early on. The plugin persists the full design history to disk and builds summaries over it. This lets the AI recall those architectural specifications accurately at any later point during coding.

- Ongoing customer support and follow-up

When OpenClaw handles customer follow-up, past communication details, specific customer requests, and commitments already made all matter a great deal. The plugin lets the AI trace the original exchanges with a specific customer accurately across thousands of conversation records.

Q&A

- Q: After I start using Lossless Claw, will my saved history leak to an external server?

A: No. All chat history is stored permanently, as plaintext raw data, in a SQLite database on the local computer (or server) running the plugin. The only exception is the summary-generation requests, which send message content to the LLM you configure. Beyond those, no conversation data is transferred to any third-party cloud, which keeps your data private and secure.

- Q: I installed the plugin, so why did my agent still lose its memory the next day?

A: This is usually because OpenClaw's default idle-based session reset was triggered. Lossless Claw handles memory compaction, but it does not change OpenClaw's native session lifetime limits. In the OpenClaw configuration, set `session.reset.mode` to `"idle"` and significantly increase `session.reset.idleMinutes` (measured in minutes).

- Q: Does summary generation incur additional API costs?

A: Yes. The plugin calls a large language model to distill accumulated old messages into directed acyclic graph (DAG) summary nodes, and this process inevitably costs tokens. To reduce operating costs, use an environment variable to configure a separate cost-effective model for summarization (e.g., Gemini 1.5 Flash or Claude 3 Haiku).