Hermes Agent is an open source autonomous AI agent with self-evolving capabilities and deep memory, developed by the AI research organization Nous Research. Unlike coding assistants confined to a specific IDE, or shell chatbots built on a single model API, Hermes Agent is designed as a “digital employee” that runs stably for long periods on your server, cloud host, or local machine. It has a built-in learning loop: as it converses and performs tasks day to day, it automatically summarizes complex work, distills it into persistent skills, and reuses those skills to improve later workflows. It also has a long-term memory system that searches past conversations across sessions and refines its understanding of the user's habits over time.

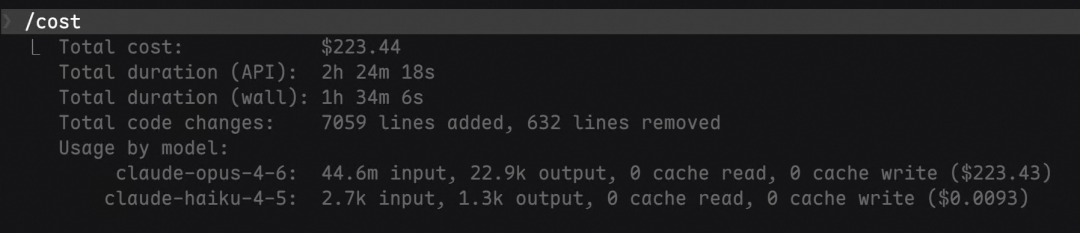

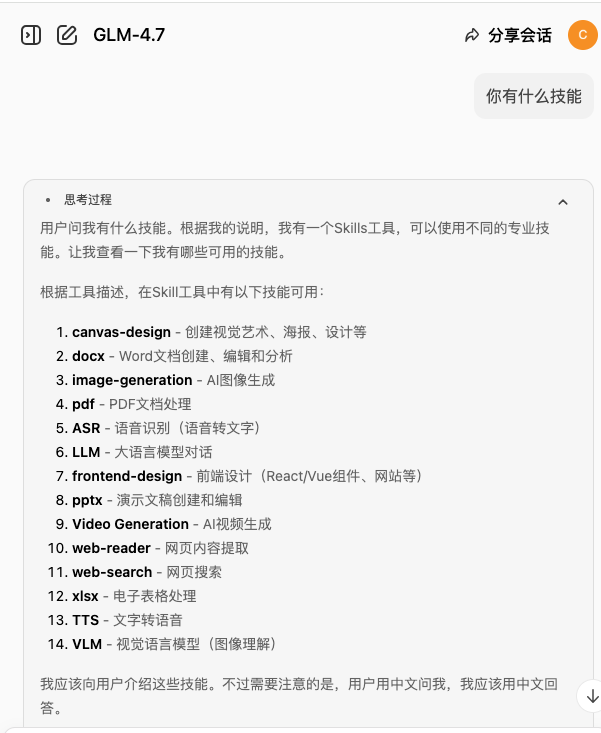

At the model layer, Hermes Agent avoids ecosystem lock-in and ships with a routing system. Without changing any code, users can run it on OpenAI, Anthropic, DeepSeek, Qwen, Kimi, and other providers, or on OpenRouter, which gives access to more than 200 models. At the application layer, it can be turned into a cross-platform messaging gateway that connects to Telegram, Discord, Slack, Feishu, and WeCom (Enterprise WeChat) at the same time, so users can send commands to the server from their phones anytime, anywhere. Combined with its built-in sandbox isolation, browser automation, and natural language scheduling system, Hermes Agent can securely handle complex web search, code troubleshooting, and O&M automation tasks.

Feature List

- Automatic learning and skill evolution: A built-in self-evolving learning loop lets the agent summarize its experience after completing a complex multi-step task, generate a reusable skill, and keep refining that skill in later calls.

- Cross-platform messaging gateway: The built-in Gateway system lets conversations flow across devices through Telegram, Discord, Slack, WhatsApp, Signal, Feishu, WeChat, and Email from a single deployment.

- Zero-code model switching: Compatible with many model providers. A single command switches between OpenAI, Anthropic, DeepSeek, Hugging Face, Kimi, OpenRouter (200+ models), and models deployed on local private endpoints.

- Natural language task scheduling: A built-in Cron automation system. You can ask the agent in ordinary conversation to do work on a schedule, such as “back up the database at 12:00 every night” or “push an industry news summary at 8:00 every morning”, so the work runs unattended.

- Secure multi-sandbox code execution: Flexible Terminal Backend configuration supports running system commands and Python scripts on the local host or in isolated environments such as Docker containers, remote SSH, Singularity, and Modal, to protect the host system.

- Parallel sub-agent delegation: For very complex workflows, it can spawn isolated sub-agents that work in parallel, each with its own conversation context and terminal, which speeds up complex task execution.

- Web browsing and multimodal control: Native web search, high-level browser automation (based on Playwright), image recognition and analysis, text-to-speech (TTS), and multimodal cross-model reasoning.

- MCP server integration with IDEs: In MCP (Model Context Protocol) server mode, it connects directly to Cursor, VS Code, or Claude Desktop and serves as an external brain for the local development environment.

Usage Guide

Hermes Agent is a “companion growth” AI agent that runs in the background long term and is versatile and extensible. This guide walks through installation and deployment, provider configuration, and advanced use of the core features step by step, so that even newcomers can set up their own autonomous agent system.

I. System installation and environment preparation

Hermes Agent supports all major operating systems. Deployment on Linux, macOS, or any cloud VPS (even an inexpensive $5-a-month host) is officially recommended, because these give it full terminal control.

1. Linux / macOS one-click installation process

If you are on a regular Unix-like system, open a terminal and run the official one-click installer script below. The installer is highly automated: it sets up the Python runtime environment and Node.js dependencies and installs the global hermes command. You only need to make sure git is already installed on the system:

curl -fsSL https://raw.githubusercontent.com/NousResearch/hermes-agent/main/scripts/install.sh | bash

When the script finishes, the terminal reports that the configuration has been written. Reload your shell configuration file at this point so that the new command takes effect immediately:

source ~/.bashrc # If you use Zsh, run source ~/.zshrc instead

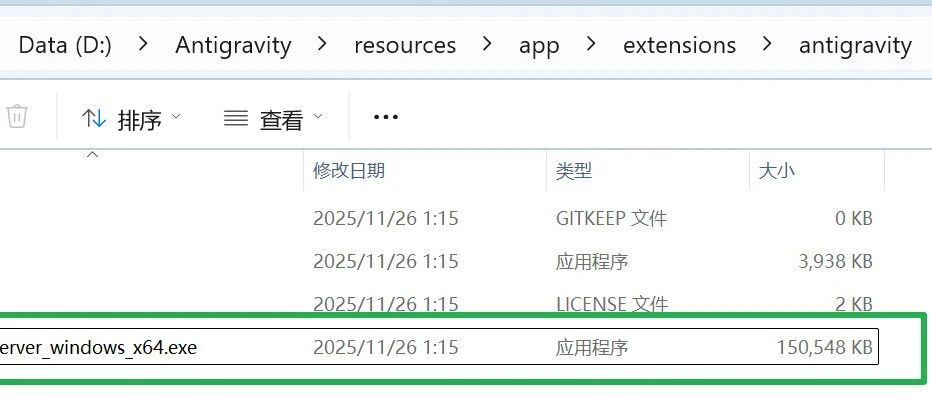
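Before running the installer, it can help to confirm that the prerequisites are on your PATH. The following is a minimal sketch, not part of Hermes Agent: the text above names git as the only requirement, and curl is added here as an assumption because the install command uses it to download the script. The helper name missing_tools is hypothetical.

```python
import shutil

# Tools assumed to be needed on PATH: git is named in the text above,
# curl is an assumption because the install command downloads with it.
REQUIRED_TOOLS = ["git", "curl"]


def missing_tools(tools, which=shutil.which):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Install these before running the installer:", ", ".join(missing))
    else:
        print("All prerequisites found.")
```

The `which` parameter exists only so the lookup can be swapped out in tests; in normal use the default `shutil.which` is what you want.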

2. Installation instructions for Windows users

The project does not currently support running directly from the native Windows command line. Windows users must first enable and install WSL2 (Windows Subsystem for Linux). After installing Ubuntu from the Microsoft Store, open the Terminal application for that subsystem and run the Linux one-click install script as described in the first point above.

3. One-click deployment based on Docker containerization (recommended)

Docker deployment is strongly recommended if you want to keep your server environment clean, or if you need a gateway that stays resident in the cloud:

# First create a data directory to persist all API configuration, memory history, and evolved skills

mkdir -p ~/.hermes

# Start the container interactively and enter the first-run setup wizard

docker run -it --rm -v ~/.hermes:/opt/data nousresearch/hermes-agent setup

This command maps the agent's data directory (/opt/data inside the container) to the ~/.hermes directory on the host. The benefit is that even if you later pull a newer image to upgrade across versions, your session history and configuration files are preserved.

II. Model Access and Service Configuration (Provider Setup)

The core strength of Hermes is that it is not tied to any single model or cloud vendor. After installation, the agent needs to be assigned its “brain”.

1. Interactive model setup

After installation succeeds, start the interactive selection wizard by entering the following command in the terminal:

hermes model

This command lists all supported model providers in the terminal. You can select one with the keyboard arrow keys. Supported platforms include OpenAI Codex, Anthropic (Claude), DeepSeek, Alibaba Tongyi Qianwen (Qwen), Kimi (Moonshot), MiniMax, and OpenRouter (which aggregates more than 200 open-source and closed-source models), among others.

After you select a provider, the interface prompts you for the corresponding API key. For example, if you select DeepSeek, paste the key you obtained from its open platform and press Enter; the system then handles the underlying parameters and context length detection automatically.

2. Feature toggles and sandbox settings

If you need to control the agent's advanced permissions (for example, whether it may actively browse the web, or whether it may read and write the local file system), you can run the hermes tools command in the terminal at any time to switch individual modules on and off. Alternatively, run hermes setup at any time to return to the global settings and make global adjustments.

III. Core usage modes

1. Interactive command line (CLI Chat)

For developers and users who like working in the terminal, the most direct way to try it is to launch it there:

hermes

Running it opens a TUI (Terminal User Interface) that supports full-screen multi-line editing. It is more than a chat box: it works as an operating-system-level console. You can ask, “Write a Python script that renames all the image files in the current directory, and run it directly”, and it will generate the code, issue the system commands, and report the final execution results back to you.

Advanced interaction tips: type a slash (/) in the input box to open the full command menu; press Ctrl+B to use the computer's microphone for real-time voice input; enter /voice tts to have the agent read its results aloud.

2. Cross-platform messaging gateway deployment

Hermes Agent allows you to “deploy once and connect seamlessly across multiple devices”. You can turn it into a dedicated customer service or assistant and connect it to Telegram, Discord, Slack and other major IM programs.

Take a resident Docker gateway as an example. Once you have entered your Telegram Bot token through hermes setup, simply run the following command to start the container as a daemon:

docker run -d \
  --name hermes \
  --restart unless-stopped \
  -v ~/.hermes:/opt/data \
  nousresearch/hermes-agent gateway run

Once the container is running, your personal assistant is online 24 hours a day. You can send it messages and tasks from Telegram on your phone anytime, anywhere. Because the underlying session state is shared, work you started on your computer can be picked up in your phone's chat window on the way home.
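If you prefer to launch the gateway from a provisioning script rather than typing the command by hand, the same docker run invocation can be assembled as an argument list. This is a minimal sketch that only rebuilds the command shown above; the function name gateway_command is hypothetical, and the container name and data directory are parameters you may want to change.

```python
import shlex
from pathlib import Path


def gateway_command(data_dir, name="hermes"):
    """Build the argv list for the detached gateway container shown above."""
    return [
        "docker", "run", "-d",
        "--name", name,
        "--restart", "unless-stopped",   # restart after crashes and reboots
        "-v", f"{data_dir}:/opt/data",   # persist config, memory, and skills
        "nousresearch/hermes-agent", "gateway", "run",
    ]


if __name__ == "__main__":
    cmd = gateway_command(Path.home() / ".hermes")
    print(shlex.join(cmd))
    # To actually launch it: subprocess.run(cmd, check=True)
```

Passing the command as a list avoids shell quoting problems if the data directory path contains spaces.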

IV. Core features in practice

1. Cron automations in natural language

Setting up scheduled automation used to require developers to write unwieldy crontab expressions and system scripts. With Hermes Agent, you simply send it a chat message.

Practical example: tell it in the chat interface, “Please visit GitHub every night at 11pm to see whether any new issues were added to my repository today, extract the key content, summarize it into a Chinese report, and send it to my Slack channel”. The system interprets the recurring schedule and creates a persistent scheduled task in the background. You can use the /cron list command to view the running schedules.
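For comparison, the schedules mentioned in this article correspond to the following expressions in standard five-field crontab syntax (minute, hour, day of month, month, day of week). This is an illustrative sketch only: the source does not describe how Hermes Agent stores schedules internally, and the helper cron_expression is hypothetical.

```python
# Standard five-field cron order: minute hour day-of-month month day-of-week.
DAYS = {"sun": 0, "mon": 1, "tue": 2, "wed": 3, "thu": 4, "fri": 5, "sat": 6}


def cron_expression(hour, minute=0, day_of_week=None):
    """Return a cron expression for a daily or weekly schedule."""
    if not (0 <= hour <= 23 and 0 <= minute <= 59):
        raise ValueError("hour must be 0-23 and minute 0-59")
    # "*" means every day; otherwise use the numeric weekday (Sunday = 0).
    dow = "*" if day_of_week is None else str(DAYS[day_of_week.lower()[:3]])
    return f"{minute} {hour} * * {dow}"


if __name__ == "__main__":
    print(cron_expression(23))                        # every night at 11pm
    print(cron_expression(8))                         # every morning at 8:00
    print(cron_expression(16, day_of_week="Friday"))  # Fridays at 4:00pm
```

The three calls print `0 23 * * *`, `0 8 * * *`, and `0 16 * * 5`, which is what you would otherwise have to write by hand in a crontab.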

2. Secure sandboxed environment for code execution (Sandboxed Backend)

Giving an AI permission to execute system commands carries the risk of damaging the system, but terminal backend isolation keeps that risk contained.

Hands-on guide: in the config.yaml configuration file, set the value of terminal.backend to docker (remote ssh and the cloud sandbox modal are also options). From then on, all package dependency downloads, risky code debugging, system command probing, and similar operations performed by the agent are confined to a read-only Docker namespace sandbox with strict file and resource constraints. If a task goes wrong and crashes the sandboxed system, your real computer's files and operating system are not affected.
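As a rough illustration of that setting, the sketch below renders the YAML fragment for a chosen backend and rejects unknown values. Two things here are assumptions rather than documented facts: that the dotted name terminal.backend maps to a nested terminal: / backend: key in config.yaml, and that the values local and singularity are spelled this way (the article only spells out docker, ssh, and modal). The helper name backend_fragment is hypothetical.

```python
# Backends named in this article. The spellings "local" and "singularity"
# are assumptions; "docker", "ssh", and "modal" appear in the text.
KNOWN_BACKENDS = ("local", "docker", "ssh", "singularity", "modal")


def backend_fragment(backend):
    """Render a YAML fragment that sets terminal.backend to `backend`."""
    if backend not in KNOWN_BACKENDS:
        raise ValueError(f"unknown backend: {backend!r}")
    # Assumed layout: the dotted name terminal.backend is a nested key.
    return f"terminal:\n  backend: {backend}\n"


if __name__ == "__main__":
    print(backend_fragment("docker"), end="")
```

Check the generated fragment against your own config.yaml before relying on it, since the real file may contain other keys under terminal.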

3. Enable MCP server mode to link with modern IDE tools

For professional programmers, the agent can be run in the background in MCP server mode. In this mode it exposes its internally stored context over the protocol.

Hands-on guide: after starting the service locally, open an AI programming tool such as Cursor or VS Code and add the Hermes Agent on your LAN as an MCP source. From then on, the AI inside the IDE can communicate directly with the agent running in the background while you write code, so that multiple models cooperate across layers.

Application Scenarios

- 24/7 cross-device digital assistant with in-depth information retrieval

You can connect a server-resident Hermes deployment to the messaging app on your phone, such as Telegram. When you are out without a computer, you can still send a voice request from your phone (e.g., “Search for today's latest industry reports on large model technology and write a short summary of the key points”). Hermes automatically drives a browser in the cloud to crawl the web, filter out ads, and extract the body text, then calls the selected model to summarize it and sends the structured result back to your phone's chat window.

- Developer code debugging assistance and intelligent server O&M

Operations developers can send instructions to the agent directly from the system terminal or through internal channels such as WeCom (Enterprise WeChat). With independent sandbox testing and system shell privileges, developers can instruct Hermes to log in to remote servers to investigate error logs, redeploy and configure Nginx containers, and analyze the source of memory leaks. It can even trial-run system repair Python scripts in a secure, isolated environment and correct their errors on its own, acting as a “senior operations engineer”.

- Enterprise unattended data report processing and scheduled delivery

Cron scheduling combined with the parallel sub-agent architecture makes the system well suited to enterprise middle- and back-office data aggregation. You can give the agent a clear automation instruction: “Every Friday at 4:00pm, automatically read the project team's weekly report records from the Feishu document, turn the data into visualization charts, and distribute them to the head of each department through email and Slack.” The agent then sets up a stable workflow in the background and uses a built-in retry mechanism so that the job still completes on time when network or other errors occur.
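The source does not say how the retry mechanism works. As a generic illustration of the idea, and not Hermes Agent's actual implementation, a retry loop with exponential backoff can be sketched as follows; the function name run_with_retry and its parameters are hypothetical.

```python
import time


def run_with_retry(task, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call `task` until it succeeds, waiting longer after each failure."""
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            # Exponential backoff: base_delay, then 2x, then 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
```

A scheduled job would wrap its network step, for example `run_with_retry(send_report)`, where send_report stands for whatever function delivers the report. Doubling the delay gives a briefly unavailable service time to recover instead of being hit again immediately.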

FAQ

- Is Hermes Agent free? Will there be charges later?

Hermes Agent is fully open source under the MIT License, and all functional modules are free of charge. However, because the system relies on large language models (for example, through the OpenAI API, the DeepSeek open platform, or cloud compute nodes), you pay the token fees for those API calls directly to the model provider. The system adds no markup and charges no monthly fee.

- If my main work computer runs Windows, can I just double-click to install it?

The system currently depends on many native Unix ecosystem components, so running it natively from the Windows command line (CMD / PowerShell) is not supported at this time. Windows users are advised to install WSL2 (Windows Subsystem for Linux) with an Ubuntu environment and run the install script in that subsystem's terminal; alternatively, you can use Docker for containerized deployment.

- Since it can actively execute scripts and commands, what if it accidentally runs code that deletes system files? How is security guaranteed?

The system has a built-in backend execution isolation mechanism for exactly this risk. Set the execution backend to a Docker, remote SSH, Singularity, or Modal sandbox. All of the agent's terminal operations and system commands are then confined to an isolated environment with restricted privileges, so even if the model hallucinates and produces faulty code, it cannot damage the host's system files.

- If a particular model does not seem smart enough, is it easy to change the underlying model partway through?

Hermes Agent avoids vendor lock-in and requires no code changes. You can run the hermes model command at any time to switch between a list of 200+ models without changing a single line of underlying code, whether you are moving to a closed-source model that is strong at logical reasoning or an open-source model that specializes in writing code.

- How does its “self-evolutionary cycle” manifest itself?

When the agent helps you complete a complex task it has not handled before (e.g., collecting a company's data and converting it into a Markdown document in a specific format), its background learning system summarizes the whole workflow and execution logic and automatically generates a code-level skill in the system's skill library. The next time you send a similar instruction, it can skip the trial-and-error stage and call the learned skill directly, improving both response speed and accuracy.