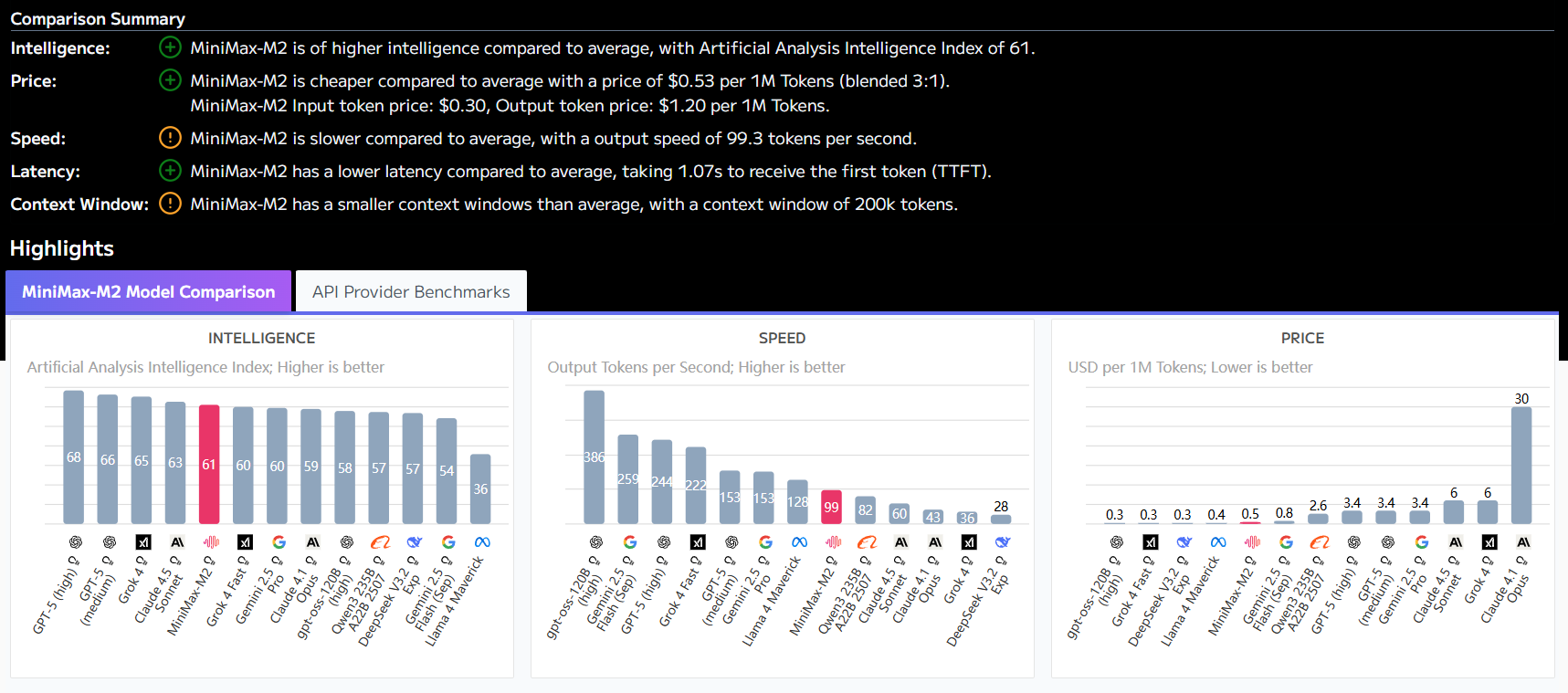

OpenAI has implemented stringent safety measures for GPT-OSS, including deliberative alignment training and an instruction hierarchy that together reduce the risk of prompt injection and other attacks. The company also put up $500,000 for a Red Teaming Challenge to encourage security researchers to find and report potential vulnerabilities. These mechanisms are intended to keep the model's behavior reliable across a wide range of application scenarios.

Notably, the safety design of GPT-OSS also covers chain-of-thought content, with the aim of preventing harmful information from leaking through intermediate reasoning steps. Developers should follow OpenAI's safety recommendations when deploying the model, especially for sensitive features such as tool calls and Python code execution, which carry additional risk.
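As a rough illustration of what such deployment precautions might look like in application code, the sketch below keeps intermediate reasoning text away from end users and only lets through tool calls that appear on an allowlist. The names `ModelTurn`, `ALLOWED_TOOLS`, `filter_tool_calls`, and `user_visible_text` are hypothetical and are not part of any OpenAI API; this is a minimal sketch under those assumptions, not OpenAI's recommended implementation.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist: tool names this application permits the model to call.
ALLOWED_TOOLS = {"search_docs", "get_weather"}


@dataclass
class ModelTurn:
    """Hypothetical container for one model response (not an OpenAI type)."""
    reasoning: str        # intermediate chain-of-thought text
    final_answer: str     # text intended for the end user
    tool_calls: list = field(default_factory=list)  # e.g. [{"name": ..., "args": {...}}]


def filter_tool_calls(turn):
    """Split the requested tool calls into (allowed, rejected) lists."""
    allowed, rejected = [], []
    for call in turn.tool_calls:
        if call.get("name") in ALLOWED_TOOLS:
            allowed.append(call)
        else:
            rejected.append(call)
    return allowed, rejected


def user_visible_text(turn):
    """Return only the final answer; the reasoning text is never shown."""
    return turn.final_answer
```

In this sketch a request for something like a Python execution tool would land in the rejected list unless the application explicitly adds it to the allowlist, ideally together with sandboxing.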

This answer comes from the article "GPT-OSS: OpenAI's Open Source Big Model for Efficient Reasoning".