GLM-5V-Turbo is a native multimodal coding base model built by Z.ai for visual programming. Aimed at the agent era, it moves beyond the text-only input of traditional models by deeply integrating visual and textual capabilities from the pre-training stage (using the next-generation CogViT visual encoder and an MTP architecture) and by expanding the context window to 200k tokens. The model not only understands complex design drafts, web interfaces, videos, and document layouts, but also generates complete, runnable code directly from them. In addition, GLM-5V-Turbo has strong tool-calling and GUI-manipulation capabilities, with native support for multimodal tools such as drawing bounding boxes, taking screenshots, and reading web pages, and it is deeply adapted to agent frameworks such as Claude Code and AutoClaw (Lobster). Thanks to multi-task collaborative reinforcement learning, its text-only programming and reasoning ability does not degrade. The model closes the full agent loop of “perceiving the environment → planning the action → executing the task”, which positions it as a foundation for AI-native applications.

Function List

- Native multimodal visual programming: Built on CogViT, a new-generation visual encoder, the model accurately analyzes design sketches, high-resolution screenshots, and complex layouts, and directly outputs runnable HTML/CSS/JS, React, and other front-end engineering code.

- No loss of text-only programming capability: Collaborative reinforcement learning across 30+ tasks introduces strong visual capabilities while ensuring that text-only capabilities such as back-end development, front-end refactoring, and repository exploration do not degrade.

- 200k large context window: Supports up to 200k tokens of multimodal context input, which makes it practical to analyze book-length illustrated documents and refactor huge codebases.

- Automated manipulation of real GUI environments: Leads on real GUI benchmarks such as AndroidWorld and WebVoyager, and supports native multimodal tool calls for search, bounding-box drawing, screenshots, and web page reading.

- Deep collaboration with mainstream agent frameworks: Natively and deeply adapted to Claude Code and OpenClaw/AutoClaw (Lobster Agent), the model gives agents “eyes” and significantly broadens their perception and execution boundaries.

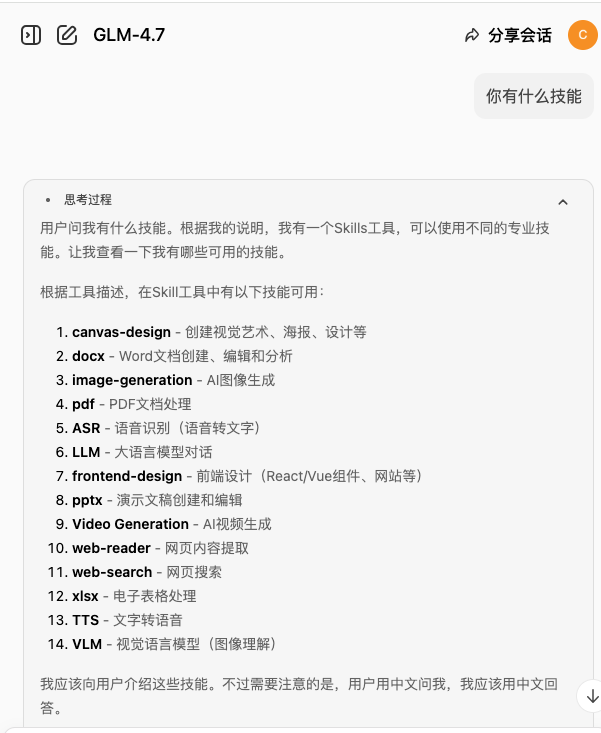

- Extensive official Skills library: Integrates with ClawHub for out-of-the-box image captioning and visual grounding, and links with GLM-OCR and GLM-Image for formula recognition and image generation.

Using Help

Welcome to GLM-5V-Turbo, a base model focused on visual programming and multimodal agent workflows. It can be used for basic “look and write” tasks as well as deep, system-level autonomous operation. To take full advantage of the model's 200k context and native multimodal fusion capabilities, please read the following operation and deployment guide.

I. Account Registration and SDK Environment Configuration

1. Get developer API credentials

Before you start, register an account on the Z.ai Developer Open Platform or the BigModel Open Platform (docs.bigmodel.cn/docs.z.ai). After logging in to the console, go to “API Management” and create a new API Key, which is the sole credential used to authenticate your calls to GLM-5V-Turbo.

2. Install and update the official SDK

We strongly recommend using the latest Python SDK, which supports passing in the rich multimodal toolchain. Run the following command in your terminal:

pip install zhipuai --upgrade

Note: Make sure your Python version is 3.8 or later.
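To keep the key out of source code, it can be read from an environment variable. The following is a minimal sketch; the variable name `ZHIPUAI_API_KEY` and the helper `load_api_key` are assumptions made for illustration, not names required by the platform.

```python
import os


def load_api_key(env_var: str = "ZHIPUAI_API_KEY") -> str:
    """Return the API key stored in the given environment variable.

    The variable name is an assumption for this sketch; use whatever
    name your own deployment defines.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable to your API key")
    return key
```

The result can then be passed to the client constructor, for example `ZhipuAI(api_key=load_api_key())`.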

II. Core Practice: Image as Code (Front-end Visual Programming)

This is where GLM-5V-Turbo is strongest: the model can “look at the picture and write the code”, restoring a design into a complete front-end project.

1. Basic restoration (Figma/screenshots to code)

You can pass UI screenshots or hand-drawn sketches to the model as Base64 or URLs.

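from zhipuai import ZhipuAI

client = ZhipuAI(api_key="YOUR_API_KEY")

response = client.chat.completions.create(
    model="glm-5v-turbo",
    messages=[
        {
            "role": "user",
            # One text instruction plus one image, sent in the same turn
            "content": [
                {"type": "text", "text": "Act as a senior front-end engineer. Analyze the layout, color scheme, component hierarchy, and interaction logic of this UI design draft, and use React + TailwindCSS to generate complete, runnable code that accurately reproduces the animations and visual details."},
                {"type": "image_url", "image_url": {"url": "https://example.com/design.png"}},
            ],
        }
    ],
    max_tokens=8192,
    temperature=0.1,  # A low temperature is recommended to keep the code logic rigorous
)

# Print the generated code returned by the model
print(response.choices[0].message.content)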
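The request above passes the image by URL. For a local file, the Base64 route mentioned earlier could look like the sketch below. It assumes the endpoint accepts a `data:` URL in the same `image_url.url` field; some APIs expect the raw Base64 string instead, so check the platform documentation. The helper names `image_to_data_url` and `image_part` are illustrative.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path: str) -> str:
    """Encode a local image file as a data URL (data:<mime>;base64,<payload>)."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"Not a recognized image file: {path}")
    payload = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{payload}"


def image_part(path: str) -> dict:
    """Build an image content part in the same shape as the URL example.

    Assumption: the API accepts a data URL where it accepts an http(s) URL.
    """
    return {"type": "image_url", "image_url": {"url": image_to_data_url(path)}}
```

`image_part("design.png")` can then replace the URL-based entry in the `content` list.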

2. Interactive visual editing

After generating the first version of the code, you can take a screenshot of the currently rendered web page and add a text instruction (e.g., “Switch the top navigation bar to dark mode and add a pop-up confirmation to the submit button in the bottom right corner”). The model then locates and modifies the corresponding code block based on the new screenshot and the conversation history.
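A follow-up edit of this kind amounts to appending one more user turn, containing the new screenshot and the instruction, to the existing conversation. The sketch below only builds the message list; `build_edit_turn` is a hypothetical helper, and the URL and history contents are placeholders.

```python
from typing import Dict, List


def build_edit_turn(history: List[Dict], screenshot_url: str, instruction: str) -> List[Dict]:
    """Return a new message list: prior turns plus a screenshot-and-instruction turn."""
    new_turn = {
        "role": "user",
        "content": [
            {"type": "text", "text": instruction},
            {"type": "image_url", "image_url": {"url": screenshot_url}},
        ],
    }
    # Copy rather than mutate, so the caller's history stays untouched.
    return list(history) + [new_turn]


history = [
    {"role": "user", "content": "Generate the page from the design draft."},
    {"role": "assistant", "content": "<first version of the code>"},
]
messages = build_edit_turn(
    history,
    "https://example.com/rendered.png",  # placeholder screenshot URL
    "Switch the top navigation bar to dark mode.",
)
```

The resulting `messages` list is what would be sent as the `messages` argument of the next request.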

III. Advanced Practice: Putting Eyes on the Agent (GUI Autonomous Exploration and Replication)

GLM-5V-Turbo builds in agentic meta-capabilities starting from pre-training, and is deeply adapted to Claude Code and the AutoClaw framework.

1. Connecting to Claude Code for site replication

Point the underlying model configuration of Claude Code at GLM-5V-Turbo's API. Once that is done, you can give a high-level command such as: “Go explore example.com, learn about its structure, and generate replica code”.

At this point, the model draws on its multimodal toolchain:

- Calling the [Screenshot / Read Web Page] tool: Captures a live view of the site.

- Calling the [Visual Grounding / Bounding Box] tool: Recognizes clickable elements on the screen.

- Action execution: The model returns click and navigation commands, moves through the pages, and maps out how the pages link to one another.

- Final summary: Using the 200k context window, the model integrates all the visual material and interaction details it has “seen” and generates multi-page front-end engineering code in one pass.
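The loop described above can be sketched in a framework-agnostic way. Everything here is hypothetical: the JSON action schema (`{"type": "click", "x": ..., "y": ...}` or `{"type": "done"}`), and the `take_screenshot`, `ask_model`, and `click` callbacks, which stand in for whatever the agent framework actually provides.

```python
import json
from typing import Callable, Dict, List


def parse_action(reply: str) -> Dict:
    """Parse the model reply as a JSON action; this schema is hypothetical."""
    action = json.loads(reply)
    if action.get("type") not in {"click", "done"}:
        raise ValueError(f"Unsupported action: {action!r}")
    return action


def explore(
    take_screenshot: Callable[[], str],
    ask_model: Callable[[str, List[Dict]], str],
    click: Callable[[int, int], None],
    max_steps: int = 10,
) -> List[Dict]:
    """Run the perceive -> plan -> act loop until the model says 'done'."""
    trace: List[Dict] = []
    for _ in range(max_steps):
        screenshot = take_screenshot()                        # perceive
        action = parse_action(ask_model(screenshot, trace))   # plan
        trace.append({"screenshot": screenshot, "action": action})
        if action["type"] == "done":
            break
        click(action["x"], action["y"])                       # act
    return trace
```

The returned trace (screenshots plus actions) is the material a final request would summarize into code. The `max_steps` cap is a design choice to keep a misbehaving loop from running indefinitely.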

2. AutoClaw: automated analysis of financial data

If you use AutoClaw, the model can serve as its visual engine. Take the “stock analyzer” Skill as an example:

- Procedure: In the AutoClaw console, switch the large model to GLM-5V-Turbo.

- Set the task: “Help me analyze today's stock price of such-and-such company and generate a professional analysis report”.

- Model execution: The model automatically visits major financial websites or terminals to capture K-line charts, valuation range charts, and screenshots of brokerage firms' research reports that contain complex charts. Relying on the new-generation CogViT visual encoder, the model can “read” K-line trends and chart data like a human analyst, collect these sources in parallel in about 60 seconds, and ultimately output a professional analysis slide deck or research report with illustrations and text.

IV. Integration and use of the official skills library (ClawHub Skills)

To extend multimodal perception to a wider range of scenarios, Zhipu (Z.ai) provides a full set of official, out-of-the-box Skills on ClawHub (clawhub.ai).

Core Skills at a glance:

- GLM-OCR linkage: For challenging scanned scientific documents, the OCR Skill is called to accurately recognize handwriting, complex mathematical formulas, and cross-page tables.

- Image captioning and visual grounding: Lets the model return pixel-level coordinates of specific elements on the screen, which is well suited to automating RPA processes (e.g., automated tapping on a phone screen).

- Multimodal search and deep research: Combines web-access tools to collect illustrated web content on a given topic from across the web and summarize it in depth using the long-context capability.
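For the grounding use case, the returned coordinates usually have to be turned into a tap position on the device. The sketch below assumes the box is given as `(left, top, right, bottom)` in screenshot pixels, which is an assumption about the output format, and that the screenshot may have a different resolution from the physical screen. `tap_point` is a hypothetical helper.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in screenshot pixels


def tap_point(box: Box, shot_size: Tuple[int, int], screen_size: Tuple[int, int]) -> Tuple[int, int]:
    """Map the center of a box in the screenshot to device-screen coordinates."""
    left, top, right, bottom = box
    if right <= left or bottom <= top:
        raise ValueError(f"Degenerate box: {box}")
    # Center of the element in screenshot space
    cx, cy = (left + right) / 2, (top + bottom) / 2
    # Scale factors from screenshot resolution to device resolution
    sx = screen_size[0] / shot_size[0]
    sy = screen_size[1] / shot_size[1]
    return round(cx * sx), round(cy * sy)
```

For example, a box of `(100, 200, 300, 400)` in a 540×960 screenshot maps to the point `(400, 600)` on a 1080×1920 screen.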

Installation and Calling Methods:

Developers can pull the corresponding Skill source code from GitHub (github.com/zai-org/GLM-skills), register it as a standard Python function, and pass it in the GLM-5V-Turbo request body via the tools parameter. The model then decides when to invoke these tools.
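As a rough illustration of this registration step, the sketch below describes one Python function in the widely used `"type": "function"` schema layout and dispatches a call by name. The `read_webpage` function is a hypothetical stand-in, not an actual Skill from the repository, and the exact schema the API expects should be confirmed against the official documentation.

```python
import json
from typing import Callable, Dict


def read_webpage(url: str) -> str:
    """Hypothetical Skill: a stand-in that would fetch and return page text."""
    return f"(contents of {url})"


# Tool description in the common function-calling layout (assumed here).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "read_webpage",
            "description": "Fetch a web page and return its readable text.",
            "parameters": {
                "type": "object",
                "properties": {"url": {"type": "string", "description": "Page URL"}},
                "required": ["url"],
            },
        },
    }
]

# Maps tool names the model may request to local Python callables.
REGISTRY: Dict[str, Callable[..., str]] = {"read_webpage": read_webpage}


def dispatch(name: str, arguments: str) -> str:
    """Run the Python function the model asked for; arguments is a JSON string."""
    if name not in REGISTRY:
        raise KeyError(f"Unknown tool: {name}")
    return REGISTRY[name](**json.loads(arguments))
```

`TOOLS` would be passed as the `tools` argument of the request. When a response contains a tool call, its function name and JSON-encoded arguments go to `dispatch`, and the result is sent back to the model in a follow-up message.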

V. Performance Optimization and Considerations

- Token accounting and cropping: Image input consumes context tokens, so in long-horizon, multi-round GUI agent tasks it is recommended to diff consecutive screenshots on the client side and send only the changed page region. This makes better use of the 200k capacity and lowers call cost.

- System prompt settings: In agentic tasks, it is recommended to state the model's role and the output format (e.g., a specific JSON action format) explicitly in the system prompt. The model's collaborative reinforcement learning training helps it adhere closely to the requested format.
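The screenshot-diffing idea can be sketched as finding the bounding box of changed pixels between two frames. To stay dependency-free, this version works on frames represented as nested lists of RGB tuples, which is a simplification. With Pillow, `ImageChops.difference(a, b).getbbox()` computes the same kind of box for real images.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]
Frame = List[List[Pixel]]  # rows of RGB pixels


def changed_bbox(prev: Frame, curr: Frame) -> Optional[Tuple[int, int, int, int]]:
    """Return (left, top, right, bottom) of the changed area, or None if identical.

    right and bottom are exclusive, so the box can be used directly for cropping.
    """
    if len(prev) != len(curr) or any(len(a) != len(b) for a, b in zip(prev, curr)):
        raise ValueError("Frames must have the same dimensions")
    xs, ys = [], []
    for y, (row_a, row_b) in enumerate(zip(prev, curr)):
        for x, (a, b) in enumerate(zip(row_a, row_b)):
            if a != b:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None  # nothing changed, so no new image needs to be sent
    return min(xs), min(ys), max(xs) + 1, max(ys) + 1
```

If the function returns `None`, the client can skip sending a new image for that step. Otherwise only the cropped region needs to be uploaded.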

Application Scenarios

- Image-as-code with automatic front-end replication

Scenario Description: Developers provide sketches, Figma designs, or screenshots of reference websites. Drawing on its visual and code comprehension capabilities, the model analyzes the component hierarchy, layout, and interaction logic, and generates high-quality, directly runnable front-end project code in a single step, substantially improving development efficiency.

- GUI auto-exploration and site-wide replication

Scenario Description: Combined with Claude Code and other agent frameworks, the model browses the target website autonomously like a real user through the closed loop of “screenshot perception → bounding-box analysis → click planning → exploration”. It maps out how pages link to one another, collects the details of visual interactions, and then outputs the engineering code needed to restore the whole site.

- Interpretation of complex charts and generation of specialized financial research reports

Scenario Description: Relying on its multimodal long-context processing capability, once connected to AutoClaw the model can independently query and “understand” multi-source financial image data, including K-line trends, financial charts, and brokerage ratings, processing these sources in parallel and then writing high-quality, in-depth research reports that combine charts and text.

- Agent-based automation (RPA) and automated testing

Scenario Description: In AndroidWorld and other mobile, web, or desktop testing environments, the model does not need access to the underlying source code. It “looks” at the screen directly, uses visual grounding to identify interactive elements, and returns the coordinates to operate on, enabling difficult black-box automated testing and cross-software RPA for business operations.

QA

- Do GLM-5V-Turbo's original text-only programming and logical reasoning capabilities degrade with the introduction of visual capabilities?

A: No. In the post-training phase, GLM-5V-Turbo employs collaborative reinforcement learning (RL) across more than 30 task types covering sub-domains such as STEM, video, and GUI Agent. This mitigates the instability of single-domain training, so that alongside its visual capabilities the model's performance on back-end development, front-end authoring, and text-only codebase exploration (on benchmarks such as CC-Bench-V2) remains industry-leading.

- What native multimodal tool use does GLM-5V-Turbo support?

A: In addition to regular text tool calls, GLM-5V-Turbo natively adds multimodal tools to the perception and action chain, including multimodal search, bounding-box drawing, screenshot analysis, and web page reading. This greatly expands the model's action space in visual interaction scenarios.

- What exactly is meant by “deep adaptation to Claude Code and Lobster Agent”?

A: It means the model is specially optimized for today's mainstream agent frameworks at both the underlying data level (e.g., introducing GUI Agent PRM data to reduce hallucination) and the interface level. When connected to AutoClaw (Lobster) or Claude Code, the model can execute the closed loop of “reading the current environment → planning the next action → executing the task (clicking or entering code)”, in effect giving the agent intelligent “eyes”.

- Can GLM-5V-Turbo handle extremely long multimodal scientific papers or large code bases?

A: Yes. GLM-5V-Turbo's context window is extended to 200k tokens. It can read dozens of pages of richly illustrated literature in a single conversation, or ingest very large code repository files, and perform precise multimodal information retrieval and logic reconstruction over very long contexts.